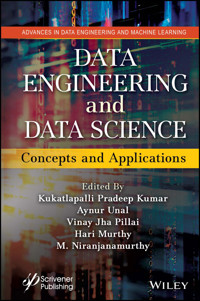

Data Engineering and Data Science E-Book

- Publisher: John Wiley & Sons

- Category: Science and New Technologies

- Language: English

Written and edited by one of the most prolific and well-known experts in the field and his team, this exciting new volume is the "one-stop shop" for the concepts and applications of data science and engineering for data scientists across many industries. The field of data science is incredibly broad, encompassing everything from cleaning data to deploying predictive models. However, it is rare for any single data scientist to work across the whole spectrum day to day. Data scientists usually focus on a few areas and are complemented by a team of other scientists and analysts. Data engineering is also a broad field, but no individual data engineer needs to know the whole spectrum of skills. Data engineering is the aspect of data science that focuses on practical applications of data collection and analysis. For all the work that data scientists do to answer questions using large sets of information, there have to be mechanisms for collecting and validating that information. In this exciting new volume, the team of editors and contributors sketch the broad outlines of data engineering, then walk through more detailed descriptions of specific data engineering roles. Data-driven discovery is revolutionizing the modeling, prediction, and control of complex systems. This book brings together machine learning, engineering mathematics, and mathematical physics to integrate modeling and control of dynamical systems with modern methods in data science. It highlights many of the recent advances in scientific computing that enable data-driven methods to be applied to a diverse range of complex systems, such as turbulence, the brain, climate, epidemiology, finance, robotics, and autonomy. Whether for the veteran engineer or scientist working in the field or laboratory, or the student or academic, this is a must-have for any library.

Page count: 598

Year of publication: 2023

Contents

Cover

Table of Contents

Series Page

Title Page

Copyright Page

Preface

1 Quality Assurance in Data Science: Need, Challenges and Focus

1.1. Introduction

1.2. Testing and Quality Assurance

1.3. Product Quality and Test Efforts

1.4. Data Masking in Data Model and Associated Risks

1.5. Prediction in Data Science

1.6. Role of Metrics in Evaluation

1.7. Quantity of Data in Quality Assurance

1.8. Identifying the Right Data Sources

1.9. Conclusion

References

2 Design and Implementation of Social Media Mining - Knowledge Discovery Methods for Effective Digital Marketing Strategies

2.1. Introduction

2.2. Literature Review

2.3. Novel Framework for Social Media Data Mining and Knowledge Discovery

2.4. Classification for Comparison Analysis

2.5. Clustering Methodology to Provide Digital Marketing Strategies

2.6. Experimental Results

2.7. Conclusion

References

3 A Study on Big Data Engineering Using Cloud Data Warehouse

3.1. Introduction

3.2. Comparison Study of Different Cloud Data Warehouses

3.3. Snowflake Cloud Data Warehouse

3.4. Google BigQuery Cloud Data Warehouse

3.5. Microsoft Azure Synapse Cloud Data Warehouse

3.6. Informatica Intelligent Cloud Services (IICS)

3.7. Conclusion

Acknowledgements

References

4 Data Mining with Cluster Analysis Through Partitioning Approach of Huge Transaction Data

4.1. Introduction

4.2. Methodology Used in Proposed Cluster Analysis System

4.3. Literature Survey on Existing Systems

4.4. Conclusion

References

5 Application of Data Science in Macromodeling of Nonlinear Dynamical Systems

5.1. Introduction

5.2. Nonlinear Autonomous Dynamical System

5.3. Nonlinear System - MOR

5.4. Data Science Life Cycle

5.5. Artificial Neural Network in Modeling

5.6. Neuron Spiking Model Using FitzHugh-Nagumo (F-N) System

5.7. Ring Oscillator Model

5.8. Nonlinear VLSI Interconnect Model Using Telegraph Equation

5.9. Macromodel Using Machine Learning

5.10. MOR of Dynamical Systems Using POD-ANN

5.11. Numerical Results

5.12. Conclusion

References

6 Comparative Analysis of Various Ensemble Approaches for Web Page Classification

6.1. Introduction

6.2. Literature Survey

6.3. Material and Methods

6.4. Ensemble Classifiers

6.5. Results

6.6. Conclusion

Acknowledgement

References

7 Feature Engineering and Selection Approach Over Malicious Image

7.1. Introduction

7.2. Feature Engineering Techniques

7.3. Malicious Feature Engineering

7.4. Image Processing Technique

7.5. Image Processing Techniques for Analysis on Malicious Images

7.6. Conclusion

References

8 Cubic-Regression and Likelihood Based Boosting GAM to Model Drug Sensitivity for Glioblastoma

8.1. Introduction

8.2. Literature Survey

8.3. Materials and Methods

8.4. Evaluations, Results and Discussions

Conclusion

References

9 Unobtrusive Engagement Detection through Semantic Pose Estimation and Lightweight ResNet for an Online Class Environment

9.1. Introduction

9.2. Related Work

9.3. Proposed Methodology

9.4. Experimentation

9.5. Results and Discussions

Conclusion

References

10 Building Rule Base for Decision Making - A Fuzzy-Rough Approach

10.1. Introduction

10.2. Literature Review

10.3. Discretization of the Dataset Using Fuzzy Set Theory

10.4. Description of the Dataset

10.5. Process Involved in Proposed Work

10.6. Experiment

10.7. Evaluation Result

10.8. Discussion

Conclusion

References

11 An Effective Machine Learning Approach to Model Healthcare Data

11.1. Introduction

11.2. Types of Data in Healthcare

11.3. Big Data in Healthcare

11.4. Different V’s of Big Data

11.5. About COPD

11.6. Methodology Implemented

Conclusion

References

12 Recommendation Engine for Retail Domain Using Machine Learning Techniques

12.1. Introduction

12.2. Proposed System

12.3. Results

12.4. Conclusion

References

13 Mining Heterogeneous Lung Cancer from Computer Tomography (CT) Scan with the Confusion Matrix

13.1. Introduction

13.2. Literature Review

13.3. Methodology

13.4. Result

13.5. Conclusion and Future Scope

References

14 ML Algorithms and Their Approach on COVID-19 Data Analysis

14.1. Introduction

14.2. DataSet

14.3. Types of Machine Learning Algorithms

14.4. Conclusion

References

15 Analysis and Design for the Early Stage Detection of Lung Diseases Using Machine Learning Algorithms

15.1. Introduction

15.2. Machine Learning Algorithms

15.3. Evaluation Metrics and Comparative Results for Early Detection of Lung Diseases

15.4. Conclusion

References

16 Estimation of Cancer Risk through Artificial Neural Network

16.1. Introduction

16.2. Case Studies Related to Cancer Risk Estimation Using ANN

16.3. Datasets Used in Cancer Risk Estimation

16.4. Discussion

16.5. Future Scope

16.6. Conclusion

References

17 Applications and Advancements in Data Science and Analytics

17.1. Data Science and Analytics in Software Testing

17.2. Applications of Data Science and Analytics

17.3. Selenium Testing Tool in Data Science

17.4. Challenges and Advancements in Data Science

17.5. Data Science and Analytics Tools

17.6. Conclusion

References

About the Editors

Index

Also of Interest

End User License Agreement

List of Tables

Chapter 1

Table 1.1 ARMA model results.

Chapter 2

Table 2.1 Differences between supervised, unsupervised and semi-supervised met...

Table 2.2 Dataset description.

Table 2.3 Classification results of bayes and decision tree classifiers.

Table 2.4 Clusters formed using expectation maximization method.

Chapter 4

Table 4.1 Data object points, isolated partitions and the cluster number.

Table 4.2 Computing resource comparison of partitioning based cluster analysis...

Chapter 5

Table 5.1 Comparison of three models.

Table 5.2 Performance of POD and neural network approximation of nonlinear rin...

Table 5.3 Time taken for full order computation and neural network approximati...

Table 5.4 Performance of POD and neural network approximation of ring oscillat...

Table 5.5 Execution Time taken for full order model evaluation and neural netw...

Table 5.6 Performance index - Mean Square Error (MSE) and relative error $L_2(\Omega)$...

Table 5.7 Execution time* (in seconds) taken for full order model evaluation a...

Table 5.8 Performance of neural network approximation of nonlinear interconnec...

Table 5.9 Execution time taken by neural network approximation and full order ...

Chapter 6

Table 6.1 Summary of selected reported works for web-page classification.

Table 6.2 Original content after removing regular expressions, Stop words and ...

Table 6.3 Bagging meta estimator with various base estimators for DS1.

Table 6.4 Number of trees versus accuracy and number of trees versus time.

Table 6.5 Size of the random subset versus accuracy in bagging meta estimator....

Table 6.6 Best parameter of bagging meta estimator for DS1.

Table 6.7 Number of trees versus accuracy and number of trees versus time for ...

Table 6.8 min_samples_split versus accuracy in bagging random forest.

Table 6.9 Random forest best parameters for DS1.

Table 6.10 Learning rate versus accuracy in AdaBoost algorithm.

Table 6.11 Best parameters of Adaboost for DS1.

Table 6.12 max_depth versus accuracy for gradient tree boosting.

Table 6.13 max_leaf_nodes versus accuracy for gradient tree boosting.

Table 6.14 Gradient tree boosting best parameters for DS1.

Table 6.15 min_child_weight versus accuracy in XGBoost.

Table 6.16 Subsample versus accuracy in XGBoost.

Table 6.17 XGBoost best parameters.

Table 6.18 Accuracy of Single classifier and ensemble classifier in DS1 and DS...

Table 6.19 Accuracy of Single classifier and ensemble classifier in DS1 and DS...

Chapter 7

Table 7.1 Techniques available in feature selection process.

Chapter 8

Table 8.1 Different measures of performance for GAM.

Table 8.2 Statistical test via anova function for GAM.

Chapter 9

Table 9.1 The surrogate model algorithm.

Table 9.2 Parameter setting detail.

Table 9.3 Metrics using daisee dataset.

Chapter 10

Table 10.1 Data collected as a part of the study.

Table 10.2 Linguistic labels assigned for the variables.

Table 10.3 Decision table.

Table 10.4 Crisp intervals defined for the variables selected.

Table 10.5 Decision table after crisp discretization.

Table 10.6 Accuracy of rough approximation after fuzzy and crisp discretizatio...

Table 10.7 Number of rules generated after fuzzy based and set-based discretiz...

Table 10.8 Number of certain rules generated by LEM2 and Apriori algorithms.

Chapter 11

Table 11.1 Big data in healthcare.

Table 11.2 Performance analysis.

Chapter 14

Table 14.1 Diabetes symptoms and condition.

Table 14.2 Diabetes symptoms.

Chapter 15

Table 15.1 Confusion matrix representation.

Table 15.2 Performance metrics using machine learning techniques.

Chapter 16

Table 16.1 Survey of ANN for cancer risk estimation.

Table 16.2 Characteristics of the participants.

Table 16.3 Comparison of different ML methods with ANN for BC prediction.

Scrivener Publishing
100 Cummings Center, Suite 541J
Beverly, MA 01915-6106

Advances in Data Engineering and Machine Learning

Series Editors: Niranjanamurthy M, PhD, Juanying XIE, PhD, and Ramiz Aliguliyev, PhD

Scope: Data engineering is the aspect of data science that focuses on practical applications of data collection and analysis. For all the work that data scientists do to answer questions using large sets of information, there have to be mechanisms for collecting and validating that information. Data engineers are responsible for finding trends in data sets and developing algorithms to help make raw data more useful to the enterprise.

It is important to stay aligned with business goals when working with data, especially for companies that handle large and complex datasets and databases. Data engineering encompasses DevOps, data science, and machine learning engineering. DevOps (development and operations) is an enterprise software development term for a type of agile relationship between development and IT operations. The goal of DevOps is to change and improve this relationship by advocating better communication and collaboration between these two business units. Data science is the study of data. It involves developing methods of recording, storing, and analyzing data to effectively extract useful information. The goal of data science is to gain insights and knowledge from any type of data, both structured and unstructured.

Machine learning engineers are sophisticated programmers who develop machines and systems that can learn and apply knowledge without specific direction. Machine learning engineering is the process of combining software engineering principles with analytical and data science knowledge in order to take an ML model that has been created and make it available for use by a product or its consumers. “Advances in Data Engineering and Machine Learning Engineering” will reach a wide audience including data scientists, engineers, industry, researchers and students working in the field of Data Engineering and Machine Learning Engineering.

Publishers at Scrivener
Martin Scrivener ([email protected])
Phillip Carmical ([email protected])

Data Engineering and Data Science

Concepts and Applications

Edited by

Kukatlapalli Pradeep Kumar

Aynur Unal

Vinay Jha Pillai

Hari Murthy

and

M. Niranjanamurthy

This edition first published 2023 by John Wiley & Sons, Inc., 111 River Street, Hoboken, NJ 07030, USA and Scrivener Publishing LLC, 100 Cummings Center, Suite 541J, Beverly, MA 01915, USA
© 2023 Scrivener Publishing LLC
For more information about Scrivener publications please visit www.scrivenerpublishing.com.

All rights reserved. No part of this publication may be reproduced, stored in a retrieval system, or transmitted, in any form or by any means, electronic, mechanical, photocopying, recording, or otherwise, except as permitted by law. Advice on how to obtain permission to reuse material from this title is available at http://www.wiley.com/go/permissions.

Wiley Global Headquarters
111 River Street, Hoboken, NJ 07030, USA

For details of our global editorial offices, customer services, and more information about Wiley products visit us at www.wiley.com.

Limit of Liability/Disclaimer of Warranty

While the publisher and authors have used their best efforts in preparing this work, they make no representations or warranties with respect to the accuracy or completeness of the contents of this work and specifically disclaim all warranties, including without limitation any implied warranties of merchantability or fitness for a particular purpose. No warranty may be created or extended by sales representatives, written sales materials, or promotional statements for this work. The fact that an organization, website, or product is referred to in this work as a citation and/or potential source of further information does not mean that the publisher and authors endorse the information or services the organization, website, or product may provide or recommendations it may make. This work is sold with the understanding that the publisher is not engaged in rendering professional services. The advice and strategies contained herein may not be suitable for your situation. You should consult with a specialist where appropriate. Neither the publisher nor authors shall be liable for any loss of profit or any other commercial damages, including but not limited to special, incidental, consequential, or other damages. Further, readers should be aware that websites listed in this work may have changed or disappeared between when this work was written and when it is read.

Library of Congress Cataloging-in-Publication Data

ISBN 9781119841876

Front cover images supplied by Pixabay.com
Cover design by Russell Richardson

Preface

Data engineering is the aspect of data science that focuses on practical applications of data collection and analysis. The data science field is incredibly broad, encompassing everything from cleaning data to deploying predictive models. It complements emerging areas such as machine learning, artificial intelligence and cyber security analytics. Decision making is a vital component of an industry economy driven by algorithms in data science and allied fields.

Data-driven discovery is revolutionizing the modeling, prediction, and control of complex systems. This book brings together machine learning, engineering mathematics, and mathematical physics to integrate modeling and control of dynamical systems with modern methods in data science. It highlights many of the recent advances in scientific computing that enable data-driven methods to be applied to a diverse range of complex systems, such as turbulence, the brain, climate, epidemiology, finance, robotics, and autonomy. The book introduces the foundational concepts of data engineering – data models, value chain models and team dynamics. Aimed at advanced undergraduate and beginning graduate students in the engineering and physical sciences, the book presents a range of topics and methods from introductory to state of the art.

This book follows the sequential approach used to develop an artifact, starting with feasibility studies and communication and evolving towards construction and deployment. Related topics have been identified across the chapters contributed by various authors and arranged into a coherent lineage.

1 Quality Assurance in Data Science: Need, Challenges and Focus

Jasmine K.S.*, Ajay D. K. and Aditya Raj

Dept. of MCA, RV College of Engineering®, Bengaluru, Karnataka, India

Abstract

It is widely accepted that the quality of a product is assured through testing. With rapid developments in data science, research is under way on the proper management of data. When data is used correctly, test engineers can learn about their users from it. The associated risks can be predicted, with a focus on data masking based on the data model. Prescriptive and predictive analyses can be more accurate if suitable techniques are developed and their accuracy is measured using metrics. Preparing data of the required quality and identifying the possible data sources are challenging tasks for a data scientist. The effective and systematic use of advanced technologies continues to be an elusive goal for many organizations. These technologies include high-speed hardware and network computing, cloud computing and cross-platform tools. In this context, the chapter investigates the feasibility of novel and practical solutions.

Keywords: Quality assurance, testing, data science, data analysis, decision making

1.1 Introduction

1.1.1 Quality Assurance and Testing

In the traditional software development approach, Quality Assurance was performed in the later stages of the development process, and feedback was collected for improvement. Almost every organization has a Quality Assurance team responsible for identifying product defects and resolving them before the product is released.

In the Agile development approach, by contrast, the Quality Assurance (QA) team works collaboratively with the development team and shares responsibility for improving the quality of the product. Irrespective of the project and domain, QA plays an important role in delivering high-quality products. It helps developers establish goals and define quality standards. It also helps identify and resolve defects in products before they are released to market.

1.1.2 Data Science and Quality Assurance

Data is available in structured and unstructured formats. Methods and algorithms are needed to extract knowledge and draw inferences so that the available data can be turned into insight applicable in any domain. In the context of data science, QA is the process of analyzing and modeling the available data to ensure that it is of high quality and meets quality standards. Models have to be built with a variety of data sets. Trained models also have to undergo robustness tests that mirror real-world use cases [6].

1.1.3 Background

Data science works to verify the general consistency of relevant data and applies scientific methods to ensure data quality. Domain knowledge is necessary not only to ensure data quality but also to avoid errors and inconsistencies in the data collected during the quality assurance process [1]. Once data has been collected, interpreting it is another major challenge. Quality assurance should therefore be a continuous process throughout product development, from data collection to delivery of the product. Expert opinion adds value here, because domain experts play an important role in validating the results of data analysis. Finding and resolving quality issues after the product has been delivered wastes time, money and effort [2, 9]. Efficient data analytics requires a variety of data sets [3]. Data analytics involves frequent, time-consuming and iterative processing, so systems that focus on data quality are needed [4, 10]. Software quality and hardware quality play an equally important role [5].

1.2 Testing and Quality Assurance

Testing is a process that verifies the intended behavior of a product within a given time frame and helps avoid additional effort, delays and cost overruns. System testing ensures not only product quality but also process quality.

1.2.1 Key Terminologies Associated With Testing

1.2.1(a) Defect count: A defect count is one of the measures of product quality. It is the number of defects found during testing. The defect rate is computed as the number of defective units observed divided by the number of units tested. For example, if 5 out of 10 tested units are defective, the defect rate is 5/10 = 0.5, or 50%.
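The defect-rate formula can be expressed as a small function. This is a minimal sketch. The name `defect_rate` and the input checks are illustrative additions and do not come from the chapter.

```python
def defect_rate(defective_units: int, units_tested: int) -> float:
    """Return defective units / units tested as a fraction between 0 and 1."""
    if units_tested <= 0:
        raise ValueError("units_tested must be positive")
    if not 0 <= defective_units <= units_tested:
        raise ValueError("defective_units must lie between 0 and units_tested")
    return defective_units / units_tested


# The example from the text: 5 defective units out of 10 tested.
rate = defect_rate(5, 10)
print(f"defect rate = {rate:.2f} ({rate:.0%})")  # defect rate = 0.50 (50%)
```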

1.2.1(b) Test case execution: During test execution, the developed code is run with the generated test cases and the actual output is compared with the expected output. Afterwards, the bugs found and the execution status of each test are recorded for follow-up action. The steps involved in test execution are:

Step 1: Gathering testing requirements

Step 2: Test plan

Step 3: Test design

Step 4: Test execution

Step 5: Defect reporting and tracking

Step 6: Defect Mapping
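Steps 4 and 5 (test execution, then defect reporting) come down to a loop. The loop runs each test case, compares the actual output with the expected output and records a status. This is a minimal sketch. The `Case` record, `execute_tests` and the deliberately buggy `apply_discount` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Case:
    case_id: str
    inputs: tuple
    expected: Any


def execute_tests(func: Callable, cases: list) -> dict:
    """Run each case, compare actual with expected output, record status and defects."""
    status = {}
    defects = []
    for case in cases:
        try:
            actual = func(*case.inputs)
        except Exception as exc:  # a crash counts as a failed test, not a harness error
            actual = f"error: {exc}"
        if actual == case.expected:
            status[case.case_id] = "PASS"
        else:
            status[case.case_id] = "FAIL"
            defects.append(f"{case.case_id}: expected {case.expected!r}, got {actual!r}")
    return {"status": status, "defects": defects}


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical unit under test with a seeded bug: percent is never divided by 100."""
    return round(price * (1 - percent), 2)


cases = [
    Case("TC1", (100.0, 0), 100.0),
    Case("TC2", (100.0, 10), 90.0),
]
report = execute_tests(apply_discount, cases)
print(report["status"])   # {'TC1': 'PASS', 'TC2': 'FAIL'}
print(report["defects"])  # ['TC2: expected 90.0, got -900.0']
```

The `defects` list is the input to the defect reporting, tracking and mapping steps.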

1.2.1(c) Test execution classification: Testing can be classified by the purpose for which it is executed, for example performance testing, defect testing and regression testing. During test execution, the main focus is to verify how far the actual results deviate from the expected results.

1.2.1(d) Pre-requisites for test execution phase

The test plan, test cases and test data should be ready

Test environment setup and its validation

Clear understanding of exit criteria

1.2.1(e) Post-requirements for test execution phase

Validation of actual results with expected results

Defects should be closed or deferred

Test execution and defects summary report

Prioritize the test plans based on the identified risks

Continue the process

1.2.1(f) Test velocity

Test velocity is the number of tests executed per unit of time (per day, week, month, etc.). It also shows the difference between the planned and actual time.

1.3 Product Quality and Test Efforts

1.3.1 Testing Metrics

Testing metrics are required to measure and estimate process and product quality and to improve the efficiency of the overall testing process. Two major categories are defect metrics and productivity metrics.

Example of a productivity metric: the mileage of a vehicle compared with the ideal mileage recommended by the manufacturer. Figure 1.1 shows the test velocity and loop count, as well as the thread properties.

Figure 1.1 Test velocity, loop count.

Example of defect metrics: the number of defects found, accepted, rejected and deferred in a credit-card payment process.
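These defect metrics are tallies over a defect log. This is a minimal sketch. The log format (defect ID, status) and the `PAY-…` entries are hypothetical and serve only to show the counting.

```python
from collections import Counter

# Hypothetical defect log for a credit-card payment flow: (defect id, status).
defect_log = [
    ("PAY-1", "accepted"),
    ("PAY-2", "rejected"),
    ("PAY-3", "accepted"),
    ("PAY-4", "deferred"),
    ("PAY-5", "accepted"),
]


def defect_metrics(log):
    """Count defects found in total and per status (accepted, rejected, deferred)."""
    by_status = Counter(status for _, status in log)
    return {
        "found": len(log),
        "accepted": by_status["accepted"],
        "rejected": by_status["rejected"],
        "deferred": by_status["deferred"],
    }


print(defect_metrics(defect_log))
# {'found': 5, 'accepted': 3, 'rejected': 1, 'deferred': 1}
```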

A few automated testing tools: Selenium, JMeter, Appium, JUnit

This chapter demonstrates a few testing aspects using JMeter, which helps test and analyze overall performance under different types of load.

Description of Figure 1.1:

Name:

Provide a custom name or, if you prefer, simply leave it named the default “Thread Group”.

Thread count:

The number of threads is the number of simulated users under test.

Ramp-up Period:

How quickly (in seconds) users are added. If set to zero, all users start immediately. If set to 10, users are started one at a time at evenly spaced intervals, so that all of them have started by the end of the 10 seconds. In this example, the ramp-up period is set to 5 seconds.

Loop Count:

It indicates the number of times the test should repeat.
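The Thread Group settings above determine when each simulated user starts and how many requests are sent. The JMeter user manual describes the ramp-up rule: each thread starts ramp-up ÷ thread-count seconds after the previous one. The sketch below is a back-of-the-envelope calculator for that rule, not JMeter itself. The function names are hypothetical, and it assumes each thread runs every sampler once per loop with no extra controllers or timers.

```python
def thread_start_times(threads: int, ramp_up_s: float) -> list:
    """Start offset (seconds) of each thread, spaced ramp_up / threads apart."""
    if threads <= 0:
        raise ValueError("threads must be positive")
    interval = ramp_up_s / threads
    return [i * interval for i in range(threads)]


def total_samples(threads: int, loop_count: int, samplers: int = 1) -> int:
    """Requests sent when every thread runs every sampler loop_count times."""
    return threads * loop_count * samplers


# Illustrative values: 5 users, a 5-second ramp-up and a loop count of 2.
print(thread_start_times(5, 5))   # [0.0, 1.0, 2.0, 3.0, 4.0]
print(total_samples(5, 2))        # 10
```

A ramp-up of zero gives every thread an offset of 0.0, which matches the "all users begin immediately" case.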

Figure 1.2 shows a thread delay of 300 milliseconds.

Figure 1.2 Thread delay.

In Figure 1.3, successful test results are shown with green icons. Figure 1.3 also shows the load time, connect time, errors, request data, response data, etc. Figure 1.4 shows the test results in a table, and Figure 1.5 shows the test report summary.

Figure 1.3 Test results.

Figure 1.4 Test results in table.

A CSV file is used as the source of test data, and the resulting dynamic values can be seen in the View Results Tree (Figure 1.6). In our example, the requests no longer use only Boston and London, but also Philadelphia and Berlin, Portland and Rome, etc.
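CSV-driven testing substitutes one row of variables into the request on each iteration. The idea is similar to JMeter's CSV Data Set Config element with its recycle-on-end-of-file option enabled. The sketch below assumes that recycling behaviour. The column names `fromPort`/`toPort`, the `/reserve` request template and the function names are hypothetical; only the city pairs come from the text.

```python
import csv
import io
from itertools import cycle

# Hypothetical test data standing in for the CSV file referenced in the text.
CSV_DATA = """fromPort,toPort
Boston,London
Philadelphia,Berlin
Portland,Rome
"""


def parameter_rows(csv_text: str):
    """Return an iterator giving one row of variables per iteration, restarting at end of file."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    return cycle(rows)


def build_requests(csv_text: str, iterations: int) -> list:
    """Substitute each row's values into a (hypothetical) request template."""
    rows = parameter_rows(csv_text)
    template = "POST /reserve fromPort={fromPort}&toPort={toPort}"
    return [template.format(**next(rows)) for _ in range(iterations)]


for request in build_requests(CSV_DATA, 4):
    print(request)
```

With four iterations and three data rows, the fourth request reuses the first row (Boston to London), which is what recycling at end of file means.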

Figure 1.5 Test report summary.

Figure 1.6 View results tree.

1.3.2 How to Improve the Business Value of Products Using Test Automation

With advances in automation testing, ensuring software quality means the developed software must meet its functional requirements specification. It must also meet its non-functional requirements specification, which covers operational efficiency, reliability, security, maintainability, availability, code efficiency, etc. Meeting both increases business value.

Automation testing makes it possible to manage the performance of test activities and thereby ensure conformance to test requirements. Today, automation testing has become a crucial part of quality assurance [7].

The business value that testers deliver through their test efforts can be evaluated by measuring the following attributes:

Percentage of improvement in product quality

Cost saving due to testing process

Success rate in defect prevention

1.3.3 Data Analysis and Management in Test Automation

Even though software development has undergone several advancements in the last few years, the importance of evaluating test execution results is unchanged, especially in the case of automated tests.
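Evaluating automated test results often means aggregating a results file into a summary like the one in Figure 1.5. The sketch below works on a made-up excerpt in the style of JMeter's CSV results output, keeping only the `timeStamp`, `elapsed`, `label` and `success` columns. It computes per-label sample count, average response time and error percentage. The data values and the function name `summarize` are hypothetical.

```python
import csv
import io
from collections import defaultdict

# Made-up results in the style of a JMeter CSV results file (only a few columns kept).
RESULTS = """timeStamp,elapsed,label,success
1680000000000,120,Home,true
1680000000500,180,Home,true
1680000001000,300,Reserve,true
1680000001500,500,Reserve,false
"""


def summarize(results_csv: str) -> dict:
    """Per-label sample count, average elapsed time (ms) and error percentage."""
    stats = defaultdict(lambda: {"samples": 0, "total_ms": 0, "errors": 0})
    for row in csv.DictReader(io.StringIO(results_csv)):
        entry = stats[row["label"]]
        entry["samples"] += 1
        entry["total_ms"] += int(row["elapsed"])
        entry["errors"] += row["success"].strip().lower() != "true"  # bool adds as 0 or 1
    return {
        label: {
            "samples": s["samples"],
            "avg_ms": s["total_ms"] / s["samples"],
            "error_pct": 100 * s["errors"] / s["samples"],
        }
        for label, s in stats.items()
    }


for label, s in summarize(RESULTS).items():
    print(label, s)
```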

1.3.4 Data Models in Data Science

The success of any application depends on the data set or database that provides the insight needed for analysis and decision making. Data modeling is essential to make a database useful. Data models play an important role in representing any real-world scenario, because they describe data elements and their relationships to each other. As part of data modeling, data is pre-processed, for example cleaned and organized into the required structure [8].

Basic types of data models are:

Conceptual data model: An abstract model. Decisions regarding entities, business plans, and the rules and regulations to implement, along with the basic framework, infrastructure or layout options, are part of this model.

Logical data model: This type of data model emphasizes relational factors such as properties or data attributes. It is mainly useful in data warehousing plans.

Physical data model: This model focuses on data points and their relationships to make a final actionable blueprint.
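To make the three levels concrete, take a hypothetical payments example. At the conceptual level, customers make payments. At the logical level, there are Customer and Payment entities with attributes and a one-to-many relationship. The physical level fixes tables, column types, keys and constraints. The sketch below expresses only the physical level, using SQLite from Python's standard library. All table, column and customer names are illustrative assumptions.

```python
import sqlite3

# Physical model: concrete tables, column types, keys and constraints.
SCHEMA = """
CREATE TABLE customer (
    customer_id INTEGER PRIMARY KEY,
    name        TEXT NOT NULL
);
CREATE TABLE payment (
    payment_id  INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customer(customer_id),
    amount      REAL NOT NULL CHECK (amount > 0),
    paid_on     TEXT NOT NULL  -- ISO-8601 date string
);
"""


def build_database() -> sqlite3.Connection:
    """Create an in-memory database from the physical model."""
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when enabled
    conn.executescript(SCHEMA)
    return conn


conn = build_database()
conn.execute("INSERT INTO customer VALUES (1, 'Asha')")
conn.execute("INSERT INTO payment VALUES (10, 1, 2500.0, '2023-03-06')")
rows = conn.execute(
    "SELECT name, amount FROM payment JOIN customer USING (customer_id)"
).fetchall()
print(rows)  # [('Asha', 2500.0)]
```

The `REFERENCES` clause is where the logical one-to-many relationship becomes an enforceable rule.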

1.4 Data Masking in Data Model and Associated Risks

When handling sensitive data such as financial records, the risk of data breaches has to be kept to a minimum by addressing possible sources of risk. Each organization should have a comprehensive strategy for reducing data breach risks and mitigating their effects in order to protect its brand and reputation. Conventionally, data is protected with encryption [9].

With data masking, the characters of sensitive data are replaced with similar but meaningless characters. Masking is paired with a de-masking process, during which the application maps the changed values back to the original ones. It is typically implemented as a cloud-based application. This type of masking can be applied to PAN numbers, Aadhaar numbers, etc. Applications must be designed to work with the specific data formats of the masked data.
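The chapter does not specify how its masking tool works. One common way to get the mask/de-mask behaviour described above is format-preserving random substitution backed by a lookup table, often called tokenization. This is a minimal sketch of that approach. The class name `MaskingVault` and the sample values are hypothetical. A real system would keep the lookup table in a secured store rather than in memory.

```python
import secrets
import string


class MaskingVault:
    """Format-preserving masking with a lookup table for de-masking.

    Digits become random digits and letters become random letters of the same
    case, so a PAN-style value such as "ABCDE1234F" keeps its layout.
    """

    def __init__(self) -> None:
        self._originals = {}  # masked value -> original value

    def _random_like(self, value: str) -> str:
        out = []
        for ch in value:
            if ch.isdigit():
                out.append(secrets.choice(string.digits))
            elif ch.isupper():
                out.append(secrets.choice(string.ascii_uppercase))
            elif ch.islower():
                out.append(secrets.choice(string.ascii_lowercase))
            else:
                out.append(ch)  # keep separators such as spaces or hyphens
        return "".join(out)

    def mask(self, value: str) -> str:
        for _ in range(100):  # retry on collision so de-masking stays unambiguous
            masked = self._random_like(value)
            if masked not in self._originals:
                self._originals[masked] = value
                return masked
        raise RuntimeError("could not find an unused masked value")

    def demask(self, masked: str) -> str:
        return self._originals[masked]


vault = MaskingVault()
pan = "ABCDE1234F"          # made-up value in PAN format
masked = vault.mask(pan)    # random, e.g. five letters, four digits, one letter
print(masked, "->", vault.demask(masked))
```

Because the output is random, no fixed masked value is shown here. Only the format and the round trip back to the original value are guaranteed.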

1.5 Prediction in Data Science

Data analytics can be broadly classified into three types: descriptive, predictive and prescriptive analytics [12].

Descriptive Analytics – This model helps in hypothesis generation, variable transformation and any root cause analysis of specific behavioral patterns.

Predictive Analytics – This type of model is used to predict the future behavior of the dependent variable. Predictive analytics answers the question of what is likely to happen.

Prescriptive Analytics – This type of analytics recommends actions to take. It is needed, for example, to avoid the catastrophic consequences of a pandemic such as Covid-19.

Case Study

Aim: To predict stainless steel prices (variable StainlessSteelPrice)

Time Period: 3 months and 6 months

In this case study, the aim was to predict the price of stainless steel for the next three months using machine learning algorithms and compare different models on the basis of accuracy.

Solution Approach

The first step is exploratory data analysis (EDA), which draws insights from the historical data and is considered descriptive analytics. It includes checking for consistent data, inconsistent data (outliers) and missing values; computing measures of central tendency (mean, median and mode) and measures of dispersion (variance, standard deviation and range); and graphical representations such as the box plot, which reveals outliers and shows whether the data is symmetrical, how tightly it is grouped and whether it is skewed. Feature selection from the attributes of the data set is then carried out with the help of a correlation matrix and univariate selection, followed by feature engineering, which includes handling missing values and outliers. Finally, the series is modeled with a statistical method, ARIMA [11]. Figure 1.7 shows the data set used for the analysis, and Figure 1.8 plots the analyzed data.
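The EDA and feature-engineering steps above can be sketched with pandas, the library whose `df.describe()` call appears in this case study. The monthly values and the `NickelPrice` column (used only to illustrate a correlation matrix) are hypothetical. Outliers are flagged with the standard 1.5 x IQR box-plot rule; time-based interpolation for the missing value and clipping to the whiskers for the outlier are one possible choice, not necessarily the authors'.

```python
import numpy as np
import pandas as pd

# Hypothetical monthly data; 'MS' is pandas' month-start frequency alias.
idx = pd.date_range("2013-07-01", periods=12, freq="MS")
df = pd.DataFrame(
    {
        "StainlessSteelPrice": [0.80, 0.82, 0.79, 0.81, np.nan, 0.83,
                                0.84, 1.40, 0.82, 0.80, 0.78, 0.79],
        "NickelPrice": [14.0, 14.3, 13.8, 14.1, 14.2, 14.5,
                        14.6, 14.4, 14.3, 14.0, 13.7, 13.9],
    },
    index=idx,
)
s = df["StainlessSteelPrice"]

# Missing values, central tendency, dispersion and skewness
missing = int(s.isna().sum())
summary = {
    "mean": s.mean(), "median": s.median(), "mode": s.mode().tolist(),
    "variance": s.var(), "std": s.std(), "range": s.max() - s.min(),
    "skew": s.skew(),
}

# Box-plot rule: points beyond 1.5 x IQR from the quartiles are outliers
q1, q3 = s.quantile([0.25, 0.75])
iqr = q3 - q1
lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr
outliers = s[(s < lower) | (s > upper)]

# Correlation matrix, used when selecting features
corr = df.corr()

# Feature engineering: fill the gap by time interpolation, clip outliers
clean = s.interpolate(method="time").clip(lower, upper)

print(df.describe())
print(summary)
print("missing:", missing, "outliers:", outliers.index.strftime("%Y-%m").tolist())
print(corr.round(2))
```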

Models Used for Visualization and Forecasting

Boxplot: A boxplot graphically depicts groups of numerical data through their quartiles.

Regression Model: The linear regression model is very useful in forecasting and time series modeling, and for estimating the relationship between a dependent variable and one or more explanatory variables.

ARIMA Model: An ARIMA model forecasts a time series from the series' own past values (and past forecast errors).
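The link between the regression model and ARIMA can be made concrete with ARIMA's autoregressive part alone: an AR(p) model is a linear regression of the series on its own p lagged values. The NumPy sketch below is an assumption (the chapter does not show its code); it fits AR(p) by ordinary least squares and omits the differencing (I) and moving-average (MA) terms, which a library such as statsmodels' ARIMA class estimates by maximum likelihood. The simulated series is hypothetical.

```python
import numpy as np


def fit_ar(series, p):
    """Fit AR(p) with an intercept by ordinary least squares.

    Each row of the design matrix is [1, y[t-1], ..., y[t-p]].
    Returns [intercept, phi_1, ..., phi_p].
    """
    y = np.asarray(series, dtype=float)
    X = np.vstack([np.r_[1.0, y[t - p:t][::-1]] for t in range(p, len(y))])
    coef, *_ = np.linalg.lstsq(X, y[p:], rcond=None)
    return coef


def forecast_ar(series, coef, steps):
    """Recursive multi-step forecast: each prediction is fed back as a lag."""
    history = list(np.asarray(series, dtype=float))
    p = len(coef) - 1
    preds = []
    for _ in range(steps):
        lags = history[-p:][::-1]  # y[t-1], ..., y[t-p]
        nxt = coef[0] + np.dot(coef[1:], lags)
        preds.append(nxt)
        history.append(nxt)
    return np.array(preds)


# Hypothetical series from y_t = 0.5 + 0.6*y_{t-1} - 0.2*y_{t-2} + noise
rng = np.random.default_rng(42)
y = [0.0, 0.0]
for _ in range(3000):
    y.append(0.5 + 0.6 * y[-1] - 0.2 * y[-2] + rng.normal())

coef = fit_ar(y, p=2)
preds = forecast_ar(y, coef, steps=3)
print(np.round(coef, 2))   # should be near the true values 0.5, 0.6, -0.2
print(np.round(preds, 3))
```

Feeding each prediction back as the next lag is how multi-step forecasts such as a 3- or 6-month horizon are produced. With real prices, the order p would be chosen from the ACF/PACF plots or from information criteria such as the AIC and BIC reported in Table 1.1.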

Figure 1.7 Data set for analysis.

df.index.freq = 'MS'  # monthly data, one row per month start
df.describe()         # summary statistics of the data set

Figure 1.8 Graph of the analyzed data.

Figure 1.9 Boxplot data.

Figure 1.9 shows the box plot of the data, from which the spread of the values and any outliers can easily be read.

Figure 1.10 Correlation data plot.

Figure 1.11 Lag plot.

Figure 1.12 Autocorrelation.

Figure 1.13 Partial autocorrelation.

Figure 1.10 shows the correlation plot of the data, Figure 1.11 the lag plot, Figure 1.12 the autocorrelation function and Figure 1.13 the partial autocorrelation function. Together they show how strongly the price depends on its own past values, which guides the choice of the autoregressive and moving-average orders of the model.
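As a sketch of what the autocorrelation and partial autocorrelation plots compute, the NumPy code below estimates the sample ACF and derives the PACF from it with the Durbin-Levinson recursion. It is an illustration under assumptions, not the chapter's plotting code; library plotting functions may use a different PACF estimator, so values can differ slightly. The AR(1) series is simulated and hypothetical.

```python
import numpy as np


def sample_acf(x, nlags):
    """Sample autocorrelations r_0..r_nlags (what an ACF plot shows)."""
    x = np.asarray(x, dtype=float)
    d = x - x.mean()
    denom = np.dot(d, d)
    return np.array([np.dot(d[: len(d) - k], d[k:]) / denom
                     for k in range(nlags + 1)])


def pacf_durbin_levinson(r, nlags):
    """Partial autocorrelations from ACF values (Durbin-Levinson recursion)."""
    pacf = np.zeros(nlags + 1)
    pacf[0] = 1.0
    phi_prev = np.zeros(0)  # coefficients of the AR(k-1) fit
    for k in range(1, nlags + 1):
        num = r[k] - np.dot(phi_prev, r[k - 1:0:-1])
        den = 1.0 - np.dot(phi_prev, r[1:k])
        phi_kk = num / den
        phi = np.empty(k)
        phi[: k - 1] = phi_prev - phi_kk * phi_prev[::-1]
        phi[k - 1] = phi_kk
        phi_prev = phi
        pacf[k] = phi_kk
    return pacf


# Hypothetical AR(1) series: the ACF decays, the PACF cuts off after lag 1
rng = np.random.default_rng(1)
x = np.zeros(5000)
for t in range(1, len(x)):
    x[t] = 0.7 * x[t - 1] + rng.normal()

acf_vals = sample_acf(x, nlags=10)
pacf_vals = pacf_durbin_levinson(acf_vals, nlags=10)
print(np.round(acf_vals[:4], 2), np.round(pacf_vals[:4], 2))
```

A PACF that cuts off after lag p suggests an AR(p) term, while an ACF that cuts off after lag q suggests an MA(q) term.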

Table 1.1 ARMA model results.

| Statistic | Value | Statistic | Value |
|---|---|---|---|
| Dep. Variable | StainlessSteelPrice | No. Observations | 78 |
| Model | ARMA(5, 4) | Log Likelihood | 131.623 |
| Method | css-mle | S.D. of innovations | 0.043 |
| Date | Thu, 03 Jun 2021 | AIC | -241.246 |
| Time | 11:50:56 | BIC | -215.322 |
| Sample | 07-01-2013 to 12-01-2019 | HQIC | -230.868 |

| Term | coef | std err | z | P>\|z\| | [0.025 | 0.975] |
|---|---|---|---|---|---|---|
| Const | 0.8018 | 0.022 | 36.169 | 0.000 | 0.758 | 0.845 |
| ar.L1.StainlessSteelPrice | 0.5693 | nan | nan | nan | nan | nan |
| ar.L2.StainlessSteelPrice | 1.0398 | 0.039 | 26.499 | 0.000 | 0.963 | 1.117 |
| ar.L3.StainlessSteelPrice | 0.1129 | nan | nan | nan | nan | nan |
| ar.L4.StainlessSteelPrice | -1.0213 | nan | nan | nan | nan | nan |
| ar.L5.StainlessSteelPrice | 0.2672 | 0.005 | 56.007 | 0.000 | 0.258 | 0.277 |
| ma.L1.StainlessSteelPrice | 0.7260 | 0.112 | 6.478 | 0.000 | 0.506 | 0.946 |
| ma.L2.StainlessSteelPrice | -0.5262 | 0.083 | -6.322 | 0.000 | -0.689 | -0.363 |
| ma.L3.StainlessSteelPrice | -1.0750 | 0.072 | -15.018 | 0.000 | -1.215 | -0.935 |
| ma.L4.StainlessSteelPrice | -0.1248 | 0.110 | -1.137 | 0.256 | -0.340 | 0.090 |

Figure 1.14 ARIMA model - train-test-prediction.

Figure 1.15 ARIMA Model - Test & prediction.

Figure 1.14 shows the ARIMA model's training data, test data and predictions, and Figure 1.15 shows the test data alongside the predictions.
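For a time series, a train/test split like the one behind the train, test and prediction plots must be chronological: the model learns from earlier months and is judged on later ones, so the data is never shuffled. A plain-Python sketch with hypothetical prices, using a naive last-value forecast as a baseline that a useful ARIMA model should beat; the 3-month holdout mirrors the case study's shorter horizon.

```python
def chronological_split(series, test_size):
    """Hold out the last `test_size` observations as the test set.

    Order is preserved: training data always precedes test data in time.
    """
    if not 0 < test_size < len(series):
        raise ValueError("test_size must satisfy 0 < test_size < len(series)")
    return series[:-test_size], series[-test_size:]


def naive_forecast(train, steps):
    """Baseline: repeat the last observed value for every future step."""
    return [train[-1]] * steps


# Hypothetical monthly prices (not the chapter's data)
prices = [0.78, 0.80, 0.81, 0.79, 0.82, 0.84, 0.83, 0.85, 0.86, 0.84, 0.87, 0.88]

train, test = chronological_split(prices, test_size=3)  # 3-month horizon
baseline = naive_forecast(train, steps=len(test))
print("train ends with", train[-1], "| test:", test, "| baseline:", baseline)
```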

1.6 Role of Metrics in Evaluation

Evaluation metrics are used to measure the quality of a model, whether it is a statistical or a machine learning model. Metrics are also required to measure environmental and sustainability impacts, and their scope should be wide enough to cover a variety of data. As a result, metrics should be developed and used continuously.
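The chapter does not name the metrics it used to compare the models' accuracy. Common choices for forecasts are the mean absolute error (MAE), root mean squared error (RMSE) and mean absolute percentage error (MAPE); the plain-Python sketch below computes them on small hypothetical values.

```python
import math


def _check(actual, predicted):
    if not actual or len(actual) != len(predicted):
        raise ValueError("actual and predicted must be non-empty and equally long")


def mae(actual, predicted):
    """Mean absolute error, in the units of the series."""
    _check(actual, predicted)
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def rmse(actual, predicted):
    """Root mean squared error; penalizes large errors more than MAE."""
    _check(actual, predicted)
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


def mape(actual, predicted):
    """Mean absolute percentage error; undefined if any actual value is 0."""
    _check(actual, predicted)
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when an actual value is zero")
    return 100 * sum(abs((a - p) / a) for a, p in zip(actual, predicted)) / len(actual)


actual = [2.0, 4.0, 5.0]      # hypothetical test values
predicted = [2.5, 3.0, 5.0]   # hypothetical predictions
print(mae(actual, predicted), rmse(actual, predicted), mape(actual, predicted))
# MAE = 0.5, RMSE is about 0.645, MAPE is about 16.67 %
```

MAE and RMSE are in the units of the price, which makes them easy to interpret, while MAPE is scale-free and so allows comparison across series with different price levels.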

1.7 Quantity of Data in Quality Assurance

A threshold value should be set for the quality score, and scores should be benchmarked based on their relevance. This is crucial for business success, because high-quality data improves confidence in decision making. Data quantity also plays an important role in data quality: as the data size increases, decision making becomes more challenging, but a large set of available data is more likely to lead to the right decision.
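One way to make a quality threshold concrete is to compute a score from a few measurable dimensions and compare it with a benchmark. The sketch below assumes three dimensions (completeness, validity and uniqueness), equal weights and a threshold of 0.95; all of these, and the sample records, are hypothetical choices rather than the chapter's method.

```python
from dataclasses import dataclass


@dataclass
class QualityReport:
    completeness: float  # share of records with the field present
    validity: float      # share of present values inside the valid range
    uniqueness: float    # share of records with a distinct key

    @property
    def score(self):
        # Equal weighting is an assumption; weight by business relevance in practice
        return (self.completeness + self.validity + self.uniqueness) / 3


def assess(records, key, field, valid_range):
    if not records:
        raise ValueError("no records to assess")
    n = len(records)
    present = [r for r in records if r.get(field) is not None]
    low, high = valid_range
    valid = [r for r in present if low <= r[field] <= high]
    return QualityReport(
        completeness=len(present) / n,
        validity=len(valid) / len(present) if present else 0.0,
        uniqueness=len({r[key] for r in records}) / n,
    )


THRESHOLD = 0.95  # hypothetical benchmark

records = [
    {"month": "2019-09", "price": 0.81},
    {"month": "2019-10", "price": None},   # missing value
    {"month": "2019-11", "price": 0.83},
    {"month": "2019-11", "price": 0.83},   # duplicate key
    {"month": "2019-12", "price": -1.0},   # outside the valid range
]
report = assess(records, key="month", field="price", valid_range=(0.0, 10.0))
passed = report.score >= THRESHOLD
print(f"score={report.score:.3f} passed={passed}")  # score=0.783 passed=False
```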

1.8 Identifying the Right Data Sources

Everyone knows that the quantity and quality of data are important for making the right decisions, but the content of the data also plays a crucial role. The question “Are we collecting the right data?” is therefore very relevant. Each data set provides a unique point of view on business operations. There is a risk of duplicate data, data with gaps and biased data, so one has to check for all of these when gathering data from multiple sources. By aggregating data from different sources, one can form a holistic view of business operations. Conflicting data indicates that the data was gathered from multiple sources, and it can be examined from multiple perspectives.
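The duplicate, gap and conflict checks described above can be sketched in plain Python for monthly records combined from several sources. The source names, the 0.01 conflict tolerance and the records are hypothetical. Conflicting values are reported rather than averaged, so each one can still be examined from the perspective of its own source.

```python
from collections import Counter
from datetime import date


def month_starts(first, last):
    """Every month-start date from `first` to `last`, inclusive."""
    months, y, m = [], first.year, first.month
    while (y, m) <= (last.year, last.month):
        months.append(date(y, m, 1))
        y, m = (y + 1, 1) if m == 12 else (y, m + 1)
    return months


def combine_sources(sources, tolerance=0.01):
    """Combine (month, value) records from several named sources.

    Returns a month -> {source: value} view plus the duplicates, gaps and
    conflicts found along the way.
    """
    duplicates, by_month = [], {}
    for name, records in sources.items():
        counts = Counter(month for month, _ in records)
        duplicates += [(name, month) for month, n in counts.items() if n > 1]
        for month, value in records:
            by_month.setdefault(month, {})[name] = value  # a repeated month keeps its last value
    if not by_month:
        return {}, duplicates, [], {}
    months = sorted(by_month)
    gaps = [m for m in month_starts(months[0], months[-1]) if m not in by_month]
    conflicts = {m: v for m, v in by_month.items()
                 if max(v.values()) - min(v.values()) > tolerance}
    return by_month, duplicates, gaps, conflicts


sources = {  # hypothetical sources and values
    "erp": [(date(2019, 9, 1), 0.81), (date(2019, 10, 1), 0.82), (date(2019, 10, 1), 0.82)],
    "market_feed": [(date(2019, 9, 1), 0.81), (date(2019, 10, 1), 0.85), (date(2019, 12, 1), 0.84)],
}
combined, duplicates, gaps, conflicts = combine_sources(sources)
print("duplicates:", duplicates)
print("gaps:", gaps)
print("conflicts:", conflicts)
```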

1.8.1 Need to Gather Up-to-Date Data

Every piece of data has a time stamp showing when it was created, and data ages from that moment on. It is important to understand when the data was collected and how current it is. To ensure its relevance, it is essential to trace the origin of the data, and it is recommended to collect data from the source system where it is created. Digital business processes require real-time data to be effective; such data can be analyzed to provide real-time visibility into business forecasting and operations.
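A sketch of a freshness check in plain Python, assuming each record keeps the time stamp at which its source system created it and a hypothetical maximum age of 30 days. The records are hypothetical, and a fixed "now" keeps the example reproducible.

```python
from datetime import datetime, timedelta, timezone


def stale_records(records, max_age, now=None):
    """Return the records older than `max_age`, oldest first."""
    if now is None:
        now = datetime.now(timezone.utc)
    old = [r for r in records if now - r["created_at"] > max_age]
    return sorted(old, key=lambda r: r["created_at"])


# Hypothetical records; "created_at" is the time stamp set by the source system
now = datetime(2021, 6, 3, 12, 0, tzinfo=timezone.utc)
records = [
    {"id": 1, "source": "erp", "created_at": now - timedelta(hours=2)},
    {"id": 2, "source": "crm", "created_at": now - timedelta(days=40)},
    {"id": 3, "source": "web_form", "created_at": now - timedelta(days=45)},
    {"id": 4, "source": "web_form", "created_at": now - timedelta(days=3)},
]
stale = stale_records(records, max_age=timedelta(days=30), now=now)
for r in stale:
    print(r["id"], r["source"], f"{(now - r['created_at']).days} days old")
```

Keeping the source name next to the time stamp makes it possible to trace stale data back to the system that produced it and refresh it there.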

1.8.2 Synthesising Existing Advanced Technologies for Continuous Business Improvements

The definition of the term “software quality” has evolved over many years, and DevOps and cloud computing technologies play important roles in this evolution. However, adopting DevOps requires knowledge of automation testing to increase the effectiveness and coverage of software testing. The availability of high-speed internet has made storing and maintaining digital data easy. Through social networking, data-generating apps produce large amounts of data, estimated in terabytes daily, and techniques and standards are needed to extract meaningful results from such large data sets. High-speed computing hardware and distributed and parallel computing can also play crucial roles in assuring data quality for continuous business improvement.

1.9 Conclusion

This chapter highlights the importance of data masking in reducing the risks associated with sensitive data. It also covers data analytics methods such as predictive and prescriptive approaches and demonstrates how the quality of the data influences the desired outcome. We are convinced that the insights provided here will be of crucial value to any organization that depends on quality assurance in data science as the basis of its business success, and that they can be adopted into business strategy to leverage the organization's potential to the maximum.

References

1. S. Farooqui and W. Mahmood, A survey of Pakistan’s SQA Practices: a Comparative Study, in 29th International Business Information Management Association Conference, Vienna, Austria, 2017.

2. Rakesh Kumar, Birth Subhash, Maria Fatima, Waqas Mahmood, Quality Assurance for Analytics, International Journal of Advanced Computer Science and Applications, Vol. 9, No. 8, 2018, pp. 160-166. [Online].

3. F. Lambert, Tesla Autopilot confuses markings toward barrier in recreation of fatal Model X crash at exact same location, 3 April 2018. [Online]. Available: https://electrek.co/2018/04/03/tesla-autopilot-crash-barrier-markings-fatal-model-x-accident/ [Accessed 4 April 2018].

4. SeattleDataGuy, Data Quality Is Not as Sexy as Data Science, 15 September 2017. [Online]. Available: https://medium.com/@SeattleDataGuy/good-data-quality-is-key-for-great-data-science-and-analytics-ccfa18d0fff8 [Accessed 4 April 2018].

5. F. J. Buckley and R. Poston, Software Quality Assurance, IEEE Transactions on Software Engineering, pp. 36-41, 1984.

6. Michel Dumontier and Tobias Kuhn, Data Science – Methods, infrastructure, and applications, Data Science 1 (2017), 1–5. DOI: 10.3233/DS-170013. IOS Press.

7. Y. Chen, A.J. Elenee and G. Weber, IBM Watson: How cognitive computing can be applied to big data challenges in life sciences research, Clin Ther 38 (2016), 688–701. DOI: 10.1016/j.clinthera.2015.12.001.

8. D. Demner-Fushman and N. Elhadad, Aspiring to unintended consequences of natural language processing: A review of recent developments in clinical and consumer-generated text processing, Yearb Med Inform 10 (2016), 224–233. DOI: 10.15265/IY-2016-017.

9. [Online]. Available: https://acuvate.com/blog/challenges-faced-by-data-scientists/ [Accessed 12/11/2021].

10. Robert Hoehndorf and Núria Queralt-Rosinach, Data Science and symbolic AI: Synergies, challenges and opportunities, Data Science 1 (2017), 27–38. DOI: 10.3233/DS-170004. IOS Press.

11. Kumar Manoj, Anand Madhu, An application of time series ARIMA forecasting model for predicting sugarcane production in India, Studies in Business and Economics, Vol. 9, Issue 1, April 2014, pp. 81-94.

12. Vaibhav Kumar, Predictive Analytics: A Review of Trends and Techniques, International Journal of Computer Applications, 182(1), pp. 31-37, July 2018. DOI: 10.5120/ijca201891743.


2 Design and Implementation of Social Media Mining – Knowledge Discovery Methods for Effective Digital Marketing Strategies

Prashant Bhat and Pradnya Malaganve*

Department of Computer Science, School of Computational Sciences and IT, Garden City University, Bengaluru, India

Abstract

Nowadays, Data Science and Data Engineering have become an essential part of any business, given the enormous amount of data being generated and stored. Most industries are implementing Data Science techniques to increase revenue and customer satisfaction, which also influences Digital Marketing and online business. With this in mind, we have implemented a strategic methodology and conducted a number of experiments with it. Data Mining tools, algorithms and methods are closely related to Data Science and Data Engineering; hence, we have used machine learning algorithms in our experiments. A Facebook social media data set is used in all the experiments. The data contains advertisement details and user interactions for every post uploaded about cosmetic products. In the present work, we propose a novel framework for knowledge discovery from Facebook advertisement data using Data Mining techniques and methodologies. One experiment compares different Classification algorithms: we used different Decision Tree classifiers and Naive Bayes classifiers to check Classification accuracy and other parameters, and found that Decision Tree classifiers give greater accuracy when classifying Facebook data. Another experiment uses the Clustering Data Mining technique: we applied Expectation Maximization Clustering and, based on the results, suggest a good Marketing Strategy for Facebook post publishers. These experiments were conducted on the Facebook data to produce good strategies for Digital Marketing, and the findings show which kind of content published on Facebook, whether a video, photo, link or status, gets more views and customer interactions.

Keywords: Classification, cluster, marketing strategy, Facebook, data mining, digital marketing

2.1 Introduction

As the entire world suffers from the Covid-19 pandemic at present, people fear interacting with each other face to face. To save lives, governments have taken essential steps such as lockdowns, social distancing, sanitizing, boosting immunity and vaccination, along with rules such as requiring people to stay at home until the Covid-19 chain breaks and we can breathe peacefully again. In this situation, social media has played an enormous and very helpful role in spreading awareness and, most importantly, in connecting people in real time [9]. In today's digital world, one cannot imagine life without social media and social channels. Although people cannot meet each other directly, they use social media such as Facebook, Twitter, Instagram and WhatsApp very widely and often, and as a result these companies are making enormous profits. Apart from these, applications such as WebEx, Google Meet, Microsoft Teams and TeamViewer are being used by educational institutions, including schools, colleges and universities, for academic purposes. Even to purchase food and other goods, people use e-commerce applications such as Amazon, Flipkart, Zomato and BigBasket. All of this shows that the world is ruled by digital media, and someday human beings may become entirely addicted to it [4].

Because people throughout the world use digital media for their everyday needs, several terabytes of data are stored in the cloud every day, much of it noisy or useless. Data science techniques and Data Mining algorithms are very useful for cleaning this data and extracting knowledge from it.

In our work, we conducted several experiments on a cosmetic company’s Facebook data set. The company’s Facebook page was created to advertise its cosmetic products and to share information such as the special offers available on each product, product descriptions and a few more details about the products. The content uploaded to describe the products is in multimedia form. Some of our experiments show which kind of content uploaded to Facebook may lead to more customer interaction; to achieve this, we used clustering techniques. Here, interaction does not mean just replies or comments: it includes every click on the post and page, likes, comments, shares and all other activity on the post or page of the company’s Facebook data. As most research in this area uses Data Mining Classification techniques, we also used Classification techniques in our experiments to achieve good Classification accuracy. We applied two different algorithms to the Facebook data set and concluded which algorithm is best suited to classifying it, with a good Classification accuracy rate [24].
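The abstract names Expectation Maximization (EM) clustering as the clustering method. As an illustration only, the NumPy sketch below runs EM for a one-dimensional Gaussian mixture on a hypothetical feature, the logarithm of total interactions per post; the authors' actual tool, features and number of clusters are not given in this section.

```python
import numpy as np


def em_gaussian_mixture_1d(x, k=2, max_iter=200, tol=1e-8):
    """Cluster 1-D data with a k-component Gaussian mixture fitted by EM.

    Returns mixture weights, means, variances and a hard label per point
    (the component with the highest responsibility).
    """
    x = np.asarray(x, dtype=float)
    # Deterministic initialization: spread the initial means over quantiles
    means = np.quantile(x, (np.arange(k) + 0.5) / k)
    variances = np.full(k, x.var())
    weights = np.full(k, 1.0 / k)
    prev_ll = -np.inf
    for _ in range(max_iter):
        # E-step: weighted Gaussian density of every point under every component
        dens = (weights * np.exp(-((x[:, None] - means) ** 2) / (2 * variances))
                / np.sqrt(2 * np.pi * variances))
        total = dens.sum(axis=1, keepdims=True)
        resp = dens / total  # responsibilities; each row sums to 1
        # M-step: re-estimate the parameters from the responsibilities
        nk = resp.sum(axis=0)
        weights = nk / len(x)
        means = (resp * x[:, None]).sum(axis=0) / nk
        variances = (resp * (x[:, None] - means) ** 2).sum(axis=0) / nk + 1e-9
        # Stop when the log-likelihood from the E-step stops improving
        ll = np.log(total).sum()
        if ll - prev_ll < tol:
            break
        prev_ll = ll
    return weights, means, variances, resp.argmax(axis=1)


# Hypothetical feature: log of total interactions for 160 posts in two groups
rng = np.random.default_rng(7)
log_interactions = np.concatenate([rng.normal(3.0, 0.3, 100), rng.normal(6.0, 0.4, 60)])
weights, means, variances, labels = em_gaussian_mixture_1d(log_interactions, k=2)
print(np.round(weights, 2), np.round(means, 2))
```

Unlike k-means, EM assigns each post a probability of belonging to every cluster, so posts that sit between a low-interaction and a high-interaction group can be identified rather than forced into one.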

The global spread of social media was driven by the exponential growth in Internet users, creating an entirely new environment for consumers to share ideas, comments and advice about products and services. According to Statista, the number of social network users was expected to grow from 2.44 billion to 3.5 billion between 2018 and 2021, an increase of around 43% in three years. Given this rapid expansion [12], social media has become the most significant media network for marketers to reach their customers. Companies quickly understood the potential of Internet-based social media to influence customers and incorporated social media interaction into their strategies for growing their businesses. Understanding the influence of advertisements is a vital part of any global social media strategy [10]. A number of experiments have focused on discovering the associations between digital publications on social networks and the influence of those publications, measured by customers’ interactions. Other experiments have been dedicated to building predictive systems that can efficiently predict how a post will perform before it is published.

A system that can predict the effect of individual posts can deliver a valuable benefit when a company decides to use social media to promote its products and services. Promotion managers and advertisement designers could then make informed decisions about the reach of published posts and, based on the estimates, adjust their approach to improve the posts’ impact. Social media publications are also closely associated with brand building. Data Mining offers an attractive way to extract predictive knowledge from a mixture of data. In our work, we studied applications of social media and Data Mining, specifically for assessing market trends from user involvement; most of this work has concentrated on users’ ideas and interactions on social networks, with an emphasis on collecting data from various network groups or from personal posts. Our work also focuses on predicting the effect of publishing individual posts on a company’s Facebook page [11]. The effect is measured using various existing methods related to consumer views and interactions. The results help the website administrator or the content manager decide which kind of post should be published to achieve the maximum user interaction. To validate the adopted method, we used data from a well-known cosmetics brand, consisting of 500 posts uploaded by the company to its Facebook brand page [6]. This data set of posts was used as the input to the Data Mining technique [12].

2.1.1 Objectives of the Study

Implementing a novel framework using Data Mining techniques and algorithms in order to discover knowledge

Carrying out a comparative analysis of well-known Data Mining classifiers to choose the most suitable classifier for Facebook data

Implementing a cluster model to predict the impact of uploaded content or posts from their characteristics

Describing the discovered knowledge, presenting the influence of input characteristics on the impact of the published posts

Section 2.3 describes the resources used and the algorithms and techniques chosen for the experiments. It explains the methodological background of the Data Mining technique, including predictive modeling and knowledge discovery.

2.2 Literature Review

The work of Mohammed and Ahmed Burhan [14] compares three data mining classification algorithms, namely Decision Tree, Naïve Bayes and Support Vector Machine, applied to a social media data set from NYC, to find the classification algorithm that best classifies the variables according to the stages. Their results [14] show that Support Vector Machine performs best of the three techniques.

Twitter is a popular social media platform that carries a huge number of statements about happiness, dejection, communal information and so on. When people feel angry, they often express it in a tweet, so some tweets may contain Indonesian swear words. This can be a serious issue because Indonesian people are expected not to use swear words. Twitter provides tweet data by account, new topics and advanced keyword search. W. B. Zulfikar, M. Irfan, C. N. Alam and M. Indra [15] analysed tweets related to political data, political events and well-known Indonesian figures, because such tweets were presumed to carry Indonesian swear words. The tweets were processed with text mining and analysed using Naive Bayes, Nearest Neighbor and Decision Tree classifiers to detect Indonesian swear words. Their experiment [15] identified the most accurate classification method, that is, the method that best distinguishes the actual meaning of Indonesian swear words in the tweets.

Highly scalable computing environments make it possible to carry out various data-intensive natural language processing and Data Mining tasks. Text classification is one such task, and its issues have been examined by many data scientists. Tomas Pranckevičius and Virginijus Marcinkevičius [16] examined Naïve Bayes, Random Forest, Decision Tree, Support Vector Machine and Logistic Regression classifiers built with Apache Spark. They [16] analysed short texts from Amazon product review data, comparing the classifiers' classification accuracy against the size of the training data and the number of n-grams.

Every day, millions of videos are uploaded to YouTube by people around the world, so enormous amounts of data are gathered in the cloud. Riyan Amand and Edi Surya Negara [17] propose Data Mining Classification as a way of extracting information from this data. Users on YouTube often cannot find the exact category of a video from the title it is given. Hence, the authors [17] conducted an experiment to classify YouTube data associated with search text. The author’s [17