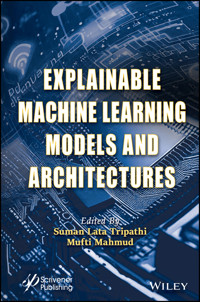

Explainable Machine Learning Models and Architectures E-Book

168,99 €

- Publisher: John Wiley & Sons

- Category: Science and new technologies

- Language: English

EXPLAINABLE MACHINE LEARNING MODELS AND ARCHITECTURES

This cutting-edge new volume covers the hardware architecture implementation, the software implementation approach, and the efficient hardware of machine learning applications. Machine learning and deep learning modules are now an integral part of many smart and automated systems where signal processing is performed at different levels. Signal processing in the form of text, images, or video needs large data computational operations at the desired data rate and accuracy. Large data requires more integrated circuit (IC) area with embedded bulk memories, which in turn consume further IC area. Trade-offs between power consumption, delay and IC area are always a concern of designers and researchers. New hardware architectures and accelerators are needed to explore and experiment with efficient machine-learning models. Many real-time applications like the processing of biomedical data in healthcare, smart transportation, satellite image analysis, and IoT-enabled systems have a lot of scope for improvements in terms of accuracy, speed, computational powers, and overall power consumption. This book deals with the efficient machine and deep learning models that support high-speed processors with reconfigurable architectures like graphics processing units (GPUs) and field programmable gate arrays (FPGAs), or any hybrid system. Whether for the veteran engineer or scientist working in the field or laboratory, or the student or academic, this is a must-have for any library.

Page count: 350

Year of publication: 2023

Scrivener Publishing, 100 Cummings Center, Suite 541J, Beverly, MA 01915-6106

Engineering Systems Design for Sustainable Development

Series Editor: Suman Lata Tripathi, PhD

Scope: A multidisciplinary approach is the foundation of this series, meant to intensify related research focuses and to achieve the desired goal of sustainability through different techniques and intelligent approaches. This series will cover the information from the ground level of engineering fundamentals leading to smart products related to design and manufacturing technologies including maintenance, reliability, security aspects, and waste management. The series will provide the opportunity for the academician and industry professional alike to share their knowledge and experiences with practitioners and students. This will result in sustainable developments and support to a good and healthy environment.

Publishers at Scrivener: Martin Scrivener and Phillip Carmical

Explainable Machine Learning Models and Architectures

Edited by

Suman Lata Tripathi

and

Mufti Mahmud

This edition first published 2023 by John Wiley & Sons, Inc., 111 River Street, Hoboken, NJ 07030, USA and Scrivener Publishing LLC, 100 Cummings Center, Suite 541J, Beverly, MA 01915, USA

© 2023 Scrivener Publishing LLC

For more information about Scrivener publications please visit www.scrivenerpublishing.com.

All rights reserved. No part of this publication may be reproduced, stored in a retrieval system, or transmitted, in any form or by any means, electronic, mechanical, photocopying, recording, or otherwise, except as permitted by law. Advice on how to obtain permission to reuse material from this title is available at http://www.wiley.com/go/permissions.

Wiley Global Headquarters: 111 River Street, Hoboken, NJ 07030, USA

For details of our global editorial offices, customer services, and more information about Wiley products visit us at www.wiley.com.

Limit of Liability/Disclaimer of Warranty

While the publisher and authors have used their best efforts in preparing this work, they make no representations or warranties with respect to the accuracy or completeness of the contents of this work and specifically disclaim all warranties, including without limitation any implied warranties of merchantability or fitness for a particular purpose. No warranty may be created or extended by sales representatives, written sales materials, or promotional statements for this work. The fact that an organization, website, or product is referred to in this work as a citation and/or potential source of further information does not mean that the publisher and authors endorse the information or services the organization, website, or product may provide or recommendations it may make. This work is sold with the understanding that the publisher is not engaged in rendering professional services. The advice and strategies contained herein may not be suitable for your situation. You should consult with a specialist where appropriate. Neither the publisher nor authors shall be liable for any loss of profit or any other commercial damages, including but not limited to special, incidental, consequential, or other damages. Further, readers should be aware that websites listed in this work may have changed or disappeared between when this work was written and when it is read.

Library of Congress Cataloging-in-Publication Data

ISBN 9781394185849

Front cover images supplied by Pixabay.com

Cover design by Russell Richardson

Preface

Machine learning and deep learning modules are now an integral part of many smart and automated systems where signal processing is performed at different levels. Signal processing in the form of text, images or video needs large data computational operations at the desired data rate and accuracy. Large data requires more integrated circuit (IC) area with embedded bulk memories, which in turn consume further IC area. Trade-offs between power consumption, delay and IC area are always a concern of designers and researchers. New hardware architectures and accelerators are needed to explore and experiment with efficient machine learning models. Many real-time applications like the processing of biomedical data in healthcare, smart transportation, satellite image analysis, and IoT-enabled systems still have a lot of scope for improvements in terms of accuracy, speed, computational powers and overall power consumption. This book deals with the efficient machine and deep learning models that support high-speed processors with reconfigurable architectures like graphics processing units (GPUs) and field programmable gate arrays (FPGAs), or any hybrid system.

This book covers the hardware architecture implementation of machine learning algorithms. The software implementation approach and the efficient hardware of machine learning applications with FPGAs are the distinctive features of the book. Security in biomedical signal processing is also a key focus.

Acknowledgements

The authors would like to thank the School of Electronics and Electrical Engineering, Lovely Professional University, Phagwara, India, and the Department of Computer Science & Information Technology, Nottingham Trent University, Nottingham, UK, for providing the facilities required to complete this book. The authors would also like to thank the researchers from different organizations, such as IITs, NITs, and government and private universities and colleges, who contributed chapters to this book.

1 A Comprehensive Review of Various Machine Learning Techniques

Pooja Pathak* and Parul Choudhary†

Dept. of Computer Engineering and Applications, GLA University, Mathura, India

Abstract

Artificial intelligence (AI) makes it possible to create intelligent systems that work like humans. It can be broadly classified into four techniques: machine learning, machine vision, automation and robotics, and natural language processing. These domains can learn from the data provided, identify hidden patterns and make decisions with minimal human intervention. There are three types of machine learning: supervised learning, unsupervised learning, and reinforcement learning. Machine learning techniques are beneficial for reducing the risk factor in decision making, and their main benefit is that they can do the work automatically once they have learned what to do. Therefore, in this work, we discuss the theory behind machine learning techniques and the tasks they perform, such as classification, regression and clustering. We also provide a detailed review of the state of the art of several machine learning algorithms, such as Naive Bayes, random forest, K-Means and SVM.

Keywords: Machine learning, classification, regression, recognition, clustering, etc.

1.1 Introduction

Machine learning spans IT, statistics, probability, AI, psychology, neurobiology, and other fields. Machine learning solves problems by creating a model that accurately represents a dataset. Teaching computers to mimic the human brain has advanced machine learning and expanded the field of statistics to include statistical computational theories of learning processes.

Machine learning is a subdomain of artificial intelligence. Two subsets of AI are machine learning and deep learning. Machine learning can work effectively on small datasets and takes less time to train, while deep learning works on large datasets and takes more time to train. Machine learning has three types: supervised, unsupervised and reinforcement learning. Supervised learning algorithms, such as neural networks, work with labelled datasets. Unsupervised learning algorithms, such as clustering, work with unlabeled datasets. Machine learning algorithms are grouped by desired outcome.

- Supervised learning: Algorithms map inputs to outputs. The classification problem is a standard supervised learning task. The learner must learn a function that maps a vector into one of several classes by looking at input-output examples.

- Unsupervised learning: The algorithm models a set of unlabeled inputs.

- Semi-supervised learning: Labelled and unlabeled examples are combined to create a classifier.

- Reinforcement learning: An algorithm is taught how to act based on data. Every action affects the environment, and the environment's feedback guides the learning algorithm.

- Transduction: This is similar to supervised learning but does not explicitly construct a function. Instead, it predicts new outputs based on training inputs, training outputs, and new inputs.

- Learning to learn: The algorithm learns its own inductive bias from past experience.

Machine learning algorithms are divided into supervised and unsupervised groups. Classes are predetermined in supervised algorithms. These classes are created as a finite set by humans, which labels a segment of data. Machine learning algorithms find patterns and build math models. These models are evaluated based on their predictive ability and data variance [1].

In this chapter, we review some techniques of machine learning algorithms and elaborate on them.

1.1.1 Random Forest

Machine learning is among the most powerful techniques for realising AI. Of its many algorithms, random forest is an ensemble classification technique known for its effectiveness and simplicity. Its important features include proximities, the out-of-bag error and the variable importance measure. It is based on ensemble learning, belongs to supervised learning, and can be used for both regression and classification. Its base classifier is a decision tree: the forest builds many decision trees and takes the class chosen by the majority of them as the final classification. As the number of trees in a forest increases, accuracy generally improves and the risk of overfitting is reduced. The random forest algorithm is often chosen because it takes relatively little training time, runs efficiently on large datasets with high prediction accuracy, and maintains accuracy even when a large proportion of the data is missing. Figure 1.1 shows a diagram of this algorithm.

Working: It is divided into two phases. First, it combines N decision trees to create a random forest. Second, it makes a prediction with each tree created in the first phase.

Step 1: Choose k data points at random from the training dataset.

Step 2: Build a decision tree on the selected subset.

Step 3: Choose the number of trees to build and repeat steps 1 and 2.

Step 4: For a new data point, collect the prediction of each tree and assign the point to the class that wins the majority vote.

The random forest algorithm is used in four major sectors: medicine, banking, marketing, and land use. Although it handles both regression and classification, it is mainly used for classification problems [2].
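The working steps of the random forest can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not a production implementation: each "tree" is a one-level decision stump, the names `train_stump`, `train_forest` and `predict_forest` and the toy dataset are hypothetical, and the random feature selection used by full random forests is omitted.

```python
import random
from collections import Counter

def majority(labels):
    return Counter(labels).most_common(1)[0][0]

def train_stump(points, labels):
    """Fit a one-level decision tree: the (feature, threshold) split with the fewest errors."""
    fallback = majority(labels)
    best = None
    for f in range(len(points[0])):
        for t in sorted({p[f] for p in points}):
            left = [y for p, y in zip(points, labels) if p[f] <= t]
            right = [y for p, y in zip(points, labels) if p[f] > t]
            l_lab = majority(left) if left else fallback
            r_lab = majority(right) if right else fallback
            errors = sum(y != l_lab for y in left) + sum(y != r_lab for y in right)
            if best is None or errors < best[0]:
                best = (errors, f, t, l_lab, r_lab)
    return best[1:]

def predict_stump(stump, point):
    f, t, l_lab, r_lab = stump
    return l_lab if point[f] <= t else r_lab

def train_forest(points, labels, n_trees=25, k=None, seed=0):
    rng = random.Random(seed)
    k = k or len(points)
    forest = []
    for _ in range(n_trees):                                   # repeat for the chosen number of trees
        idx = [rng.randrange(len(points)) for _ in range(k)]   # choose k data points at random
        forest.append(train_stump([points[i] for i in idx],    # build a tree on that subset
                                  [labels[i] for i in idx]))
    return forest

def predict_forest(forest, point):
    # Every tree votes; the majority class is the forest's prediction.
    return majority([predict_stump(s, point) for s in forest])

# Hypothetical toy data: class "a" has small first feature, class "b" large.
points = [(0.0, 3.0), (1.0, 7.0), (2.0, 1.0), (3.0, 5.0),
          (6.0, 4.0), (7.0, 8.0), (8.0, 2.0), (9.0, 6.0)]
labels = ["a"] * 4 + ["b"] * 4
forest = train_forest(points, labels)
```

Calling `predict_forest(forest, (-1.0, 5.0))` and `predict_forest(forest, (10.0, 5.0))` then classifies two unseen points by majority vote over the 25 stumps.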

1.1.2 Decision Tree

A decision tree [3] represents a classifier expressed as a recursive partition of the instance space. The decision tree is a directed tree with a root node that has no incoming edges. All of the other nodes have exactly one incoming edge. Nodes with outgoing edges are called internal or test nodes. The rest are leaves. Each test node in a decision tree divides the instance space into two or more sub-spaces based on input values. In the simplest case, each test considers a single attribute, such that the instance space is partitioned according to the attribute's value. In the case of numeric attributes, the condition refers to a range. Each leaf is assigned the best target class. The leaf may hold a probability vector that indicates the probability of the target attribute having a certain value. Instances are classified by navigating from the tree's root to a leaf according to the outcome of the tests along the way. Figure 1.2 shows a basic decision tree. Each node's branch is labelled with the attribute it tests. Given this classifier, an analyst can predict a customer's response and understand their behaviour [4].
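To make the root-to-leaf navigation concrete, here is a small sketch of a hand-written tree and a `classify` function. The tree, its attributes (`age`, `owns_home`) and its labels are hypothetical and are not taken from Figure 1.2; the dictionary layout is just one convenient way to represent test nodes and leaves.

```python
# Hypothetical tree: test nodes check one attribute, leaves hold a target class.
tree = {
    "attribute": "age",
    "threshold": 30,                 # numeric attribute: the test refers to a range
    "left": {"label": "no"},         # age <= 30
    "right": {                       # age > 30
        "attribute": "owns_home",    # categorical attribute: one branch per value
        "branches": {True: {"label": "yes"}, False: {"label": "no"}},
    },
}

def classify(node, instance):
    """Walk from the root to a leaf, following the outcome of each test."""
    while "label" not in node:
        value = instance[node["attribute"]]
        if "threshold" in node:
            node = node["left"] if value <= node["threshold"] else node["right"]
        else:
            node = node["branches"][value]
    return node["label"]

young = classify(tree, {"age": 25, "owns_home": True})
owner = classify(tree, {"age": 40, "owns_home": True})
renter = classify(tree, {"age": 40, "owns_home": False})
```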

Figure 1.1 Architecture of random forest.

Figure 1.2 Decision tree.

1.1.3 Support Vector Machine

SVM kernel is the name of a function that transforms low-dimensional space to high-dimensional space. Non-linear separation problems can be solved using this kernel.

The main advantage of SVM is that it is very effective in a high dimension case.

SVM is used for regression as well as classification, but it is best suited to classification. Its main purpose is to find a hyperplane that separates the classes in the space of input features. If there are two input features, the hyperplane is a line, and if there are three input features, the hyperplane is a 2-D plane. The drawback is that when the number of features exceeds three, the hyperplane becomes difficult to visualize [5].

SVM chooses the extreme vectors that help to create the hyperplane. The vectors nearest to the hyperplane are called support vectors, hence the name support vector machine, illustrated in Figure 1.3.

Figure 1.3 Support vector model.

Example: SVM can be understood with an example.

Images of dogs and cats can be classified with SVM. We first train the model and then test it on a new creature. Cats and dogs form two groups of data points that are separated by one hyperplane, and the support vectors are the extreme cases of each group. If the new creature falls on the cat side of the hyperplane, it is classified as a cat; otherwise it is classified as a dog.

There are two types of SVM kernel: linear and non-linear.

In linear SVM, the dataset can be divided into two classes by a single hyperplane; such data is called linearly separable data. In non-linear SVM, the dataset cannot be divided by a single hyperplane; such data is called non-linear data.
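The effect of mapping a low-dimensional space to a higher-dimensional one can be illustrated with a toy example. In the sketch below, the feature map `phi`, the data and the hyperplane `(w, b)` are all hypothetical and hand-picked; no SVM training is performed. It only shows that data which no single threshold can split in 1-D becomes linearly separable after the mapping.

```python
def phi(x):
    """Map a 1-D input to 2-D feature space: x -> (x, x^2)."""
    return (x, x * x)

def predict(w, b, z):
    """Side of the hyperplane w.z + b = 0 on which the point z falls."""
    score = sum(wi * zi for wi, zi in zip(w, z)) + b
    return 1 if score >= 0 else -1

def separable_by_threshold(xs, ys):
    """Try every 1-D threshold, in both orientations."""
    for t in xs:
        for sign in (1, -1):
            if all((sign if x > t else -sign) == y for x, y in zip(xs, ys)):
                return True
    return False

xs = [-3.0, -2.0, -0.5, 0.0, 0.5, 2.0, 3.0]
ys = [1, 1, -1, -1, -1, 1, 1]        # outer points are +1, inner points are -1

linear_in_1d = separable_by_threshold(xs, ys)

# Hand-picked hyperplane x^2 = 1.5 in feature space (an assumption, not a learned model).
w, b = (0.0, 1.0), -1.5
preds = [predict(w, b, phi(x)) for x in xs]
```

Here `linear_in_1d` is `False`, while `preds` reproduces `ys` exactly. A kernel SVM achieves the same effect implicitly, without computing the mapped features.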

1.1.4 Naive Bayes

Naive Bayes is a classification technique based on Bayes' theorem. It is a statistical method and one of the supervised learning algorithms, but it can be used for both clustering and classification, depending on the conditional probabilities involved [6].

It assumes a probabilistic model that captures uncertainty by determining the probabilities of outcomes. The main reasons for using Naive Bayes are that it can solve predictive problems and that it provides a way to evaluate learning algorithms. It yields practical algorithms and can combine all of the observed data [7].

Figure 1.4 Naive Bayes.

Consider a general probability distribution of two values, R(a1, a2). Using Bayes' rule, we obtain, without loss of generality, the equation in Figure 1.4 [8].
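Figure 1.4 is not reproduced here, so the following is an assumption about what it shows: the standard factorization of a joint distribution of two values, from which Bayes' rule follows, together with the "naive" conditional-independence assumption for a class $c$ and features $x_1, \dots, x_n$ (symbols introduced for illustration only).

```latex
R(a_1, a_2) = R(a_1 \mid a_2)\, R(a_2) = R(a_2 \mid a_1)\, R(a_1)
\quad\Longrightarrow\quad
R(a_1 \mid a_2) = \frac{R(a_2 \mid a_1)\, R(a_1)}{R(a_2)}

P(c \mid x_1, \dots, x_n) \;\propto\; P(c) \prod_{i=1}^{n} P(x_i \mid c)
```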

1.1.5 K-Means Clustering

It is one of the clustering techniques in unsupervised learning. It partitions a given dataset into a chosen number of clusters, k, and defines one center for each cluster [9], as shown in Figure 1.5. These k centers should be placed carefully, because different initial locations lead to different results. The better choice is therefore to place the centers as far away from each other as possible.
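A minimal sketch of the usual K-means iteration (Lloyd's algorithm) is given below, assuming squared Euclidean distance and a fixed number of iterations. The function name `kmeans` and the toy points are hypothetical; the initial centers are deliberately placed far apart.

```python
def kmeans(points, centers, iterations=10):
    """Alternate between assigning points to centers and moving the centers."""
    for _ in range(iterations):
        clusters = [[] for _ in centers]
        for p in points:
            # Assign each point to its nearest center (squared Euclidean distance).
            d = [sum((a - b) ** 2 for a, b in zip(p, c)) for c in centers]
            clusters[d.index(min(d))].append(p)
        # Move each center to the mean of its cluster; keep it if the cluster is empty.
        centers = [
            tuple(sum(col) / len(cl) for col in zip(*cl)) if cl else c
            for cl, c in zip(clusters, centers)
        ]
    return centers, clusters

points = [(1.0, 1.0), (1.0, 2.0), (2.0, 1.0), (8.0, 8.0), (9.0, 8.0), (8.0, 9.0)]
centers, clusters = kmeans(points, centers=[(1.0, 1.0), (8.0, 9.0)])
```

On this data the two centers settle at the means of the two visible groups, roughly (1.33, 1.33) and (8.33, 8.33).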

1.1.6 Principal Component Analysis

Principal component analysis (PCA), shown in Figure 1.6, is a statistical process that uses an orthogonal transformation to convert a set of observations of correlated variables into a set of linearly uncorrelated variables. The data dimension is reduced to make computations faster and easier, which is why the process is called a "dimensionality-reduction technique". It explains the variance-covariance structure of a set of variables through linear combinations of those variables [10].
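As a rough sketch of the idea, the first principal component can be found as the dominant eigenvector of the covariance matrix. The code below uses power iteration for that, which is a simplification chosen for brevity (library implementations typically use an SVD or a full eigendecomposition); the function name and data are hypothetical, and the data is assumed not to be constant.

```python
import math

def first_principal_component(data, iterations=100):
    n, d = len(data), len(data[0])
    means = [sum(row[j] for row in data) / n for j in range(d)]
    centered = [[row[j] - means[j] for j in range(d)] for row in data]
    # Sample covariance matrix (d x d).
    cov = [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    # Power iteration converges to the eigenvector with the largest eigenvalue.
    v = [1.0] * d
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    # Project the centered data onto the component: d dimensions become 1.
    scores = [sum(r[j] * v[j] for j in range(d)) for r in centered]
    return v, scores

data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]   # lies on the line y = 2x
direction, scores = first_principal_component(data)
```

Because the two variables are perfectly correlated here, a single component with direction proportional to (1, 2) captures all of the variance.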

1.1.7 Linear Regression

The linear regression algorithm finds relationships and dependencies between variables. It models the relationship between a continuous scalar dependent variable z (also called the label or target) and one or more explanatory variables (a D-dimensional vector), denoted X, using a linear function. Regression analysis predicts a continuous target variable, while classification predicts a label from a finite set. A multiple regression model that is linear in the input variables can be written as:

$$z = w_0 + w_1 x_1 + w_2 x_2 + \dots + w_D x_D$$

where $x_1, \dots, x_D$ are the components of X and $w_0, \dots, w_D$ are the model coefficients.

Figure 1.5 Clustering in K-means.

Figure 1.6 Principal component analysis [11].

Linear regression is one of the supervised learning algorithms [11]. We train the model on labelled data (training data) and use it to predict labels on unlabeled data (testing data).

Figure 1.7 shows how the model (red line) fits training data (blue points) with known labels (z axis) as accurately as possible by minimizing a loss function. We can then use the model to predict the unknown label (z value) for a new input (x value).
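For a single explanatory variable, fitting the line by minimizing a squared-error loss has a closed-form solution. The sketch below assumes that loss (ordinary least squares); the function name `fit_line` and the training values are hypothetical.

```python
def fit_line(xs, zs):
    """Ordinary least squares for z = w0 + w1 * x (minimizes the sum of squared errors)."""
    n = len(xs)
    mean_x, mean_z = sum(xs) / n, sum(zs) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxz = sum((x - mean_x) * (z - mean_z) for x, z in zip(xs, zs))
    w1 = sxz / sxx
    w0 = mean_z - w1 * mean_x
    return w0, w1

train_x = [0.0, 1.0, 2.0, 3.0]
train_z = [1.0, 3.0, 5.0, 7.0]        # labelled training data, exactly z = 1 + 2x
w0, w1 = fit_line(train_x, train_z)
prediction = w0 + w1 * 4.0            # predicted label for an unseen input x = 4
```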

1.1.8 Logistic Regression

Like Naive Bayes, logistic regression [12] extracts a set of weighted features from the input, takes logs, and combines them linearly. It is a discriminative classifier, while Naive Bayes is generative. Logistic regression, illustrated in Figure 1.7, predicts the probability of an event by fitting data to a logistic function. It can use several numerical or categorical predictor variables.

Figure 1.7 Visual representation of the logistic function [14].

Its hypothesis is defined as

$$h_\theta(x) = d(\theta^{T} x)$$

where $d$ is the sigmoid function, defined as

$$d(t) = \frac{1}{1 + e^{-t}}$$

In practice, a built-in optimizer such as `fmin_bfgs` (available in SciPy) can be used to find the minimum of the logistic regression cost function for a fixed dataset. Its arguments are the initial values of the parameters to be optimized and a function that, given the training set and a particular $\theta$, computes the logistic regression cost and its gradient with respect to $\theta$ for the dataset $(x, y)$. The final value of $\theta$ is used to plot the decision boundary on the training data.
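The cost-and-gradient function described above can be sketched as follows. Two assumptions are made for the sake of a dependency-free example: the cost is the usual cross-entropy (log-loss), and plain gradient descent is used in place of `fmin_bfgs`. The dataset is hypothetical, with a constant 1 as the first feature so that `theta[0]` acts as the intercept.

```python
import math

def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))

def cost_and_gradient(theta, X, y):
    """Cross-entropy cost of logistic regression and its gradient with respect to theta."""
    m = len(X)
    cost, grad = 0.0, [0.0] * len(theta)
    for xi, yi in zip(X, y):
        h = sigmoid(sum(t * v for t, v in zip(theta, xi)))
        cost += -(yi * math.log(h) + (1 - yi) * math.log(1 - h)) / m
        for j, v in enumerate(xi):
            grad[j] += (h - yi) * v / m
    return cost, grad

def fit(X, y, lr=0.5, steps=2000):
    theta = [0.0] * len(X[0])                 # initial values of the parameters
    for _ in range(steps):
        _, grad = cost_and_gradient(theta, X, y)
        theta = [t - lr * g for t, g in zip(theta, grad)]
    return theta

# Hypothetical, centered 1-D feature with overlapping classes.
X = [(1.0, -2.5), (1.0, -1.5), (1.0, -0.5), (1.0, 0.5), (1.0, 1.5), (1.0, 2.5)]
y = [0, 0, 1, 0, 1, 1]
theta = fit(X, y)
final_cost, _ = cost_and_gradient(theta, X, y)
boundary = -theta[0] / theta[1]               # x value where the predicted probability is 0.5
```

The same `cost_and_gradient` function could be handed to a quasi-Newton optimizer instead of the hand-written loop; only the minimization strategy would change.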

1.1.9 Semi-Supervised Learning

Semi-supervised learning combines supervised and unsupervised methods. It can be useful in machine learning and data mining where unlabeled data is present and labelling it is tedious. With supervised machine learning, you train an algorithm on a “labelled” dataset with outcome information [13].

1.1.10 Transductive SVM

TSVM is widely used for semi-supervised learning with partially labelled data, although its generalization behaviour is still not well understood. It assigns labels to the unlabeled data so that the margin between the classes is maximized. Finding the exact TSVM solution is NP-hard [14].

1.1.11 Generative Models

Generative models describe how the data is generated. They model both the features and the class (the complete data). Any algorithm that models P(x, y) is generative, because its probability distribution can be used to generate data points. For a mixture model, one labelled sample per component is enough to identify the mixture distribution.

1.1.12 Self-Training

A classifier is first trained on the labelled data. The unlabeled data is then fed to the classifier, and the unlabeled points together with their predicted labels are added to the training set. This procedure is repeated. The classifier teaches itself using its own predictions, hence the name [15].
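The self-training loop can be sketched with any base classifier. The example below assumes a very simple one (nearest class mean on 1-D data) and adds only the most confident predictions in each round; both choices, the function names and the data are hypothetical.

```python
def centroids(samples):
    """Nearest-centroid 'classifier': the mean of each class's points (1-D for brevity)."""
    by_class = {}
    for x, label in samples:
        by_class.setdefault(label, []).append(x)
    return {label: sum(v) / len(v) for label, v in by_class.items()}

def self_train(labelled, unlabelled, rounds=5, per_round=2):
    labelled, pool = list(labelled), list(unlabelled)
    for _ in range(rounds):
        if not pool:
            break
        model = centroids(labelled)            # train on the current labelled set
        # For each unlabelled point: (distance to nearest centroid, predicted label, point).
        scored = sorted(
            min((abs(x - c), label) for label, c in model.items()) + (x,)
            for x in pool
        )
        # Move the most confident predictions (smallest distance) into the training set.
        for dist, label, x in scored[:per_round]:
            labelled.append((x, label))
            pool.remove(x)
    return centroids(labelled), labelled

labelled = [(0.0, "low"), (10.0, "high")]
unlabelled = [1.0, 2.0, 3.0, 7.0, 8.0, 9.0]
model, grown = self_train(labelled, unlabelled)
```

Starting from one labelled example per class, the training set grows to eight points after three rounds, and the class means move to 1.5 and 8.5.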

1.1.13 Reinforcement Learning

Reinforcement learning focuses on how software agents should act to maximise cumulative reward. Reinforcement learning is one of three basic machine learning paradigms [16, 17].

1.2 Conclusions

In this chapter we surveyed some machine learning algorithms. Machine learning is the better choice when only a small amount of labelled data is available. In the future we will review reinforcement learning. Today, almost everyone uses machine learning algorithms, whether knowingly or unknowingly.

This chapter summarizes supervised learning algorithms and explains machine learning. It also describes machine learning algorithm structures. This area has seen a lot of development in the last decade. The learning methods produced excellent results that were impossible in the past. Due to rapid progress, developers can improve supervised learning methods and algorithms.

References

1. Carbonell, Jaime G., Ryszard S. Michalski, and Tom M. Mitchell. An overview of machine learning. Machine Learning (1983): 3-23.

2. Mahesh, Batta. Machine learning algorithms-a review. International Journal of Science and Research (IJSR). [Internet] 9 (2020): 381-386.

3. Ayodele, Taiwo Oladipupo. Types of machine learning algorithms. New Advances in Machine Learning 3 (2010): 19-48.

4. Ray, Susmita. A quick review of machine learning algorithms. 2019 International Conference on Machine Learning, Big Data, Cloud and Parallel Computing (COMITCon). IEEE, 2019.

5. Vabalas, Andrius, et al. Machine learning algorithm validation with a limited sample size. PloS one 14.11 (2019): e0224365.

6. Tom Mitchell, McGraw Hill (2015). Machine Learning.

7. S. B. Kotsiantis. (2007) Supervised Machine Learning: A Review of Classification Techniques. In Proceedings of the 2007 Conference on Emerging Artificial Intelligence Applications in Computer Engineering: Real Word AI Systems with Applications in eHealth, HCI, Information Retrieval and Pervasive Technologies, The Netherlands.

8. Amanpreet Singh, Narina Thakur, Aakanksha Sharma (2016). A review of supervised machine learning algorithms. In Computing for Sustainable Global Development (INDIACom), 2016 3rd International Conference on.

9. http://www.slideshare.net/GirishKhanzode/supervised-learning-52218215

10. https://www.researchgate.net/publication/221907660_Types_of_Machine_Learning_Algorithms

11. http://radimrehurek.com/data_science_python/

12. http://www.slideshare.net/cnu/machine-learning-lecture-3Anoverviewofthesupervisedmachinelearningmethods

13. http://gerardnico.com/wiki/data_mining/simple_regression

14. http://aimotion.blogspot.mk/2011/11/machine-learning-with-python-logistic.html

15. Dasari L. Prasanna; Suman Lata Tripathi, "Machine and Deep‐Learning Techniques for Text and Speech Processing," in Machine Learning Algorithms for Signal and Image Processing, IEEE, 2023, pp. 115-128, doi: 10.1002/9781119861850.ch7.

16. Kanak Kumar; Kaustav Chaudhury; Suman Lata Tripathi, "Future of Machine Learning (ML) and Deep Learning (DL) in Healthcare Monitoring System," in Machine Learning Algorithms for Signal and Image Processing, IEEE, 2023, pp. 293-313, doi: 10.1002/9781119861850.ch17.

17. Deepika Ghai, Suman Lata Tripathi, Sobhit Saxena, Manash Chanda, Mamoun Alazab, Machine Learning Algorithms for Signal and Image Processing, Wiley IEEE Press-2022, ISBN: 978-1-119-86182-9.

Notes

* Corresponding author

† Corresponding author

2 Artificial Intelligence and Image Recognition Algorithms

Siddharth1*, Anuranjana1 and Sanmukh Kaur2

1Dept. of Information Technology, Amity School of Engineering and Technology, Amity University Uttar Pradesh, Noida, India

2Dept. of Electronics and Communication Engineering, Amity School of Engineering and Technology, Amity University Uttar Pradesh, Noida, India

Abstract

Various computer vision tasks, including video object tracking and image detection/classification, have been successfully achieved using feature detectors and descriptors. In various phases of the detection-description pipeline, many techniques use image gradients to characterize local image structures. In recent times, convolutional neural networks (CNNs) have taken the place of some or all of these algorithms that rely on detectors and descriptors. Earlier algorithms, such as the Harris Corner Detector, SIFT, ASIFT and SURF, which were once hailed as cutting-edge image recognition algorithms, have now been replaced by more robust approaches. This document highlights the generational improvement in image recognition algorithms and draws a contrast between current CNN-based image recognition techniques and previous state-of-the-art algorithms that do not rely on CNNs. The purpose of this study is to emphasize the necessity of using CNN-based algorithms over traditional algorithms, which are not as dynamic.

Keywords: Convolution Neural Networks (CNN), Scale Invariant Feature Transform (SIFT), Artificial Intelligence (AI), Speeded Up Robust Features (SURF)

2.1 Introduction

The rising capabilities of artificial intelligence in areas like high-level thinking and sensory handling have made it more revered than human intelligence. Its encounter nature excels in every significant aspect of the supervisory environment. The sole focus of critics since the advent of the fifth computing period has been to pretend that the brain-computer is viable and dynamic [1]. Enabling computers to decode and identify the contents of the billions of photographs and millions of hours of video that are published on the internet each year is a challenge of immense importance. Accordingly, there has been a lot of study on deriving high-level information from raw pixel data.

Many conventional techniques rely on the detection and extraction of significant key points. Numerous techniques have been put forth to find and describe such features [2, 3]. SIFT and ASIFT [4], which build on and improve the Harris Corner Detector [5], are two of the most often used techniques, along with SURF [6]. Image matching algorithms usually consist of two parts: a detector and a descriptor. They first detect points of interest in the compared images and select a region around each point of interest, and then associate an invariant descriptor or feature with each region. Correspondences may thus be established by matching the descriptors. Detectors and descriptors should be as invariant as possible [3].

In recent years, researchers have proposed convolutional neural network (CNN) based algorithms for identifying and categorizing features [7]. CNNs have been effectively employed to tackle challenging problems like speech recognition and image classification. Their precursor, the neocognitron, was introduced by Fukushima in 1980, and the modern architecture was popularized by LeCun et al. in 1998. CNNs are made up of neurons, each with learnable weights and biases. A CNN includes an input layer, an output layer, and several hidden layers, where the hidden layers include standard layers such as convolutional, pooling, and fully connected layers [10]. The convolutional layer combines two sets of data using a convolution operation, emulating the response of an individual neuron to visual stimuli. The pooling layer reduces the dimensions by combining the outputs of a cluster of neurons at one layer into a single neuron in the next layer [8].
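The convolution and pooling operations can be illustrated on a tiny image. The sketch below assumes a "valid" cross-correlation (which is what CNN libraries usually compute under the name convolution), non-overlapping 2 x 2 max pooling, and a hand-picked edge kernel; in a real CNN the kernel weights are learned.

```python
def conv2d(image, kernel):
    """'Valid' 2-D cross-correlation of an image with a kernel."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [sum(image[i + a][j + b] * kernel[a][b] for a in range(kh) for b in range(kw))
         for j in range(out_w)]
        for i in range(out_h)
    ]

def max_pool(fmap, size=2):
    """Non-overlapping max pooling: each size x size block becomes a single value."""
    return [
        [max(fmap[i + a][j + b] for a in range(size) for b in range(size))
         for j in range(0, len(fmap[0]) - size + 1, size)]
        for i in range(0, len(fmap) - size + 1, size)
    ]

image = [
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
]
kernel = [[-1, 1], [-1, 1]]           # responds to dark-to-bright vertical edges
features = conv2d(image, kernel)      # 4 x 4 feature map
pooled = max_pool(features)           # 2 x 2 map after pooling
```

The feature map is non-zero only in the column where the vertical edge sits, and pooling keeps that response while halving each dimension.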

This paper highlights the transition from traditional to CNN-based image detection algorithms. We also draw a contrast between the two approaches and explain why CNNs are preferred over the traditional image recognition/classification methods.

2.2 Traditional Image Recognition Algorithms

Traditional image recognition algorithms consist of a detector and descriptor vector. Before assigning an invariant descriptor or feature to each region, they first identify places of interest in the compared pictures and choose a region surrounding each one. Thus, by matching the descriptors, correspondences can be found. It is best if detectors and descriptors are as invariant as feasible [3].

2.2.1 Harris Corner Detector (1988)

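It is a mathematical approach to extracting corners and deducing features in an image. It was first introduced in 1988 and has since been used in many algorithms. Corners are determined by calculating the change in intensity when a window is shifted over the image. For a shift $(u, v)$, this change is given by

$$E(u, v) = \sum_{x, y} w(x, y)\,\bigl[I(x + u, y + v) - I(x, y)\bigr]^2$$

where $I(x + u, y + v)$ represents the shifted (new) intensity, $(u, v)$ is the shift, $I(x, y)$ represents the original intensity, and $w(x, y)$ is the window function.

Using a first-order Taylor expansion of the 2D function, the above equation can be rewritten in matrix form as follows:

$$E(u, v) \approx \begin{bmatrix} u & v \end{bmatrix} M \begin{bmatrix} u \\ v \end{bmatrix}$$

where the $M$ in the above equation is

$$M = \sum_{x, y} w(x, y) \begin{bmatrix} I_x^2 & I_x I_y \\ I_x I_y & I_y^2 \end{bmatrix}$$

and $I_x$ and $I_y$ are the image derivatives in the x and y directions.

We then calculate the trace and determinant of the matrix $M$ to find the score $R$:

$$R = \det(M) - k\,\bigl(\operatorname{trace}(M)\bigr)^2$$

where $\det(M) = \lambda_1 \lambda_2$, $\operatorname{trace}(M) = \lambda_1 + \lambda_2$, $\lambda_1$ and $\lambda_2$ are the eigenvalues of $M$, and $k$ is an empirically chosen constant.

So, the magnitudes of these eigenvalues decide whether a region is a corner, an edge, or flat.

- When |R| is small, which happens when λ1 and λ2 are small, the region is flat.

- When R < 0, which happens when λ1 >> λ2 or vice versa, the region is an edge.

- When R is large, which happens when λ1 and λ2 are large and λ1 ∼ λ2, the region is a corner.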

The Harris Corner Detector is thus a simple mathematical method for identifying which windows exhibit significant differences when moved in any direction. Each window has a score R associated with it, and based on this score we determine which windows are corners and which are not [5]. The Harris Corner Detector is found to be invariant to translation, rotation, and illumination changes [11], but it is not scale invariant. As we will see, some algorithms adopted and improved on the Harris Corner Detector, the most famous one being SIFT [12]. Figure 2.1 shows the progression from a flat region to an edge and then to a corner region.
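The score R can be computed for a small window with a few lines of code. The sketch below makes several simplifying assumptions: the whole image is treated as one window with uniform weights, gradients are taken by central differences on interior pixels, and k = 0.04 is used as a typical value. The function name and the three 5 x 5 test images are hypothetical.

```python
def harris_response(image, k=0.04):
    """Harris score R = det(M) - k * trace(M)^2 for one window covering the image."""
    h, w = len(image), len(image[0])
    sxx = syy = sxy = 0.0
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            ix = (image[y][x + 1] - image[y][x - 1]) / 2.0   # derivative in x
            iy = (image[y + 1][x] - image[y - 1][x]) / 2.0   # derivative in y
            sxx += ix * ix
            syy += iy * iy
            sxy += ix * iy
    det = sxx * syy - sxy * sxy      # determinant of M
    trace = sxx + syy                # trace of M
    return det - k * trace * trace

flat = [[1] * 5 for _ in range(5)]
edge = [[0, 0, 1, 1, 1] for _ in range(5)]
corner = [[1 if (x >= 2 and y >= 2) else 0 for x in range(5)] for y in range(5)]

r_flat, r_edge, r_corner = (harris_response(img) for img in (flat, edge, corner))
```

For these images the score is zero for the flat window, negative for the edge and positive for the corner, matching the three cases listed in the text.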

Figure 2.1 Visualizing corner detection.

2.2.2 SIFT (2004)

In the SIFT (scale-invariant feature transform) algorithm for image processing and recognition, we try to reduce the image to a set of locally distinct points together with information describing those points. We then look for those points in other images, and if the data matches, we can say that the images are similar, even if they were taken from different viewpoints [4]. The initial goal of the SIFT method is to compare two images (or two image parts) that can be deduced from each other (or from a common image) by a rotation, a translation, and a scale change. The method turns out to be robust also to rather large changes in viewpoint angle, which explains its success [13].

We take a patch around the key point into account and use the intensity values, or the changes in intensity values (the image gradients), in this area to describe it and turn it into a descriptor vector.

Now if we detect the same key points in multiple images, we can conclude that they show the same scene. Here, "the same" does not mean that the images are identical, as they can be taken from different angles and under different lighting conditions.

The two images need not be identical, but they show the same location from varying angles, and by matching the key points in the images we can conclude that they are of the same place [4, 14].

Computation of the SIFT Key Points

The locally distinct points, or key points, are found using the Difference of Gaussians approach: the image is blurred using Gaussian blur at different magnitudes. Adjacent blurred images are then subtracted from each other, and the difference images are stacked on top of each other. Then we look for extreme points, so points where the neighbours in the X-Y