- Publisher: Open Book Publishers

- Language: English

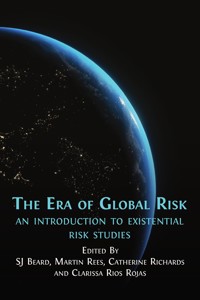

This innovative and comprehensive collection of essays explores the biggest threats facing humanity in the 21st century; threats that cannot be contained or controlled and that have the potential to bring about human extinction and civilization collapse. Bringing together experts from many disciplines, it provides an accessible survey of what we know about these threats, how we can understand them better, and most importantly what can be done to manage them effectively.

These essays pair insights from decades of research and activism around global risk with the latest academic findings from the emerging field of Existential Risk Studies. Voicing the work of world-leading experts and tackling a variety of vital issues, they weigh up the demands of natural systems against political pressures and technological advances to build an empowering vision of how we can safeguard humanity’s long-term future.

The book combines a comprehensive survey of how to study and manage global risks with in-depth discussion of core risk drivers, including environmental breakdown, novel technologies, global-scale natural disasters, and nuclear threats. The Era of Global Risk offers a thorough analysis of the most serious dangers to humanity.

Inspiring, accessible, and essential reading for both students of global risk and those committed to its mitigation, this book poses one critical question: how can we make sense of this era of global risk and move beyond it to an era of global safety?

Year of publication: 2023

THE ERA OF GLOBAL RISK

An Introduction to Existential Risk Studies

Edited by SJ Beard, Martin Rees, Catherine Richards, and Clarissa Rios Rojas

©2023 SJ Beard, Martin Rees, Catherine Richards, and Clarissa Rios Rojas. Copyright of individual chapters is maintained by the chapters’ authors

This work is licensed under an Attribution-NonCommercial 4.0 International license (CC BY-NC 4.0). This license allows you to share, copy, distribute and transmit the text, and to adapt the text for non-commercial purposes, provided that attribution is made to the authors (but not in any way that suggests that they endorse you or your use of the work). Attribution should include the following information:

SJ Beard, Martin Rees, Catherine Richards and Clarissa Rios Rojas (eds), The Era of Global Risk: An Introduction to Existential Risk Studies. Cambridge, UK: Open Book Publishers, 2023, https://doi.org/10.11647/OBP.0336

Copyright and permissions for the reuse of many of the images included in this publication differ from the above. This information is provided in the captions and in the list of illustrations. Every effort has been made to identify and contact copyright holders and any omission or error will be corrected if notification is made to the publisher.

Further details about CC BY-NC licenses are available at http://creativecommons.org/licenses/by-nc/4.0/

All external links were active at the time of publication unless otherwise stated and have been archived via the Internet Archive Wayback Machine at https://archive.org/web

Digital material and resources associated with this volume are available at https://doi.org/10.11647/OBP.0336#resources

ISBN Paperback: 978-1-80064-786-2

ISBN Hardback: 978-1-80064-787-9

ISBN Digital (PDF): 978-1-80064-788-6

ISBN Digital ebook (epub): 978-1-80064-789-3

ISBN XML: 978-1-80064-791-6

ISBN HTML: 978-1-80064-792-3

DOI: 10.11647/OBP.0336

Cover image: Anirudh, Our Planet (October 14, 2021), https://unsplash.com/photos/Xu4Pz7GI9JY. Cover design by Jeevanjot Kaur Nagpal.

Contents

Preface

Martin Rees

Introduction

SJ Beard, Martin Rees, Catherine Richards, and Clarissa Rios Rojas

1. A Brief History of Existential Risk and the People Who Worked to Mitigate It

SJ Beard and Rachel Bronson

2. Theories and Models: Understanding and Predicting Societal Collapse

Sabin Roman

3. Existential Risk and Science Governance

Lalitha S. Sundaram

4. Beyond ‘Error and Terror’: Global Justice and Global Catastrophic Risk

Natalie Jones

5. We Have to Include Everyone: Enabling Humanity to Reduce Existential Risk

Sheri Wells-Jensen and SJ Beard

6. Natural Global Catastrophic Risks

Lara Mani, Doug Erwin, and Lindley Johnson

7. Ecological Breakdown and Human Extinction

Luke Kemp

8. Biosecurity, Biosafety, and Dual Use: Will Humanity Minimise Potential Harms in the Age of Biotechnology?

Kelsey Lane Warmbrod, Kobi Leins, and Nancy Connell

9. From Turing’s Speculations to an Academic Discipline: A History of AI Existential Safety

John Burden, Sam Clarke, and Jess Whittlestone

10. Military Artificial Intelligence as a Contributor to Global Catastrophic Risk

Matthijs M. Maas, Kayla Lucero-Matteucci, and Di Cooke

Afterword

SJ Beard

Contributor Biographies

Index

Preface

Martin Rees

© 2023 Martin Rees, CC BY-NC 4.0 https://doi.org/10.11647/OBP.0336.12

This book is about our entire planet’s future. The stakes have never been higher. The Earth has existed for 45 million centuries, but this is the first century in which one dominant species—ours—can determine, for good or ill, the future of the entire biosphere. Over most of history, the benefits we garner from the natural world have seemed an inexhaustible resource; the worst terrors humans confronted—floods, earthquakes, and diseases—came from nature too. But we are now deep in the ‘Anthropocene’ era. The human population, now exceeding eight billion, makes collective demands on energy and resources that are not sustainable without new technology and threaten irreversible changes to the climate. Novel technologies—especially bio and cyber—are socially transformative, but open up the possibility of severe threats if misapplied. The worst threats to humanity are no longer ‘natural’ ones; they are caused (or at least aggravated) by us.

Moreover, the world is far more interconnected by travel, the internet and supply chains; a disaster in one region will cascade globally.

Despite the concerns, there are some countervailing grounds for optimism. For most people in most nations, there has never been a better time to be alive, thanks to advances in health, agriculture, and communication, which have boosted the Global South as well as the northern world. Everyday life has been transformed in less than two decades by mobile phones, social media, and the internet; we would have been far less able to cope with recent shutdowns without these facilities. Computers double their power every two years. Gene-sequencing is a million times cheaper than it was 20 years ago: spin-offs from genetics could soon be as pervasive as those we’ve already seen from the microchip.

And this optimism about science need not be eroded by COVID-19. Indeed, in dealing with this globe-spanning plague, science has been our salvation. The response has shown the scientific community’s strengths—a colossal worldwide effort to develop and deploy vaccines, combined with honest efforts to keep the public informed. The crucial role of the underlying science—and the ‘scenario planning’ needed to minimise the likelihood of bio- and cyber- catastrophes—are key themes of the present book.

The challenges to governance posed by COVID-19 were unprecedented (at least in peacetime) in their urgency, impact, and global scope; ‘experts’ had to engage with politicians and the wider public in order to overcome them. But the world would have coped far better had there been more planning and preparedness at international levels. And there are conjectural threats (engineered pandemics and massive cyber attacks, for instance) that could create at least equal devastation at any time. Indeed, their probability and potential severity are increasing. COVID-19 must act as a wake-up call, reminding us, and our governments, of our vulnerabilities.

Looming over the world in this century is the threat of climate change. This is potentially a ‘global fever’, in some ways resembling a slow-motion version of COVID-19. For instance, both crises aggravate the level of inequality within and between nations. Those in megacities in the majority of the world can’t isolate from rogue viruses; medical care is minimal, and they are less likely to have access to vaccines. Likewise, it is those countries, and the poorest people in them, that will suffer most from global warming and the subsequent effects on food production and water supplies. Climate change and environmental degradation may well, later this century, have global consequences that are even graver than pandemics and could last longer (or, indeed, be irreversible). So too could the loss of biodiversity, leading to mass extinctions. Many, from Pope Francis downward, believe that the natural world’s diversity has value in its own right, quite apart from its crucial importance for us humans.

But a potential slow-motion catastrophe doesn’t engage our public and politicians: our predicament resembles that of the proverbial boiling frog, content in a warming tank until it’s too late to save itself. We fail to prioritise prevention and countermeasures, because the worst impacts of such a catastrophe stretch beyond the time-horizon of political and investment decisions. Politicians recognise a duty to prepare for floods, terrorist acts, and other risks that are likely to materialise in the short term and are localised within their own domain. But unless there is a clamour from voters, they have minimal incentive to address longer-term threats that aren’t likely to occur while they’re still in office, and which are global rather than local.

And of course most of the challenges are global. Coping with COVID-19 is plainly a global challenge. Similarly, the threats of potential shortages of food, water, and natural resources—and the challenge of transitioning to low carbon energy—can’t be overcome by each nation separately. Nor can the regulation of potentially threatening innovations, especially those spearheaded by globe-spanning conglomerates. Indeed, a key issue is to what extent, in a ‘new world order’, nations will need to yield more sovereignty to new organisations along the lines of the IAEA, WHO, etc. And how do we manage the tension between privacy, security, and freedom in a world where small groups (or even a malign individual) empowered by bio or cyber technology could cause global devastation?

Scientists have an obligation to promote beneficial applications of their work in meeting these global challenges. Their input is crucial in helping governments decide wisely which scary scenarios—ecothreats or risks from misapplied technology—can be dismissed as science fiction, and how best to avoid the serious ones. We also need the insights of social scientists to help us envisage how human society can flourish in a networked and AI-dominated world.

The case for intense study of these extreme threats is compelling. But, until recently, they received minimal attention—far less than has been devoted to ‘routine’ accidents. Unless voters speak up, governments won’t properly prioritise the study of mega-threats that could jeopardise the very survival of future generations. So scientists must enhance their leverage by involvement with NGOs, via blogging and journalism, and by enlisting charismatic individuals and the media to amplify their voices and change the public mindset. It is encouraging to witness the number of activists increasing, especially the young—who can hope to live into the 22nd century. Their campaigning is welcome. Their commitment gives grounds for hope.

These areas of study, crucial to the world’s future, are still underprioritised in the world of academia and policy studies. I am glad that my university, Cambridge, is one of a still-small number that have created such a centre: the Centre for the Study of Existential Risk (CSER). Staffed by idealistic young researchers, with expertise spanning natural and social sciences, the CSER has helped to deepen and solidify our understanding of this crucial agenda, and has thereby gained traction with policymakers.

This book, marking the 10th anniversary of CSER’s foundation—and written in collaboration with experts from other centres—offers a perspective on the key topics, in a clear format and style which we hope will spread an informed awareness of the epochal issues that it addresses.

I am an astronomer, and would like to close with a cosmic perspective. Our Earth—this tiny ‘pale blue dot’ in the cosmos—is a special, maybe even unique, place. We are its stewards during an especially crucial era. That is an important message for us all.

We need to think globally, we need to think rationally, we need to think long-term—we need to be ‘good ancestors’, empowered by 21st-century technology but guided by values that science alone cannot provide. This book should provide some grounding for these aspirations.

Introduction

SJ Beard, Martin Rees, Catherine Richards, and Clarissa Rios Rojas

© 2023 SJ Beard et al., CC BY-NC 4.0 https://doi.org/10.11647/OBP.0336.13

We are living in an era of global risk. While policymakers were once able to focus exclusively on the risks facing their particular constituency (be that a country, corporation, community, or institution), now everybody must take account of the threats that endanger humanity as a whole. These come in many forms, from global-scale natural disasters (like volcanic super-eruptions) to anthropogenic environmental destabilisation (like climate change and loss of biosphere integrity), and from calamities that spread rapidly around our highly networked planet (like viruses and cyber threats) to the development of novel technologies with high destructive potential (such as artificial intelligence and biotechnologies). Reflecting this trend, the recent sixth edition of the United Nations Global Assessment Report on Disaster Risk Reduction, Our World at Risk, calls on member states for transformative governance that will lead to a resilient future, particularly given the increased occurrence and intensity of disasters. Similarly, the UN Secretary General’s report, ‘Our Common Agenda’, seeks to centralise the initiatives needed for better management of major global risks within discussions of global policy and governance.

One of the most prominent advocates for the importance of global risk has been the World Economic Forum, which defines a global risk as “the possibility of the occurrence of an event or condition that, if it occurs, could cause significant negative impact for several countries or industries”. Since 2006, the Forum’s annual Global Risks Report, based on a comprehensive risk perception survey of its members and stakeholders, has provided something of a barometer showing which risks are of greatest concern. For instance, their inaugural report found that:

The 2006 risk landscape is dominated by high impact headline risks, such as terrorism and an influenza pandemic, which top the global risk mitigation agenda and are increasingly well understood. Other risks, like climate change, whose cumulative impact will only be felt over the longer term, have begun to move to the centre of the policy debate and may offer the greatest challenges for global risk mitigation in the future.1

However, by the time of its most recent edition in 2022, the focus of the report had shifted markedly: it found that, over the next five years, leaders are most concerned about societal risks (such as social cohesion, livelihood, and mental health) and environmental risks, but that “over a 10-year horizon, the health of the planet dominates concerns: environmental risks are perceived to be the five most critical long-term threats to the world as well as the most potentially damaging to people and planet”.2 One trend that can be observed in this shift in risk perception is a long-term move away from concern about external threats we need to secure ourselves against (such as specific viruses or terrorism) and towards systemic risks that we, as human beings, are creating for ourselves, through poor governance, short-termism, and an overly narrow focus on economic productivity.

Such a shift is very much in line with the developing understanding of global risk at the Centre for the Study of Existential Risk, located at the University of Cambridge. However, our concern is not simply to understand what risks decision-makers are most concerned by, but which ones they should be more concerned about, and what they need to do to mitigate those risks. There is an increasingly rich vocabulary for understanding global risks,3 and with this, it has become clear that not all risks are the same. Some are also ‘extreme’, both in the sense that they involve extreme amounts of harm and that they could push global systems outside of their ‘normal operating space’.4 Within this class, two further subcategories have received particular attention. Global catastrophic risks (GCRs) involve events with one or more of the following characteristics: (a) “sudden, extraordinary, widespread disaster beyond the collective capability of national and international governments and the private sector to control”;5 (b) significant harm at the global scale, such as a large and sudden reduction in the global population,6 and/or (c) a failure of critical global systems,7 including the cluster of sociotechnological systems we sometimes call ‘human civilisation’. Finally, existential risks are those with the very worst potentialities, usually understood to involve either the extinction of humanity8 or “the permanent and drastic destruction of its potential for desirable future development” (according to some assumptions about what desirable futures might be).9 While these two are often conflated, it might be best to separate them into extinction risk and existential risk.10

There are many reasons why we should be especially concerned about extreme global risks, global catastrophic risks, and existential risks. Moral philosophers have argued that we have the strongest possible moral duty to mitigate these risks, whether on utilitarian,11 idealist,12 agent-centred,13 or social-contract-based14 grounds. Psychologists have also shown how people are systematically biased towards downplaying and ignoring these risks, and thus we need to work hard if we are to overcome these biases and give the risks the attention they deserve.15 However, increasingly, we can also see that paying attention to risks such as these has tremendous practical importance. It seems likely that the current level of these risks is such that they could significantly impact the lives and futures of many people who are alive today, as well as being a significant threat to the long-term goals of many kinds of institution, from governments and charities to investors and corporations. In this book, we will not pay much attention to the reasons why one should focus on extreme global risks. Instead, we simply note that, if given the choice, most people would unquestionably want to protect themselves and others from such risks, and thus focus on the dual questions of how to understand these risks and manage them effectively.

The following ten chapters set out a number of different approaches to thinking about global, extreme, global catastrophic, and existential risks. The first five focus on the emerging science of global risk itself and build the case for an open and creative approach to studying these risks, drawing on lessons from the past, from the rich interdisciplinary literature on social and ecological collapse, from the experiences of people working on the governance of science and technology, from discussions about global injustice, and from the diversity of human beings with an interest in safeguarding our collective future. The second set of chapters then goes on to provide more detailed assessments of different risk drivers (including natural disasters, environmental breakdown, biotechnology, the potential of transformative future artificial intelligence (AI) in general, and the military application of AI in particular); the peculiar challenges to studying and mitigating each of these; and how they compare. Most, but not all, of these chapters were written by researchers affiliated to, or associated with, the Centre for the Study of Existential Risk at the University of Cambridge, and the chapters aim to provide those researchers’ personal accounts of how best to think about this aspect of global risk while also engaging with, and surveying, a far broader range of literature and perspectives on the subject.

Our first chapter, ‘A Brief History of Existential Risk and the People Who Worked to Mitigate It’ by SJ Beard and Rachel Bronson, provides a historical account of our growing understanding of global risks and how scientists and others have worked to mitigate them. Looking back over the past 75 years, the chapter shows us how humanity has had to grapple with threats ranging from nuclear weapons, environmental breakdown, and novel technologies to the political and technological forces that created them. However, it also surveys the many active scientific and political movements that have worked to avert disaster, as curious, compassionate, and courageous people have sought to understand these terrifying forces, bring them to wider public attention, and work to prevent human extinction and the collapse of civilisation. Using the iconic Doomsday Clock of the Bulletin of the Atomic Scientists as a guide, it briefly tells the story of some of these people and organisations who sought to guide us safely through the 20th century and beyond. Understanding this history both helps us to understand the risks that continue to threaten humanity and offers opportunities to learn from the successes and failures of the past, rather than focusing only on whatever catastrophe is most immediate in our collective attention. In particular, the chapter highlights the importance of reinforcing key messages about risks, modelling extreme scenarios, managing the pace of scientific research, and placing its findings in the public domain; these messages are echoed in subsequent chapters.

The second chapter, ‘Theories and Models: Understanding and Predicting Societal Collapse’ by Sabin Roman, looks at what those who study global risks can learn from efforts to understand and model the process of societal and ecological collapse, which is a significant global risk in itself and also an example of the kind of extreme, non-linear, and potentially dangerous transition that is associated with extreme global risks more generally. Surveying the extensive and interdisciplinary literature on this subject, in some cases extending back several centuries, the chapter illustrates the ways in which many qualitative and quantitative modelling approaches can be applied to shed light on the causes and nature of such collapses. Some of these approaches are primarily concerned with the exogenous causes of collapse, such as conflict or environmental catastrophes. However, other approaches view collapse as endogenous to societies themselves, originating in economic inequality or shifting societal dynamics, and it is argued that even in the presence of external causes we cannot fully understand collapse unless we take account of these endogenous effects that ultimately make societies vulnerable in the first place. Perhaps most promisingly, the chapter indicates how we can create constructive new approaches based around modelling a variety of feedback loops between different elements, and how these can be adapted to generate and test new hypotheses about social and ecological collapse (either past or future).

Chapter 3, ‘Existential Risk and Science Governance’ by Lalitha S. Sundaram, looks at how the governance of science might matter for the production and prevention of existential risk, and whether there are options for making science and technology less risky that are being ignored. In particular, it focuses on the ways in which scientific governance is conventionally framed within the global risk community—as something extrinsic to be regulated, with either greater top-down control to promote safety, or greater libertarian freedom to promote innovation—and highlights the potential shortcomings of this approach. As an alternative, it proposes considering scientific governance more broadly as a constellation of socio-technical processes that shape and steer technology, and argues that research culture and self-governance within science need to be seen as central to how science and technology developments play out. This alternative framing highlights many new levers at our disposal for ensuring the safe and beneficial development of technologies; overlooking such possibilities could mean robbing humanity of some of our most effective tools for mitigating global risk. The chapter ends by proposing some areas where scientists and the global risk community might together hope to influence those existing modalities, such as via education, professional bodies, two-way policy engagement, collective action, and public outreach.

Chapter 4, ‘Beyond “Error and Terror”: Global Justice and Global Catastrophic Risk’ by Natalie Jones, serves as an invitation to consider global political, economic, social, and legal systems (particularly in relation to global justice and inequality) when studying and addressing global catastrophic risks. While the previous chapter showed how our understanding of risk was hampered by too great a focus on top-down approaches to mitigation and governance, this chapter highlights a no less important blind spot in much of the thinking about global risk: the tendency to focus more on individuals and institutions as agents of risk, and neglect the importance of systems of extraction, oppression, marginalisation, and corruption. While individuals and institutions are undoubtedly important drivers of global risk, studying global risk while ignoring global injustice can distort our understanding of risk. In contrast, applying a global justice lens to our existing strategies helps us see the nature of risks more clearly. Furthermore, as the case of climate change shows, strategies to reduce global catastrophic risk will be more effective if they take account of global justice considerations. It follows that policies to reduce global catastrophic risk can, and should, be designed to simultaneously mitigate risk and achieve justice.

Chapter 5, ‘We Have to Include Everyone: Enabling Humanity to Reduce Existential Risk’ by Sheri Wells-Jensen and SJ Beard, argues for the importance of considering diversity and inclusion as integral both to a flourishing science of global risk and to efforts to mitigate such risks. Given the scale and importance of global risk, it can be tempting to believe that only the most able would be able to understand and mitigate it effectively. However, the chapter argues that such thinking is clearly mistaken. Far from being merely vulnerable and unable to help, disabled people and others who are marginalised or excluded are the real experts in vulnerability, adaptation, and resilience, and have a lot to contribute to studying and managing risks, even on the global scale. Moreover, diversity and inclusion are vital sources of creativity and insight. This chapter explores the limitations and costs of standard narratives around diversity and inclusion in global risk, and shows how the global risk community would benefit from championing inclusive futures and paying more attention to disabled people and other marginalised groups. It focuses on the benefits of diversity and inclusion across three case studies (foresight and horizon scanning, space colonisation, and bioethics) to highlight this point, while also considering the wider costs of marginalisation and exclusion to society as a whole.

Moving on to specific drivers of risk, Chapter 6, ‘Natural Global Catastrophic Risks’ by Lara Mani, Doug Erwin, and Lindley Johnson, considers risks from ‘natural’ disasters. It explores dichotomies that are often neglected and left on the peripheries of discussions about such risks, which fall somewhere between hazard and vulnerability. The chapter shares a similar perspective to Chapter 2: while the historical and geological record of such disasters can be used to study their impact, we need to consider more than just the rate of disasters as exogenous events and also take account of the factors that make societies and species more or less vulnerable to them if we are to understand the evolving nature of this risk. The chapter argues that, while humanity has lived with global-scale natural threats (such as large magnitude volcanic eruptions and near-Earth object impacts) throughout history, the risk of such events is currently growing due to the increasing scale and complexity of human society. Thus, while the probability of potentially catastrophic natural hazards of this kind may be relatively low, it is certainly not negligible, and the societal and economic impacts are potentially vast; however, this type of hazard is frequently underestimated in the literature. The chapter surveys the state of current thinking around extreme natural risks and asks what can be learned from efforts to reduce some of these risks (such as Planetary Defense against near-Earth objects) for improving our resilience to natural global-scale catastrophes more generally.

Chapter 7, ‘Ecological Breakdown and Human Extinction’ by Luke Kemp, explores the catastrophic potential of anthropogenic environmental risks, and (in particular) climate change. The chapter considers both the scale and nature of global risk from climate change and the arguments for prioritising climate mitigation as a way of reducing global risk. Reviewing the available evidence, it notes the weaknesses of certain arguments on both sides: that climate change is, and that it is not, a risk with global catastrophic and existential potential. However, while there are many plausible reasons to be concerned about the catastrophic potential of climate change, it finds that attempts to argue that we should not consider climate change as being of the same severity as technological global risks often depend upon spurious notions of what a climate-induced catastrophe might involve. It then considers the appropriateness of using existing discourse around existential and global catastrophic risk to talk about climate change in the first place, given that this often frames risks in terms of their potential impact on long-term economic and technological growth, which is a questionable goal and one that (in many ways) assumes that possible ecological limits to human growth should be disregarded out of hand. Finally, however, in considering the case for climate mitigation as a global risk reduction strategy, the chapter makes the case that there is compelling evidence in favour of this, not only due to the direct impacts of climate mitigation but also the substantial co-benefits to human health and flourishing that many policies aimed at climate mitigation might provide.
However, it also argues that many of the strategies proposed for climate mitigation at the global scale are problematic because they misidentify the root causes of the problem in identifying climate change as a ‘tragedy of the commons’ when it is actually a ‘tragedy of the elite’ where, as previously discussed in Chapter 4, systems of global injustice are empowering a small number of agents with the capacity to do large amounts of harm and also incentivising them to do so.

Chapter 8, ‘Biosecurity, Biosafety, and Dual Use: Will Humanity Minimise Potential Harms in the Age of Biotechnology?’ by Kelsey Lane Warmbrod, Kobi Leins, and Nancy Connell, discusses a number of recent advances in the life sciences that may serve to contribute to the current level of global risk (both positively and negatively), their convergence with developments in many other fields (such as AI and nanotech), and the harms that might be caused by their misuse. The chapter surveys recent developments across genomics, gain of function experiments, gene drives, synthetic biology, and AI-enabled biological research. It contrasts the rapid development and interdisciplinarity of these fields with the slow-moving pace of efforts to govern their use, often relying on the now decades-old Biological Weapons Convention. It thus emphasises the need for new approaches that fully embrace the power and flexibility of bottom-up science governance, as described in Chapter 3, and also the empowerment of communities who are often disproportionately affected by the quest for new technologies, as advocated for in Chapters 4 and 5. Grappling with the multiple potentialities of new technologies requires careful thought, but it also requires researchers and practitioners to work collectively to address the challenges we currently face as biology marches towards a global bioeconomy. This is an achievable goal but will require action to be taken soon. Urgent actions include creating and conducting a robust risk assessment methodology and implementing appropriate biosafety measures; strengthening frameworks for obtaining and enforcing consent for research, including at the community level; and requiring higher standards of interpretability for algorithms and big datasets used in biological research and the development of biotechnologies (a problem also discussed in the next chapter).

Chapter 9, ‘From Turing’s Speculations to an Academic Discipline: A History of AI Existential Safety’ by John Burden, Sam Clarke, and Jess Whittlestone describes the development of thought related to artificial intelligence (AI) and existential risk. These risks are more likely to be realised by future AI systems with greater capabilities and generality than current systems; however, the field of AI is moving extremely swiftly and AI systems are becoming more ubiquitous in the daily lives of people around the world. Great care must, therefore, be taken to ensure these systems are safe. The chapter describes how the field of existential AI safety has matured from purely speculative concerns in the 20th century into a rigorous academic discipline of technical expertise. In particular, it focuses on the problem of alignment. An AI system is considered aligned if it behaves according to the values of a particular entity, such as a person, an institution, or humanity as a whole. There are many ways in which AI systems may become misaligned, or in which the need for different alignments may pull them in conflicting directions, and the problem could thus arise in a wide variety of contexts, with different but no less serious existential consequences in each of these. Just as important as our evolving understanding of the problems of AI safety, however, has been the development of new approaches to achieving AI safety and ensuring meaningful, and beneficial, human control over AI systems. Furthermore, despite the significant progress that has been made, the field remains surprisingly small, and its recent history only serves to highlight the many prospects for further development in the near future.

Finally, Chapter 10, ‘Military Artificial Intelligence as a Contributor to Global Catastrophic Risk’ by Matthijs M. Maas, Kayla Lucero-Matteucci, and Di Cooke, focuses specifically on the uses of AI to increase humanity’s destructive capabilities within the military context. After reviewing past military GCR research and recent pertinent advancements in military AI, the chapter focuses on lethal autonomous weapons systems (LAWS) and the intersection between AI and nuclear weapons, both of which have received the most attention thus far. Regarding LAWS, it argues that, while the destructive capabilities of this technology are increasing, it is unlikely these will constitute a global catastrophic or existential risk in the near future, based primarily on current and anticipated costs and production trajectories. On the other hand, it argues that the application of AI to nuclear weapons has a significantly higher GCR potential. The chapter situates this danger within existing debates over when, where, and why nuclear weapons could lead to a GCR, as well as the recent geopolitical context, by identifying relevant converging global trends that may be raising the risks of nuclear warfare. The chapter then turns its focus to the existing research on specific risks arising at the intersection of nuclear weapons and AI, and outlines six hypothetical areas where the use of AI systems in, around, or against nuclear weapons could increase the likelihood of nuclear escalation and result in global catastrophes. These areas include the automation of nuclear decision-making, the pressurisation of human decision-making, AI deployment in systems peripheral to nuclear weapons, AI as a threat to information security, AI as a threat to nuclear integrity, and broader impacts on strategic stability. The chapter concludes with suggestions for future directions of study, and sets the stage for a research agenda that can gain a more comprehensive and multidisciplinary understanding of the potential risks from military AI, both today and in the future.

While nowhere near fully comprehensive in scope, these chapters provide a snapshot of a rapidly evolving field: the scientific study of global risk as a phenomenon of urgent but tractable problems with global importance.16 It is a field that, although undergoing significant growth in recent years, remains surprisingly small and neglected. Unfortunately, it is also a field that is already showing signs of disciplinary fracture (for instance, between researchers working primarily on environmental risks and those working primarily on technological risks) that desperately needs to be understood and addressed. This book represents the first interdisciplinary survey of the topic to come out since Nick Bostrom and Milan Ćirković’s Global Catastrophic Risks in 2008,17 and its intention is precisely to provide both a survey and prospectus for this science as a vibrant, open, and rigorous field of academic research. Each of these chapters presents a clear call for action and has been specially written with an educated lay audience in mind, although we submit that, given the range and nature of material being presented, they may not always be for the faint of heart. Nevertheless, we believe that, in this era where no one can ignore the threats that endanger all humanity, it is imperative that this science should be available to all, and that everyone should ask themselves: what is my role and how can I contribute to bringing the era of global risk to a close and moving towards an era of global safety?

Acknowledgements

The editors of this volume would like to thank Clare Arnstein, Esmé Booth, Laura Elmer, Alice Jondorf, Jess Bland, Seán Ó hÉigeartaigh, and the staff at Open Book Publishers for invaluable assistance in preparing this volume. Many of the chapters here grew out of panel discussions from the 2020 Cambridge Conference on Catastrophic Risk at the Centre for the Study of Existential Risk and we would also like to thank Lara Mani, Catherine Rhodes, and Annie Bacon for helping to organise this as well as the other panellists and participants for their contributions. This publication was made possible through the support of a grant from Templeton World Charity Foundation, Inc. The opinions expressed in this publication are those of the author(s) and do not necessarily reflect the views of Templeton World Charity Foundation, Inc.

1 World Economic Forum, Global Risks 2006 (2006).

2 World Economic Forum, The Global Risks Report 2022: 17th Edition (2022).

3 Cremer, Carla Zoe and Luke Kemp, ‘Democratising risk: In search of a methodology to study existential risk’, arXiv preprint arXiv:2201.11214 (2021); Sundaram, Lalitha S., Matthijs M. Maas and S.J. Beard, ‘From Evaluation to Action: Ethics, Epistemology and Extreme Technological Risk’ in Catherine Rhodes (ed), Managing Extreme Technological Risk. World Scientific Publishing (forthcoming).

4 Broska, Lisa Hanna, Witold-Roger Poganietz, and Stefan Vögele, ‘Extreme events defined—A conceptual discussion applying a complex systems approach’, Futures, 115 (2020), p.102490.

5 Schoch-Spana, Monica, Anita Cicero, Amesh Adalja, Gigi Gronvall, Tara Kirk Sell, Diane Meyer, Jennifer B. Nuzzo, et al. ‘Global catastrophic biological risks: Toward a working definition’, Health Security, 15(4) (2017), pp.323-328.

6 For instance: Cotton-Barratt, Owen, Sebastian Farquhar, John Halstead, Stefan Schubert, and Andrew Snyder-Beattie, Global Catastrophic Risks 2016. Global Challenges Foundation (2016) use a 10% reduction; Kemp, Luke, Chi Xu, Joanna Depledge, Kristie L. Ebi, Goodwin Gibbins, Timothy A. Kohler, Johan Rockström et al. ‘Climate Endgame: Exploring catastrophic climate change scenarios’, Proceedings of the National Academy of Sciences, 119(34) (2022): e2108146119 use a 25% reduction (while referring to risks involving a 10% reduction as ‘Decimation Risks’), and Maas, Lucero-Matteucci, and Cooke (this volume) use a threshold of 1 million fatalities.

7 Avin, Shahar, Bonnie C. Wintle, Julius Weitzdörfer, Seán S. Ó hÉigeartaigh, William J. Sutherland, and Martin J. Rees, ‘Classifying global catastrophic risk’, Futures, 102 (2018), pp.20-26.

8 Kemp et al. (2022).

9 Bostrom, Nick, ‘Existential risks: Analyzing human extinction scenarios and related hazards’, Journal of Evolution and Technology, 9 (2002).

10 Cremer and Kemp (2021).

11 Bostrom, Nick, ‘Existential risk prevention as global priority’, Global Policy, 4(1) (2013), pp.15–31.

12 Parfit, Derek, Reasons and Persons. Oxford University Press (1984); Beard, S.J. and Patrick Kaczmarek, ‘On Theory X and what matters most’, Ethics and Existence: The Legacy of Derek Parfit (2021), p.358.

13 Scheffler, Samuel, Why Worry About Future Generations? Oxford University Press (2018).

14 Finneron-Burns, Elizabeth, ‘What’s wrong with human extinction?’, Canadian Journal of Philosophy, 47(2–3) (2017), pp.327–43; Beard, S.J. and Patrick Kaczmarek, ‘On the wrongness of human extinction’, Argumenta, 5 (2019), pp.85–97.

15 Yudkowsky, Eliezer, ‘Cognitive biases potentially affecting judgement of global risks’, Global Catastrophic Risks, 1(86) (2008), p.13.

16 One work that is foundational for both this science in general and this volume in particular was published by Martin Rees in 2003. It was originally intended that this work should be titled Our Final Century?; however, its publishers sought to outdo one another in making this sound more alarmist, first removing the question mark for the UK edition and then substituting ‘Hour’ for ‘Century’ for the American market. For this reason, the international group of authors behind these chapters cite this important work as both Rees, M., Our Final Century: Will Civilisation Survive the Twenty-First Century? Random House (2003) and Rees, M., Our Final Hour: A Scientist’s Warning. Basic Books (2003).

17 Bostrom, Nick and Milan M. Ćirković (eds), Global Catastrophic Risks. OUP (2008).

1. A Brief History of Existential Risk and the People Who Worked to Mitigate It

SJ Beard and Rachel Bronson

© 2023 SJ Beard and Rachel Bronson, CC BY-NC 4.0 https://doi.org/10.11647/OBP.0336.01

Despite garnering significant academic, political, and public attention, the existential risks posed by nuclear weapons, environmental breakdown, and disruptive technologies continue to threaten human survival, and we may now be in a more perilous position than at any other time in history. For over 75 years we have been dragooned into unacceptable gambles by political and technological forces, and have been lucky to survive thus far. However, this story has not just been about luck. Since the emergence of such risks, curious, compassionate, and courageous people (including many scientists) have sought to understand these terrifying forces, bring them to wider public attention, and work with every tool at their disposal to prevent human extinction and the collapse of civilisation. In this chapter, we seek to revisit the ups and downs of this perilous journey, using as our guide the shifting time of the Doomsday Clock, and to briefly tell the story of some of the people and organisations who sought to guide us safely through it. Understanding this history is vital, not only because these risks remain pressing, but also because it offers an opportunity for those currently working to reduce existential risk (and especially those in the nascent academic field of Existential Risk Studies) to learn from the successes and failures of the past. In particular, we show the importance of reinforcing key messages about risks and how to manage them, modelling extreme scenarios to understand them better, managing the pace of scientific research, and placing its findings in the public domain. If we can learn these lessons and apply them rigorously, then history shows we can turn back the hands of the Doomsday Clock, and ensure that our future is no longer a hostage to our fortune.

The origins of our understanding of Existential Risk

People have speculated about ‘the end of the world’ since the dawn of history; indeed, the oldest story that has been passed down may well be the Mesopotamian deluge myth, which tells of a flood that wiped out all but a few humans, and is familiar to most in the west through the biblical story of Noah.1 However, such eschatological speculation has largely been bound up with religious beliefs and invariably ends with humanity continuing on Earth, in the afterlife, or via an eternal cosmic cycle of rebirth. Furthermore, as Martin Rees argued in his book Our Final Century:

Throughout most of human history the worst disasters have been inflicted by environmental forces—floods, earthquakes, volcanos, and hurricanes—and by pestilence. But the greatest catastrophes of the twentieth century were directly induced by human agency.

Virtually everyone alive today is familiar (to some degree) with the anthropogenic risks that threaten global disaster, like nuclear war, climate change, and risks from disruptive technologies such as artificial intelligence (AI) and biotech. These risks are both naturalistic—in the sense that we understand how they could happen within the laws of nature—and absolute, in the sense that there may be no reprieve for humanity and no afterlife.

In fact, the very idea that humanity was vulnerable to going extinct in this way may be a relatively recent invention. It arose from the scientific discovery of prehistoric fossils and its implication of a ‘deep past’ during which evidence of extinctions was incontrovertible, our growing awareness that there is no great difference in kind between humans and other species, the spread of secular atheism, and the acceleration of social, scientific, and technological change.2

Perhaps the first group to fully express this change in thinking were authors of speculative fiction. For instance, Mary Shelley, one of the founders of science fiction, wrote The Last Man in 1826,3 which tells the story of Lionel, who witnesses the death of all other humans in the last few decades of the 21st century from a series of apocalyptic events, most notably a worldwide plague. The first mention of human extinction being caused by self-improving machines comes from Samuel Butler’s 1863 Darwin Among the Machines, later reprinted as part of his novel Erewhon.4, 5 Similarly, the first discussion of the existential risk posed by atomic weaponry is arguably found in H.G. Wells’s The World Set Free,6 while more recently, sci-fi authors have been among the first to explore how humanity may bring about its own demise through our harmful influence on planet Earth.7

Such writers not only captured the popular imagination but also directly influenced academic research. H.G. Wells’s 1901 book Anticipations of the Reaction of Mechanical and Scientific Progress Upon Human Life and Thought,8 for instance, is a foundational text for the academic discipline of Futures Studies, a subject of vital importance to our understanding of existential risk.9 Wells also wrote at least two non-fiction, if not entirely serious, essays on the risk of human extinction, ‘On Extinction’ and ‘The Extinction of Man’,10 while the first book-length non-fiction work to classify and explore the entire range of possible existential catastrophes was Isaac Asimov’s A Choice of Catastrophes.11

Yet, while they have a vital role in raising awareness and exploring different futures, science-fiction authors are often the first to argue for the importance of the hard science on which they draw. Thus, the true foundation of the study of existential risk belongs to a group of pioneering scientists and philosophers working during and shortly after World War II, who became concerned about several overlapping trends and developments with the potential to significantly threaten humanity’s future.

The threat of nuclear weapons

Worries about the risk of a global catastrophe first gained major scientific attention after World War II, with widespread concern about nuclear weapons and their potential to wipe humanity off the face of the Earth. The speed and violence with which nuclear technology evolved was breathtaking, even to those closely involved in its development.

As early as 1939, world-renowned scientists Albert Einstein and Leo Szilard penned a letter to US President Franklin D. Roosevelt about a breakthrough in nuclear technology that was so powerful, and could have such tremendous battlefield consequences, that a single nuclear bomb, “carried by boat and exploded in a port, might very well destroy the whole port”, a possibility seen as too significant for the President to ignore. A mere six years later, one such bomb was used to destroy an entire city and its population, followed by another one. A few years after that, nuclear arsenals were capable of destroying civilisation as we know it.

The first scientific concern that nuclear weapons might have the potential to end humanity as a whole appears to have come from scientists involved in the first nuclear tests, and related to whether they might accidentally ignite the Earth’s atmosphere, although these concerns were quickly dismissed.12

However, many who worked on the Manhattan Project continued to have severe reservations about the power of the weapons they helped to produce. After successfully performing the first controlled nuclear chain reaction at the University of Chicago in 1942, confirming its potential to release energy, the team of scientists working on the Manhattan Project dispersed, with some going off to Los Alamos and other research laboratories to develop nuclear weapons, while others stayed in Chicago to undertake their own research. Many of those who stayed were themselves immigrants to the United States and were keenly aware of the intertwining of science and politics. They, with the help of colleagues, began actively organising and engaging on how to keep the future of nuclear technology safe. For instance, they helped advance the Franck report in June 1945 that foreshadowed a dangerous and costly nuclear arms race, and argued against a surprise nuclear attack on Japan. This group went on to establish the Bulletin of the Atomic Scientists of Chicago (The Bulletin), whose first issue was published a mere four months after the atomic bombs were dropped on Hiroshima and Nagasaki. With support from the University of Chicago’s President, Robert Hutchins, and colleagues in international law, political science, and other related fields, they helped kick off and support a global citizen-scientist movement that had a powerful effect on the creation of the global nuclear order.13 Many of these same individuals were also instrumental in establishing the Federation of Atomic (now American) Scientists, which was located in Washington DC to ensure proximity to key decision-makers whose views they hoped to sway. In contrast, The Bulletin’s headquarters in Chicago focused more on engaging and educating the public about the political and ethical challenges presented by the advancement of science, which they anticipated would only accelerate in the years to come. 
The founders believed that public pressure was key to political responsibility, and education was the best channel to ensure it.14

Two years after its founding, The Bulletin published Martyl Langsdorf’s now iconic ‘Doomsday Clock’ to serve as the first cover of its new magazine. Over time, the Clock became globally recognised, in part because of its simplicity and bluntness. Married to a Manhattan Project scientist, Martyl was an artist who understood the urgency and desperation her husband and colleagues felt about managing nuclear technology. She created the Clock to convey their deep concern, as well as to draw attention to their belief that responsible citizens could prevent catastrophe by mobilising and engaging. The message of the Clock is clear: humans can prevent this clock from striking midnight. In that, it provides both a challenge and some hope.

In 1949 the USSR tested its first nuclear weapon, and in reaction to this, The Bulletin’s editor moved the hands of the Clock from seven to three minutes to midnight. In doing so, he activated the Clock, turning it from a static to a dynamic metaphor. The Clock would evolve into a symbol that, according to Kennette Benedict, former Executive Director of The Bulletin:

[warns] the public about how close we are to destroying our world with dangerous technologies of our own making. It is a metaphor, a reminder of the perils we must address if we are to survive on the planet.15

In 1953 the Clock moved to two minutes to midnight, after the United States and USSR detonated the first thermonuclear weapons (H-bombs). This was the latest the Clock was ever set in the 20th century (the furthest it has been from midnight was 17 minutes in 1991, following the end of the Cold War). The Doomsday Clock, and its now annual setting, remains perhaps the most widely recognised and oft-cited symbol of our existential predicament, as well as the most easily understood representation of our attempts to come to terms with it.

Another factor that increased both public and scientific concern about nuclear weapons was growing awareness of the risk that radioactive particles could contaminate the environment, with catastrophic effects. This theory was promoted by Hermann Muller, who discovered that radiation can induce genetic mutations and received the first post-war Nobel Prize in physiology for this work. Muller—along with Einstein, Bertrand Russell, and other prominent scientists of the day—later wrote the Russell-Einstein Manifesto in 1955, according to which:

No one knows how widely such lethal radioactive particles might be diffused, but the best authorities are unanimous in saying that a war with H-bombs might possibly put an end to the human race… sudden only for a minority, but for the majority a slow torture of disease and disintegration.16

An important consequence of this manifesto was the establishment of the Pugwash Conferences on Science and World Affairs, which were initiated in 1957 by Russell and Joseph Rotblat, a physicist who also worked on the Manhattan Project. The Pugwash Conferences were vital for establishing communication channels at a time when Cold War tensions were at their highest, and the Conferences undertook vital background work to establish key non-proliferation treaties such as the 1963 Partial Test Ban Treaty, the 1968 Non-Proliferation Treaty, and the 1972 Biological Weapons Convention. Joseph Rotblat and the Pugwash Conferences were awarded the 1995 Nobel Peace Prize for their “efforts to diminish the part played by nuclear arms in international politics and, in the longer run, to eliminate such arms”.17 To this day, Pugwash remains the existential risk organisation with the widest global reach.18

Alongside these efforts of scientists, popular protest and resistance to the development, creation, and use of nuclear weapons was also vitally important. This resistance has taken many forms. For instance, in the UK, Bertrand Russell helped to establish both the Campaign for Nuclear Disarmament, a large and conventional pressure group, and the Committee of 100, a group set up specifically to perform acts of civil disobedience. He explained the need for both groups as follows:

The Campaign for Nuclear Disarmament has done and is doing valuable and very successful work to make known the facts, but the press is becoming used to its doings and beginning to doubt their news value. It has therefore seemed to some of us necessary to supplement its campaign by such actions as the press is sure to report. There is another, and perhaps more important reason for the practice of civil disobedience in this time of utmost peril. There is a very widespread feeling that however bad their policies may be, there is nothing that private people can do about it. This is a complete mistake. If all those who disapprove of government policy were to join massive demonstrations of civil disobedience they could render government folly impossible and compel the so-called statesmen to acquiesce in measures that would make human survival possible.19

In founding this group, Russell established a justification for civil disobedience in the face of existential risk that remains to this day, most prominently in the Extinction Rebellion protests.

Russell was far from the only person to lead such a movement. Martin Luther King Jr and other civil rights leaders saw nuclear disarmament as essential and inextricably linked to the quest for social justice and racial equality. Many shared the view of Langston Hughes that American racism had played an important role in Harry Truman’s decision to use nuclear weapons aggressively against Japanese people, and feared that they would be used selectively against non-whites in future.20 There was also the argument that nuclear weapons were simply the latest, and most dangerous, manifestation of oppressive and destructive attitudes that marginalised groups had struggled against for centuries. As Dr King put it in his very final speech delivered at the Bishop Charles Mason Temple in Memphis on April 3rd 1968:

Another reason that I’m happy to live in this period is that we have been forced to a point where we’re going to have to grapple with the problems that men have been trying to grapple with through history, but the demands didn’t force them to do it. Survival demands that we grapple with them. Men, for years now, have been talking about war and peace. But now, no longer can they just talk about it. It is no longer a choice between violence and nonviolence in this world; it’s nonviolence or nonexistence.21

Also of great significance, but often overlooked, has been the resistance of indigenous peoples to nuclear colonialism. Indigenous lands and lives were often the first to be co-opted for the production of nuclear weapons: communities were displaced to make way for nuclear test sites, and their members were hired at low wages to mine and refine uranium and other materials at significant cost to their own health.22

While concerned scientists and others did much to expose the risks from nuclear weapons as they developed, the nature of these risks meant that many people, including some of those who were responsible for creating these risks, were not aware of their immediacy. For instance, the 1962 Arkhipov incident, in which a Soviet submarine commander wished to use nuclear weapons in retaliation for a perceived attack during the Cuban Missile Crisis before his junior officer overruled him, remained largely unknown until 2002.23

Policies can make, and have made, a difference in reducing risk. For instance, Robert McNamara sent a memo to President John F. Kennedy in 1963 arguing that falling production costs made it realistic to expect at least eight new nuclear powers to emerge in the next ten years, while Kennedy himself predicted that perhaps 25 nuclear weapon states would emerge by the end of the 1970s.24 Yet nuclear powers have emerged at a far slower pace. By some estimates, up to 56 states may have, at one time or another, possessed the capability to develop a nuclear weapons programme, yet the vast majority of these either chose not to engage in nuclear weapons activity or voluntarily terminated their programmes, with only ten states ever having developed nuclear weapons of their own, one of which (South Africa) has since disarmed.25 In this, and other ways, the various initiatives we describe here have helped to make our world safer, though it remains far from safe. One 2013 study estimated the odds of a nuclear war having occurred between 1945 and 2011 as 61%;26 however, in truth it is likely still too early to say what our chances really were and, indeed, we may never know.

Environmental breakdown and climate change

Concerns about risks to humanity from our own environmental impacts are nothing new. In The Epochs of Nature (1778), Georges-Louis Leclerc, the Comte de Buffon, wrote contemptuously of those who have “ravaged the land, starve it without making it fertile, destroy without building, use everything up without renewing anything”.27 In 1821, Charles Fourier wrote The Material Deterioration of the Planet, concerning humanity’s negative impacts on our environment and the harmful consequences for ourselves. While his theories do not match our modern understanding of the planetary system, his concern that “we bring the axe and destruction, and the result is landslides, the denuding of mountain-sides, and the deterioration of the climate” still rings true today.28 Similarly, Friedrich Engels noted how:

In relation to nature, as to society, the present mode of production is predominantly concerned only about the immediate, the most tangible result. Then surprise is expressed that the more remote effects of actions directed to this end turn out to be quite different, are mostly quite the opposite in character.29

In 1896 Svante Arrhenius was the first to uncover the basic principles of anthropogenic climate change. As he later explained his findings to a general audience: “any doubling of the percentage of carbon dioxide in the air would raise the temperature of the earth’s surface by 4°”, while “the slight percentage of carbonic acid in the atmosphere may, by the advances of industry, be changed to a noticeable degree in the course of a few centuries.”30

Yet prior to the mid-20th century, and the post-war ‘great acceleration’ of population, economic productivity, and environmental destruction, such concerns remained marginal. Some of the earliest general studies of the possibility of human extinction and the collapse of civilisation, including William Vogt’s Road to Survival31 and Fairfield Osborn’s Our Plundered Planet,32 linked this threat specifically to environmental harms such as soil erosion and pollution. Another pivotal early work was Rachel Carson’s Silent Spring,33 which not only echoed these earlier concerns, but increased their scientific rigour and added a crucial policy edge by raising public awareness about the danger from chemical pesticides, such as DDT. Carson was a marine biologist, nature writer, and pioneering conservationist who became concerned about the ecological effects of indiscriminate overuse of pesticides, which she called “biocides”. As she wrote in Silent Spring:

Along with the possibility of the extinction of mankind by nuclear war, the central problem of our age has ... become the contamination of man’s total environment with such substances of incredible potential for harm—substances that accumulate in the tissues of plants and animals and even penetrate the germ cells to shatter or alter the very material of heredity upon which the shape of the future depends (Carson, 1962).

A further growth in public concern about environmental harms came in 1968, when two young biologists, Paul and Anne Ehrlich, were commissioned to write The Population Bomb,34 which received widespread attention in both the academic and popular press. It warned about the catastrophic impacts of overpopulation, which the Ehrlichs claimed could lead to “hundreds of millions” of deaths from starvation. Early manifestations of this concern included the founding of organisations such as Friends of the Earth and Greenpeace (both in 1969) and the first Earth Day (April 22nd 1970), which saw 20 million Americans march in cities across the country. In 1972, the Club of Rome—an organisation of scientists, economists, diplomats, government officials, and other influencers from around the world—published The Limits to Growth,35 which developed the first global systems models to investigate the long-run impacts of trends in population, consumption, environmental degradation, and technology.36 Its conclusions were stark: “If the present growth trends in world population, industrialization, pollution, food production, and resource depletion continue unchanged, the limits to growth on this planet will be reached sometime within the next one hundred years”. By 1978, The Bulletin weighed in with a cover story asking Is Mankind Warming the Earth?—to which its author answered, “Yes.”37

One of the more optimistic pronouncements within the Limits to Growth report was that the advent of nuclear energy might mean that the atmospheric concentration of greenhouse gases may cease to rise, “one hopes before it has had any measurable ecological or climatological effect”. History has not borne this prediction out. Nor did it take long for scientists to confirm that humanity’s emission of greenhouse gases was already having deleterious climatic and ecological impacts, with grave implications for our future. Less than a decade later, James Hansen led a ground-breaking study in Science, showing that: