190,99 €

Mehr erfahren.

- Herausgeber: John Wiley & Sons

- Kategorie: Wissenschaft und neue Technologien

- Sprache: Englisch

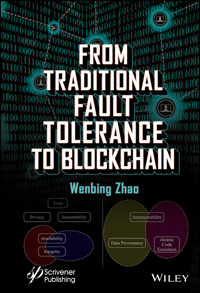

This book covers the most essential techniques for designing and building dependable distributed systems, from traditional fault tolerance to the blockchain technology. Topics include checkpointing and logging, recovery-orientated computing, replication, distributed consensus, Byzantine fault tolerance, as well as blockchain.

This book intentionally includes traditional fault tolerance techniques so that readers can appreciate better the huge benefits brought by the blockchain technology and why it has been touted as a disruptive technology, some even regard it at the same level of the Internet. This book also expresses a grave concern on using traditional consensus algorithms in blockchain because with the limited scalability of such algorithms, the primary benefits of using blockchain in the first place, such as decentralization and immutability, could be easily lost under cyberattacks.

Sie lesen das E-Book in den Legimi-Apps auf:

Seitenzahl: 729

Veröffentlichungsjahr: 2021

Ähnliche

Table of Contents

Cover

Title Page

Copyright

Dedication

List of Figures

List of Tables

Acknowledgments

Preface

References

1 Introduction

1.1 Basic Concepts and Terminologies for Dependable Computing

1.2 Means to Achieve Dependability

1.3 System Security

REFERENCES

2 Logging and Checkpointing

2.1 System Model

2.2 Checkpoint-Based Protocols

2.3 Log Based Protocols

REFERENCES

3 Recovery-Oriented Computing

3.1 System Model

3.2 Fault Detection and Localization

3.3 Microreboot

3.4 Overcoming Operator Errors

REFERENCES

4 Data and Service Replication

4.1 Service Replication

4.2 Data Replication

4.3 Optimistic Replication

4.4 CAP Theorem

REFERENCES

5 Group Communication Systems

5.1 System Model

5.2 Sequencer Based Group Communication System

5.3 Sender Based Group Communication System

5.4 Vector Clock Based Group Communication System

REFERENCES

6 Consensus and the Paxos Algorithms

6.1 The Consensus Problem

6.2 The Paxos Algorithm

6.3 Multi-Paxos

6.4 Dynamic Paxos

6.5 Fast Paxos

6.6 Implementations of the Paxos Family Algorithms

REFERENCES

7 Byzantine Fault Tolerance

7.1 The Byzantine Generals Problem

7.2 Practical Byzantine Fault Tolerance

7.3 Fast Byzantine Agreement

7.4 Speculative Byzantine Fault Tolerance

REFERENCES

8 Cryptocurrency and Blockchain

8.1 History of Cryptocurrency

8.2 Bitcoin

8.3 Ethereum

8.4 Attacks on Blockchain

References

9 Consensus Algorithms for Blockchain

9.1 Model on Blockchain Consensus

9.2 Proof of Work

9.3 Proof of Resources

9.4 Virtual Mining

References

10 Blockchain Applications

10.1 The Value of Blockchain

10.2 Blockchain-Enabled Cyber-Physical Systems

10.3 On Blockchain Throughput

10.4 A Critical Look on Blockchain from Economy Perspective

References

Index

End User License Agreement

List of Illustrations

Chapter 1

Figure 1.1 An example of a chain of threats with two levels of recursion.

Figure 1.2 The rollback recovery is enabled by periodically taking checkpoints a...

Figure 1.3 With redundant instances in the system, the failure of a replica in s...

Figure 1.4 Main types of assets in a distributed system.

Chapter 2

Figure 2.1 An example distributed system.

Figure 2.2 Consistent and inconsistent global state examples.

Figure 2.3 An example of the domino effect in recovery with uncoordinated checkp...

Figure 2.4 Finite state machine specification for the coordinator in the Tamir a...

Figure 2.5 Finite state machine specification for the participant in the Tamir a...

Figure 2.6 Normal operation of the Tamir and Sequin checkpointing protocol in an...

Figure 2.7 Finite state machine specification for the Chandy and Lamport distrib...

Figure 2.8 Normal operation of the Chandy and Lamport global snapshot protocol i...

Figure 2.9 A comparison of the channel state definition between (a) the Chandy a...

Figure 2.10 Example state intervals.

Figure 2.11 An example for pessimistic logging.

Figure 2.12 Transport level (a) and application level (b) reliable messaging.

Figure 2.13 Optimization of pessimistic logging: (a) concurrent message logging ...

Figure 2.14 Probability density function of the logging latency.

Figure 2.15 A summary of the mean logging latency and mean end-to-end latency un...

Figure 2.16 Probability density function of the end-to-end latency.

Figure 2.17 Normal operation of the sender-based logging protocol.

Figure 2.18 An example normal operation of the sender-based logging protocol.

Figure 2.19 Two concurrent failures could result in the loss of determinant info...

Chapter 3

Figure 3.1 The three-tier architecture.

Figure 3.2 The Java EE architecture.

Figure 3.3 An example runtime path of an end-user request.

Figure 3.4 Component class and component instances.

Figure 3.5 The chi-square cumulative distribution function for degree of freedom...

Figure 3.6 The path shape of the example runtime path shown in Figure 3.3.

Figure 3.7 Component class and component instances.

Figure 3.8 Dependency templates for nodes, processes, network paths, and the nei...

Figure 3.9 A partial dependency graph for an example system.

Figure 3.10 The error function.

Figure 3.11 A hypothetical dependency graph with abnormality for each component ...

Figure 3.12 The components that form a cycle in the f-map are reduced to a singl...

Figure 3.13 The architecture of an Operator Undo framework [5, 6].

Chapter 4

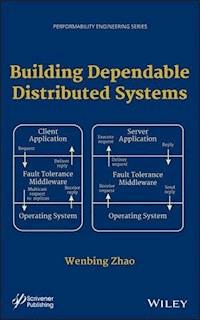

Figure 4.1 The replication algorithm is typically implemented in a fault toleran...

Figure 4.2 Active replication, without (top) and with (bottom) voting at the cli...

Figure 4.3 Passive replication.

Figure 4.4 Semi-active replication.

Figure 4.5 A write-all algorithm for data replication.

Figure 4.6 The problem of the write-all-available algorithm for data replication...

Figure 4.7 Preventing a transaction from accessing a not-fully-recovered replica...

Figure 4.8 An example run of the quorum consensus algorithm on a single data ite...

Figure 4.9 Basic steps for optimistic data replication for an operation-transfer...

Figure 4.10 An example run of a system with three sites that uses Lamport clocks...

Figure 4.11 An example run of a system with three sites that uses vector clocks.

Figure 4.12 An example for the determination of the new version vector value aft...

Figure 4.13 An example operation propagation using vector clocks in a system wit...

Figure 4.14 An example for operation propagation using timestamp matrices in a s...

Figure 4.15 Update commit using ack vectors in a system with three replicas.

Figure 4.16 Update commit using timestamp matrices in a system with three replic...

Figure 4.17 An illustration of the CAP theorem.

Figure 4.18 Partition mode and partition recovery.

Chapter 5

Figure 5.1 Examples of systems that ensure uniform total ordering and nonuniform...

Figure 5.2 In the sequencer based approach, a general system is structured into ...

Figure 5.3 An example rotation sequencer based system in normal operation.

Figure 5.4 Normal operation of the membership view change protocol.

Figure 5.5 Membership change scenario: competing originators.

Figure 5.6 Membership change scenario: premature timeout.

Figure 5.7 Membership change scenario: temporary network partitioning.

Figure 5.8 A simplified finite state machine specification for Totem.

Figure 5.9 A successful run of the Totem Membership Protocol.

Figure 5.10 Membership changes due to a premature timeout by

N

2.

Figure 5.11 Messages sent before

N

1 fails in an example scenario.

Figure 5.12 Messages delivered during recovery for the example scenario.

Figure 5.13 Message sent before the network partitions into two groups, one with...

Figure 5.14 Messages delivered during recovery in the two different partitions f...

Figure 5.15 Causal ordering using vector clocks.

Chapter 6

Figure 6.1 Normal operation of the Paxos algorithm.

Figure 6.2 A deadlock scenario with two competing proposers in the Paxos algorit...

Figure 6.3 If the system has already chosen a value, the safety property for con...

Figure 6.4 If two competing proposers propose concurrently, the system might end...

Figure 6.5 With the promise-not-to-accept-older-proposal requirement in place, e...

Figure 6.6 Normal operation of Multi-Paxos in a client-server system with 3 serv...

Figure 6.7 View change algorithm for Multi-Paxos.

Figure 6.8 With reconfigurations, a group of 7 replicas (initially 5 active and ...

Figure 6.9 The Primary and secondary quorums formation for a system with 3 main ...

Figure 6.10 The Primary and secondary quorums formation as the system reconfigur...

Figure 6.11 Normal operation of Cheap Paxos in a system with 3 main replicas and...

Figure 6.12 The Primary and secondary quorums formation for a system with 3 main...

Figure 6.13 Normal operation of (Multi-) Fast Paxos in a client-server system.

Figure 6.14 Collision recovery in an example system.

Figure 6.15 Expansion of the membership by adding two replicas in method 1.

Figure 6.16 Expansion of the membership by adding two replicas in method 2.

Figure 6.17 Reduction of the membership by removing two replicas one after anoth...

Chapter 7

Figure 7.1 Two scenarios that highlight why it is impossible to use 3 generals t...

Figure 7.2 The message flow and the basic steps of the

OM

(1) algorithms.

Figure 7.3 The message flow and the basic steps of the

OM

(2) algorithms.

Figure 7.4 Normal operation of the PBFT algorithm.

Figure 7.5 PBFT view change protocol.

Figure 7.6 A worst case scenario for tentative execution.

Figure 7.7 Normal operation of Fast Byzantine fault tolerance.

Figure 7.8 Zyzzyva agreement protocol (case 1).

Figure 7.9 Zyzzyva agreement protocol (case 2).

Figure 7.10 A corner case in view change in Zyzzyva.

Chapter 8

Figure 8.1 Bitcoin nodes.

Figure 8.2 The relationship between private key, public key, and address in Bitc...

Figure 8.3 Bitcoin transaction structure.

Figure 8.4 An example transaction chain in Bitcoin.

Figure 8.5 Bitcoin transactions per block data since its inception in 2009 throu...

Figure 8.6 Bitcoin block structure.

Figure 8.7 An issue with Bitcoin Merkle tree computation where different trees c...

Figure 8.8 Bitcoin blockchain consensus and conflict resolution.

Figure 8.9 Structure of Ethereum transaction.

Figure 8.10 State transition via transaction in Bitcoin and Ethereum.

Figure 8.11 Ethereum smart contract structure.

Figure 8.12 An example transaction receipt in the JSON format. The content is co...

Figure 8.13 Ethereum block structure.

Figure 8.14 The annotated source code on verification of an ommer block.

Figure 8.15 An example on what kind of stale blocks may be chosen as an ommer bl...

Figure 8.16 The annotated source code on the block reward scheme in Ethereum.

Figure 8.17 The cache size vs. the epoch number.

Figure 8.18 The dataset size vs. the epoch number.

Figure 8.19 The Ethash algorithm.

Figure 8.20 The double-spending attack steps.

Chapter 9

Figure 9.1 A model for public blockchain consensus.

Figure 9.2 Main loop used by a mining node to compete in the creation of a new b...

Figure 9.3 Major steps in the CreateNewBlock function in PeerCoin PoS.

Figure 9.4 Major steps in the CreateCoinStake function in PeerCoin PoS.

Figure 9.5 Major steps in the CheckStakeKernelHash function in PeerCoin PoS.

Figure 9.6 Information included in the data stream for computing PoS hash.

Figure 9.7 Major steps in PoET consensus.

Chapter 10

Figure 10.1 Main benefits of blockchain for applications.

Figure 10.2 A model for cyber-physical systems.

Figure 10.3 Blockchain-enabled CPS applications.

Figure 10.4 Key operations and their relationship with the CPS applications and ...

Figure 10.5 Basic CPS operations with respect to the latency and throughput requ...

Figure 10.6 Stale block rate for different block sizes and block intervals.

Figure 10.7 Throughput for different combinations of block sizes and block inter...

Figure 10.8 Payment channel operation.

Figure 10.9 Two level logging for sensing data with blockchain.

Figure 10.10 The format for the raw data (together with the aggregated data tupl...

Figure 10.11 Summary of the token paradigm.

Figure 10.12 A classification of blockchain applications based on token usage.

Figure 10.13 The impossibility trinity hypothesis.

List of Tables

Chapter 7

Table 7.1 Messages received and final decisions in two cases for OM(1,4).

Table 7.2 Messages received and step (3) calculation in two cases for instances ...

Table 7.3 Messages received and step (3) calculation in two cases for instances ...

Table 7.4 Messages received and step (3) calculation in two cases for instances ...

Table 7.5 Messages received and step (3) calculation in two cases for instances ...

Table 7.6 Messages received and step (3) calculation in two cases for instances ...

Table 7.7 Final decision made at each lieutenant in step (3) of

OM

(2).

Chapter 10

Table 10.1 Blockchain-enabled IoT-based applications.

Table 10.2 Blockchain-enabled supply chain applications.

Table 10.3 Blockchain-enabled manufacturing applications.

Table 10.4 Blockchain-enabled automobile production.

Table 10.5 Blockchain-enabled energy systems.

Table 10.6 Blockchain-enabled healthcare systems.

Table 10.7 Blockchain-enabled smart city.

Table 10.8 Blockchain-enabled workplace.

Table 10.9 General discussions on blockchain-enabled CPS applications.

Guide

Cover

Table of Contents

Title Page

Copyright

Dedication

List of Figures

List of Tables

Acknowledgments

Preface

References

Begin Reading

Index

End User License Agreement

Pages

vii

ii

iii

iv

v

xiii

xiv

xv

xvi

xvii

xviii

xix

xxi

xxiii

xxiv

xxv

xxvi

xxvii

xxix

xxx

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

67

68

69

70

71

72

73

74

75

76

77

78

79

80

81

82

83

84

85

86

87

88

89

90

91

92

93

94

95

96

97

98

99

100

101

103

104

105

106

107

108

109

110

111

112

113

114

115

116

117

118

119

120

121

122

123

124

125

126

127

128

129

130

131

132

133

134

135

136

137

138

139

140

141

142

143

144

145

147

148

149

150

151

152

153

154

155

156

157

158

159

160

161

162

163

164

165

166

167

168

169

170

171

172

173

174

175

176

177

178

179

180

181

182

183

184

185

186

187

188

189

190

191

192

193

194

195

196

197

198

199

200

201

202

203

204

205

206

207

208

209

210

211

212

213

214

215

216

217

218

219

220

221

222

223

224

225

226

227

228

229

230

231

232

233

234

235

236

237

238

239

240

241

242

243

245

246

247

248

249

250

251

252

253

254

255

256

257

258

259

260

261

262

263

264

265

266

267

268

269

270

271

272

273

274

275

276

277

278

279

280

281

282

283

284

285

286

287

288

289

290

291

292

293

295

296

297

298

299

300

301

302

303

304

305

306

307

308

309

310

311

312

313

314

315

316

317

318

319

320

321

322

323

324

325

326

327

328

329

330

331

332

333

334

335

336

337

338

339

340

341

342

343

344

345

347

348

349

350

351

352

353

354

355

356

357

358

359

360

361

362

363

364

365

366

367

368

369

370

371

372

373

374

375

376

377

378

379

380

381

382

383

384

385

386

387

388

389

390

391

392

393

394

395

396

397

398

399

400

401

402

403

404

405

406

407

408

409

410

411

412

413

414

415

416

417

419

420

421

422

423

424

425

427

428

429

430

431

432

Scrivener Publishing

100 Cummings Center, Suite 541J

Beverly, MA 01915-6106

Publishers at Scrivener

Martin Scrivener ([email protected])

Phillip Carmical ([email protected])

From Traditional Fault Tolerance to Blockchain

Wenbing Zhao

Cleveland State University

This edition first published 2021 by John Wiley & Sons, Inc., 111 River Street, Hoboken, NJ 07030, USA and Scrivener Publishing LLC, 100 Cummings Center, Suite 541J, Beverly, MA 01915, USA

© 2021 Scrivener Publishing LLC

For more information about Scrivener publications please visit www.scrivenerpublishing.com.

All rights reserved. No part of this publication may be reproduced, stored in a retrieval system, or transmitted, in any form or by any means, electronic, mechanical, photocopying, recording, or otherwise, except as permitted by law. Advice on how to obtain permission to reuse material from this title is available at http://www.wiley.com/go/permissions.

Wiley Global Headquarters

111 River Street, Hoboken, NJ 07030, USA

For details of our global editorial offices, customer services, and more information about Wiley products visit us at www.wiley.com.

Limit of Liability/Disclaimer of Warranty

While the publisher and authors have used their best efforts in preparing this work, they make no representations or warranties with respect to the accuracy or completeness of the contents of this work and specifically disclaim all warranties, including without limitation any implied warranties of merchant-ability or fitness for a particular purpose. No warranty may be created or extended by sales representatives, written sales materials, or promotional statements for this work. The fact that an organization, website, or product is referred to in this work as a citation and/or potential source of further information does not mean that the publisher and authors endorse the information or services the organization, website, or product may provide or recommendations it may make. This work is sold with the understanding that the publisher is not engaged in rendering professional services. The advice and strategies contained herein may not be suitable for your situation. You should consult with a specialist where appropriate. Neither the publisher nor authors shall be liable for any loss of profit or any other commercial damages, including but not limited to special, incidental, consequential, or other damages. Further, readers should be aware that websites listed in this work may have changed or disappeared between when this work was written and when it is read.

Library of Congress Cataloging-in-Publication Data

ISBN 978-1-119-68195-3

Cover image: Pixabay.Com

Cover design by Russell Richardson

Set in size of 11pt and Minion Pro by Manila Typesetting Company, Makati, Philippines

Printed in the USA

10 9 8 7 6 5 4 3 2 1

To My Parents

List of Figures

1.1 An example of a chain of threats with two levels of recursion.

1.2 The rollback recovery is enabled by periodically taking checkpoints and usually logging of the requests received.

1.3 With redundant instances in the system, the failure of a replica in some cases can be masked and the system continue providing services to its clients without any disruption.

1.4 Main types of assets in a distributed system.

2.1 An example distributed system.

2.2 Consistent and inconsistent global state examples.

2.3 An example of the domino effect in recovery with uncoordinated checkpointing.

2.4 Finite state machine specification for the coordinator in the Tamir and Sequin checkpointing protocol.

2.5 Finite state machine specification for the participant in the Tamir and Sequin checkpointing protocol.

2.6 Normal operation of the Tamir and Sequin checkpointing protocol in an example three-process distributed system.

2.7 Finite state machine specification for the Chandy and Lamport distributed snapshot protocol.

2.8 Normal operation of the Chandy and Lamport global snapshot protocol in an example three-process distributed system.

2.9 A comparison of the channel state definition between (a) the Chandy and Lamport distributed snapshot protocol and (b) the Tamir and Sequin global checkpointing protocol.

2.10 Example state intervals.

2.11 An example for pessimistic logging.

2.12 Transport level (a) and application level (b) reliable messaging.

2.13 Optimization of pessimistic logging: (a) concurrent message logging and execution (b) logging batched messages.

2.14 Probability density function of the logging latency.

2.15A summary of the mean logging latency and mean end-to-end latency under various conditions.

2.16 Probability density function of the end-to-end latency.

2.17 Normal operation of the sender-based logging protocol.

2.18 An example normal operation of the sender-based logging protocol.

2.19 Two concurrent failures could result in the loss of determinant information for regular messages.

3.1 The three-tier architecture.

3.2 The Java EE architecture.

3.3 An example runtime path of an end-user request.

3.4 Component class and component instances.

3.5 The chi-square cumulative distribution function for degree of freedom of 1, 2, 3, 4, 5.

3.6 The path shape of the example runtime path shown in Figure 3.3.

3.7 Component class and component instances.

3.8 Dependency templates for nodes, processes, network paths, and the neighbor sets.

3.9 A partial dependency graph for an example system.

3.10 The error function.

3.11 A hypothetical dependency graph with abnormality for each component and the weight for each edge labeled.

3.12 The components that form a cycle in the f-map are reduced to a single unit in the r-map for recursive recovery.

3.13 The architecture of an Operator Undo framework.

4.1 The replication algorithm is typically implemented in a fault tolerance middleware framework.

4.2 Active replication, without (top) and with (bottom) voting at the client.

4.3 Passive replication.

4.4 Semi-active replication.

4.5 A write-all algorithm for data replication.

4.6 The problem of the write-all-available algorithm for data replication.

4.7 Preventing a transaction from accessing a not-fully-recovered replica is not sufficient to ensure one-copy serializable execution of transactions.

4.8 An example run of the quorum consensus algorithm on a single data item.

4.9Basic steps for optimistic data replication for an operation-transfer system.

4.10 An example run of a system with three sites that uses Lamport clocks.

4.11 An example run of a system with three sites that uses vector clocks.

4.12 An example for the determination of the new version vector value after reconciling a conflict.

4.13 An example operation propagation using vector clocks in a system with three replicas.

4.14 An example for operation propagation using timestamp matrices in a system with three replicas.

4.15 Update commit using ack vectors in a system with three replicas.

4.16 Update commit using timestamp matrices in a system with three replicas.

4.17 An illustration of the CAP theorem.

4.18 Partition mode and partition recovery.

5.1 Examples of systems that ensure uniform total ordering and nonuniform total ordering.

5.2 In the sequencer based approach, a general system is structured into a combination of two subsystems, one with a single receiver and the other with a single sender of broadcast messages.

5.3 An example rotation sequencer based system in normal operation.

5.4 Normal operation of the membership view change protocol.

5.5 Membership change scenario: competing originators.

5.6 Membership change scenario: premature timeout.

5.7 Membership change scenario: temporary network partitioning.

5.8 A simplified finite state machine specification for Totem.

5.9 A successful run of the Totem Membership Protocol.

5.10 Membership changes due to a premature timeout by N2.

5.11 Messages sent before N1 fails in an example scenario.

5.12 Messages delivered during recovery for the example scenario.

5.13 Message sent before the network partitions into two groups, one with {N1, N2}, and the other with {N3, N4, N5}.

5.14 Messages delivered during recovery in the two different partitions for the example scenario.

5.15Causal ordering using vector clocks.

6.1 Normal operation of the Paxos algorithm.

6.2 A deadlock scenario with two competing proposers in the Paxos algorithm.

6.3 If the system has already chosen a value, the safety property for consensus would hold even without the promise-not-to-accept-older-proposal requirement.

6.4 If two competing proposers propose concurrently, the system might end up choosing two different values without the promise-not-to-accept-older-proposal requirement.

6.5 With the promise-not-to-accept-older-proposal requirement in place, even if two competing proposers propose concurrently, only a single value may be chosen by the system.

6.6 Normal operation of Multi-Paxos in a client-server system with 3 server replicas and a single client.

6.7 View change algorithm for Multi-Paxos.

6.8 With reconfigurations, a group of 7 replicas (initially 5 active and 2 spare replicas) can tolerate up to 5 single faults (without reconfigurations, only up to 3 faults can be tolerated).

6.9 The Primary and secondary quorums formation for a system with 3 main replicas and 2 auxiliary replicas.

6.10 The Primary and secondary quorums formation as the system reconfigures due to the failures of main replicas.

6.11 Normal operation of Cheap Paxos in a system with 3 main replicas and 1 auxiliary replica.

6.12 The Primary and secondary quorums formation for a system with 3 main replicas and 2 auxiliary replicas.

6.13 Normal operation of (Multi-) Fast Paxos in a client-server system.

6.14 Collision recovery in an example system.

6.15 Expansion of the membership by adding two replicas in method 1.

6.16 Expansion of the membership by adding two replicas in method 2.

6.17 Reduction of the membership by removing two replicas one after another.

7.1 Two scenarios that highlight why it is impossible to use 3 generals to solve the Byzantine generals problem.

7.2 The message flow and the basic steps of the OM(1) algorithms. 252

7.3 The message flow and the basic steps of the OM(2) algorithms. 254

7.4 Normal operation of the PBFT algorithm.

7.5PBFT view change protocol.

7.6 A worst case scenario for tentative execution.

7.7 Normal operation of Fast Byzantine fault tolerance.

7.8 Zyzzyva agreement protocol (case 1).

7.9 Zyzzyva agreement protocol (case 2).

7.10 A corner case in view change in Zyzzyva.

8.1 Bitcoin nodes.

8.2 The relationship between private key, public key, and address in Bitcoin.

8.3 Bitcoin transaction structure.

8.4 An example transaction chain in Bitcoin.

8.5 Bitcoin transactions per block data since its inception in 2009 through September 15, 2020. The data are downloaded from https://www.blockchain.com/charts/n-transactions-per-block.

8.6 Bitcoin block structure.

8.7 An issue with Bitcoin Merkle tree computation where different trees could produce the same Merkle root.

8.8 Bitcoin blockchain consensus and conflict resolution.

8.9 Structure of Ethereum transaction.

8.10 State transition via transaction in Bitcoin and Ethereum.

8.11 Ethereum smart contract structure.

8.12 An example transaction receipt in the JSON format. The content is color-coded. The yellow blocks are identifier information for the transaction, the contract invoked, and the block in which the transaction reside. The blue block contains the cumulative gas used. The green block contains the logs. The red block contains the logs Bloom filter string. The purple block contains the status of the transaction (success or not). The pink block contains the gas used for this transaction alone.

8.13 Ethereum block structure.

8.14 The annotated source code on verification of an ommer block.

8.15 An example on what kind of stale blocks may be chosen as an ommer block.

8.16 The annotated source code on the block reward scheme in Ethereum.

8.17 The cache size vs. the epoch number.

8.18 The dataset size vs. the epoch number.

8.19 The Ethash algorithm.

8.20 The double-spending attack steps.

9.1A model for public blockchain consensus.

9.2 Main loop used by a mining node to compete in the creation of a new block using PoS in PeerCoin.

9.3 Major steps in the CreateNewBlock function in PeerCoin PoS.

9.4 Major steps in the CreateCoinStake function in PeerCoin PoS.

9.5 Major steps in the CheckStakeKernelHash function in PeerCoin PoS.

9.6 Information included in the data stream for computing PoS hash.

9.7 Major steps in PoET consensus.

10.1 Main benefits of blockchain for applications.

10.2 A model for cyber-physical systems.

10.3 Blockchain-enabled CPS applications.

10.4 Key operations and their relationship with the CPS applications and the blockchain benefits.

10.5 Basic CPS operations with respect to the latency and throughput requirements.

10.6 Stale block rate for different block sizes and block intervals.

10.7 Throughput for different combinations of block sizes and block intervals.

10.8 Payment channel operation.

10.9 Two level logging for sensing data with blockchain.

10.10 The format for the raw data (together with the aggregated data tuple) for local logging.

10.11 Summary of the token paradigm.

10.12 A classification of blockchain applications based on token usage.

10.13 The impossibility trinity hypothesis.

List of Tables

7.1 Messages received and final decisions in two cases for OM(1,4).

7.2 Messages received and step (3) calculation in two cases for instances of OM(1) at G1.

7.3 Messages received and step (3) calculation in two cases for instances of OM(1) at G2.

7.4 Messages received and step (3) calculation in two cases for instances of OM(1) at G3.

7.5 Messages received and step (3) calculation in two cases for instances of OM(1) at G4.

7.6 Messages received and step (3) calculation in two cases for instances of OM(1) at G5.

7.7 Final decision made at each lieutenant in step (3) of OM(2).

10.1 Blockchain-enabled IoT-based applications.

10.2 Blockchain-enabled supply chain applications.

10.3 Blockchain-enabled manufacturing applications.

10.4 Blockchain-enabled automobile production.

10.5 Blockchain-enabled energy systems.

10.6 Blockchain-enabled healthcare systems.

10.7 Blockchain-enabled smart city.

10.8 Blockchain-enabled workplace.

10.9 General discussions on blockchain-enabled CPS applications.

Acknowledgments

This book is dedicated to my parents. They tried their best to help me pursue my dreams through so many years’ financial hardship. They took their life savings to pay the government so that I could be free to emigrate to the greatest country on earth. When I stumbled and had nowhere else to go, they took me under their wings and took care of me. I am forever in their debt.

I also would like to thank my beautiful wife, Hao, and my lovely children Dorothy, Emily, and Arthur. It is them that make my life so enjoyable and meaningful.

W. Z.

Preface

Cloud services are playing an ever increasingly important role in all aspects of our society, governments, businesses, and individuals alike. We depend on these services on a daily basis, such as financial (e.g., online banking and stock trading), e-commerce (e.g., online shopping), civil infrastructure (e.g., electric power grid and traffic control), entertainment (e.g., online gaming and multimedia streaming), and personal data storage (e.g., various cloud services such as Dropbox, Google Drive, and OneDrive). Behind these cloud services is distributed computing, which addresses many critical issues in making the services dependable and trustworthy. The most important of all is to build consensus in its internal operations that span many different computing nodes.

Distributed consensus has been studied for several decades, at least starting in 1970s. The reason why distributed consensus is important is that a distributed system would span over many computing nodes, and these nodes must maintain a common view on the system state so that each can operate as planned towards the objectives of the system. Prolonged inconsistency among different components of the system would damage the integrity of the system and ultimately would result in system-level failures that are visible to end users.

The cost of system failures is enormous. If a data center is brought down by a system failure, the average cost for downtime may range from $42,000 to about $300,000 per hour [2, 6]. The cost can be estimated by summing up the wasted expenses and the loss of revenue. While the labor cost of downtime may be estimated relatively easily (i.e., roughly, wasted expenses per hour = number of employees × average salary per hour) [13], it is much harder to estimate the loss of revenue, especially due to the damages on the reputation of the business and the loyalty of its potential customers [2].

Ensuring high availability of distributed systems is not cheap. In [7], the cost of data center is estimated to range from $450 per square foot for 99.671% availability (i.e., 28.8 hours of downtime per year), to $1,100 per square foot for 99.995% availability (i.e., 0.4 hours of downtime per year). That is perhaps one reason why about 59% of Fortune 500 companies suffer from 1.6 hours or more of downtime per week [2].

All classical consensus algorithms rely on a concept referred to as membership, that is, every node would know how many nodes are in the current membership, the logical role of each node, and how to reach other nodes. Another important construct is voting via the sending of messages to each other. Typically, one of the members would assume a special role, which is referred to as the primary or the coordinator. The coordinator might fail or become compromised, in which case, a new coordinator would be elected through voting. As such, classical distributed consensus algorithms are expensive, highly complex, and not scalable due to the heavy use of multiple rounds of message exchanges among the members.

In January 2009, the launch of the first practical cryptocurrency, Bitcoin [12], has completely changed the picture. The most essential prerequisite for a cryptocurrency is the assurance that it is virtually impossible for anyone to double-spend the money (i.e., cryptocurrency) one has. Bitcoin addressed this requirement by introducing an immutable distributed ledger in the form of a chain of blocks where each block aggregates hundreds or even thousands of transactions. This distributed ledger is often referred to as the blockchain. The immutability of the blockchain is achieved by several means: (1) cryptographic protection of the blockchain, such as digital signature, one-way hash function, and chaining of the blocks; (2) massive degree of replication of the blockchain across many nodes in the Bitcoin network; and (3) a novel probabilistic consensus algorithm that is completely different from classical consensus algorithms.

The consensus algorithm used in Bitcoin does not involve any explicit form of voting, therefore, there is no extra message exchange among the nodes in the Bitcoin network for the purpose of reaching consensus. In Bitcoin, the consensus building process is converted into a lottery-like stochastic process where the winner of the lottery gets the right to create a new block of transactions and collects an award [22]. To ensure fairness and to ensure the process to be a stochastic process, every participating node would work on a Proof-of-Work (PoW) based puzzle, and the first one that finds a solution becomes the winner. The PoW puzzle has a predefined target difficulty, and a participating node would experiment with different ways of making the hash of the block header meet the target difficulty. This is a CPU-intensive process. Hence, the only way a node could gain advantage over other nodes is to invest in better hardware that can perform the hash operation faster. The Bitcoin consensus algorithm is referred to as PoW and sometimes as the Nakamoto algorithm, named after Bitcoin’s creator, which is apparently a pseudonym. This novel form of consensus algorithm has aroused huge interest in the research and application of the blockchain technology [20]. Some even expressed the hope that the blockchain technology would lead to a new-form of economy, just like what the Internet has transformed our society [16].

This book contains two parts. The first part consists of the first 7 chapters and it covers the most essential techniques for building dependable distributed systems. The last 3 chapters form the second part, which covers the blockchain technology.

Chapter 1 introduces the basic concepts and terminologies of dependable distributed computing, as well as the primary means to achieve dependability.

Chapter 2 describes the checkpointing and logging mechanisms, which are widely used in practice to achieve some form of fault tolerance. Checkpointing and logging enable the recoverability of the system but do not prevent service disruption. These mechanisms are relatively simple to implement and understand, and they incur minimum runtime overhead while demanding very moderate extra resources (only stable storage). Furthermore, checkpointing and logging also serve as the foundation for more sophisticated dependability techniques.

Chapter 3 covers research works on recovery-oriented computing, including fault detection and diagnosis, microreboot, and system-level undo and redo. Recovery-oriented computing aims to facilitate faster recovery after a system failure and thereby improving the availability of the system. Similar to checkpointing and logging, the mechanisms for recovery-oriented computing do not prevent service disruption, hence, it is a promising approach for many e-commerce application, but not suitable for applications that require high reliability.

Chapter 4 outlines the replication technique for data and service fault tolerance. This is the fundamental technique to ensure high reliability. Through active replication (i.e., the use of multiple redundant copies of the application processes), the system would be able to mask the failure of a replica and continue to process clients’ requests (this is actually not entirely true, as we will show in later chapters, some failures may cause extended period of unavailability of the system). With replication comes the complexity of consistency issue. Ideally, the replicas should always maintain consistency with each other. However, doing so might not incur too much runtime overhead to be acceptable for some applications, or may cause extended period of system unavailability. Hence, strict consistency may have to be compromised either for better performance [15] or for better availability [19].

Chapter 5 explains the group communication systems, which can be used to implement active replication. A group communication system typically offers a totally ordered reliable multicast service for messages, a membership server, and a view synchrony service. These set of services help the replicas to maintain consistency even in the presence of failures, which would reduce the development cost of building dependable systems with active replication.

Chapter 6 discusses the consensus problem and describes several Paxos algorithms, including the Classic Paxos, Dynamic Paxos, Cheap Paxos, and Fast Paxos. While it is easy for a group of processes to agree on the same value if all processes can communicate with each other promptly and if none of them fails, distributed consensus is an incredibly hard problem when processes might fail and there might be extended delay to send or receive a message. The classical Paxos algorithm solves the consensus problem (under the non-malicious fault model) in a very elegant and efficient manner by separating the safety concern and the liveness concern [9]. Additional Paxos algorithm are developed to minimize the resources required, and to reduce the latency for achieving consensus by using a higher redundancy level [10, 18].

Chapter 7 introduces the problem of Byzantine fault tolerance. A Byzantine fault is synonymous with a malicious fault. Because a malicious faulty component may choose to behave like any of the non-malicious faults, the Byzantine fault model encompasses any arbitrary fault. The distributed consensus problem under the Byzantine fault model was first studied several decades ago by Lamport, Shostak, and Pease [11]. A much more efficient algorithm for achieving fault tolerance under the Byzantine fault model (referred to as Practical Byzantine fault tolerance) was proposed by Castro and Liskov in 1999 [5]. Since then, the research on Byzantine fault tolerance exploded. With the pervasiveness of cyberattacks and espionages, dealing with malicious faults becomes an urgent concern now compared with several decades ago.

Chapter 8 provides an overview of cryptocurrency and the blockchain technology, including the early conception of cryptocur rency, the first implementation of cryptocurrency in Bitcoin [12], the concept of smart contract and its implementation in Ethereum [4], as well as the vision of decentralized organizations [16] powered by smart contract and the blockchain technology.

Chapter 9 explains the consensus algorithms used in the blockchain technology in depth. Since the original PoW algorithm was introduced in Bitcoin, there has been great effort on improving PoW in various aspects, and on finding alternative algorithms that do not consume as much energy. A common set of requirements for such algorithms is laid out [22] and different proposals are examined with respect to the requirements [17]. In this chapter, we also discuss the Proof-of-Stake (PoS) consensus algorithm, which is the second most well-known algorithm behind PoW for blockchain. We will explain the PoS implementation in PeerCoin [8]. It is the first implementation of PoS in a practical cryptocurrency (i.e., PeerCoin) in 2013 and it has gone through several revisions to address its initial vulnerabilities.

Chapter 10 presents the applications of the blockchain technology and issues that will directly impact on how widely the blockchain technology can be adopted, including the value of the blockchain technology and the efforts to increase the throughput of blockchain systems [1, 3, 14, 21]. We primarily focus on blockchain applications in the area of cyber-physical systems (CPS) [20]. CPS is evolving rapidly and the integration of blockchain and CPS could potentially transform CPS design for much stronger security and robustness.

Wenbing ZhaoCleveland, USAMarch 2021

References

1. E. Akbari, W. Zhao, S. Yang, and X. Lou. The impact of block parameters on the throughput and security of blockchains. In

Proceedings of the 2020 International Conference on Blockchain Technology

, pages 13–18. ACM, 2020.

2. A. Arnold. Assessing the financial impact of downtime, April 2010.

http://www.businesscomputingworld.co.uk/assessing-the-financial-impact-of-downtime/

.

3. A. Back, M. Corallo, L. Dashjr, M. Friedenbach, G. Maxwell, A. Miller, A. Poelstra, J. Timón, and P. Wuille. Enabling blockchain innovations with pegged sidechains.

URL:

http://www.opensciencereview.com/papers/123/enablingblockchain-innovations-with-pegged-sidechains

, 72, 2014.

4. V. Buterin

et al.

Ethereum white paper.

https://ethereum.org/en/whitepaper/

, 2013.

5. M. Castro and B. Liskov. Practical byzantine fault tolerance. In

Proceedings of the third symposium on Operating systems design and implementation

, OSDI ’99, pages 173–186, Berkeley, CA, USA, 1999. USENIX Association.

6. Channel Insider. Unplanned it outages cost more than $5,000 per minute: Report.

http://www.channelinsider.com/c/a/Spotlight/Unplanned-IT-Outages-Cost-More-than-5000-per-Minute-Report-105393/

, May 2011.

7. J. Clark. The price of data center availability, October 2011.

http://www.data-centerjournal.com/design/the-price-of-data-center-availability/

.

8. S. King and S. Nadal. Ppcoin: Peer-to-peer crypto-currency with proof-of-stake.

https://www.peercoin.net/assets/paper/peercoin-paper.pdf

, 2008.

9. L. Lamport. Paxos made simple.

ACM SIGACT News (Distributed Computing Column)

, 32(4):18–25, December 2001.

10. L. Lamport. Fast paxos.

Distributed Computing

, 19(2):79–193, 2006.

11. L. Lamport, R. Shostak, and M. Pease. The byzantine generals problem.

ACM Transactions on Programming Languages and Systems

, 4:382–401, 1982.

12. S. Nakamoto. Bitcoin: A peer-to-peer electronic cash system.

https://bitcoin.org/bitcoin.pdf

, 2008.

13. T. Pisello and B. Quirk. How to quantify downtime, January 2004.

http://www.networkworld.com/careers/2004/0105man.html

.

14. J. Poon and T. Dryja. The bitcoin lightning network: Scalable off-chain instant payments, 2016.

15. Y. Saito and M. Shapiro. Optimistic replication.

ACM Comput. Surv.

, 37(1):42– 81, Mar. 2005.

16. M. Swan.

Blockchain: Blueprint for a new economy

. “O’Reilly Media, Inc.”, 2015.

17. W. Wang, D. T. Hoang, P. Hu, Z. Xiong, D. Niyato, P. Wang, Y. Wen, and D. I. Kim. A survey on consensus mechanisms and mining strategy management in blockchain networks.

IEEE Access

, 7:22328–22370, 2019.

18. W. Zhao. Fast paxos made easy: Theory and implementation.

International Journal of Distributed Systems and Technologies (IJDST)

, 6(1):15–33, 2015.

19. W. Zhao. Optimistic byzantine fault tolerance.

International Journal of Parallel, Emergent and Distributed Systems

, 31(3):254–267, 2016.

20. W. Zhao, C. Jiang, H. Gao, S. Yang, and X. Luo. Blockchain-enabled cyber-physical systems: A review.

IEEE Internet of Things Journal

, 2020.

21. W. Zhao, S. Yang, and X. Lou. Secure hierarchical processing and logging of sensing data and iot events with blockchain. In

Proceedings of the 2020 International Conference on Blockchain Technology

, pages 52–56. ACM, 2020.

22. W. Zhao, S. Yang, and X. Luo. On consensus in public blockchains. In

Proceedings of the 2019 International Conference on Blockchain Technology

, pages 1–5, 2019.

1Introduction

Distributed systems bring many benefits to us, for example, we can share resources such as data storage and processing cycles much more easily; we can collaborative on projects efficiently even if the team members span across the planet; we can solve challenging problems by utilizing the vast aggregated computing power of large scale distributed systems. However, if not designed properly, distributed systems may appear to be less dependable than standalone systems. As Leslie Lamport pointed out: “You know you have one (a distributed system) when the crash of a computer you’ve never heard of stops you from getting any work done” [10]. In this book, we introduce various dependability techniques that can be used to address the issue brought up by Lamport. In fact, with sufficient redundancy in the system, a distributed system can be made significantly more dependable than a standalone system because such a distributed system can continue providing services to its users even when a subset of its nodes have failed.

In this chapter, we introduce the basic concepts and terminologies of dependable distributed computing and system security, and outline the primary approaches to achieving dependability.

1.1 Basic Concepts and Terminologies for Dependable Computing

The term “dependable systems” has been used widely in many different contexts and often means different things. In the context of distributed computing, dependability refers to the ability of a distributed system to provide correct services to its users despite various threats to the system such as undetected software defects, hardware failures, and malicious attacks.

To reason about the dependability of a distributed system, we need to model the system itself as well as the threats to the system clearly [2]. We also define common attributes of dependable distributed systems and metrics on evaluating the dependability of a distributed system.

1.1.1 System Models

A system is designed to provide a set of services to its users (often referred to as clients). Each service has an interface that a client could use to request the service. What the system should do for each service is defined as a set of functions according to a functional specification for the system. The status of a system is determined by its state. The state of a practical system is usually very complicated. A system may consist of one or more processes spanning over one or more nodes, and each process might consist of one or more threads. The state of the system is determined collectively by the state of the processes and threads in the system. The state of a process typically consists of the values of its registers, stack, heap, file descriptors, and the kernel state. Part of the state might become visible to the users of the system via information contained in the responses to the users’ requests. Such state is referred to as external state and is normally an abstract state defined in the functional specification of the system. The remaining part of the state that is not visible to users is referred to as internal state. A system can be recovered to where it was before a failure if its state was captured and not lost due to the failure (for example, if the state is serialized and written to stable storage).

From the structure perspective, a system consists of a one or more components (such as nodes or processes), and a system always has a boundary that separates the system from its environment. Here environment refers to all other systems that the current system interact with. Note that what we refer to as a system is always relative with respect to the current context. A component in a (larger) system by itself is a system when we want to study its behavior and it may in turn have its own internal structures.

1.1.2 Threat Models

Whether or not a system is providing correct services is judged by whether or not the system is performing the functions defined in the functional specification for the system. When a system is not functioning according to its functional specification, we say a service failure (or simply failure) has occurred. The failure of a system is caused by part of its state in wrong values, i.e.,errors in its state. We hypothesize that the errors are caused by some faults [6]. Therefore, the threats to the dependability of a system are modeled as various faults.

A fault might not always exhibit itself and cause error. In particular, a software defect (often referred to as software bug) might not be revealed until the code that contains the defect is exercised when certain condition is met. For example, if a shared variable is not protected by a lock in a multithreaded application, the fault (often referred to as race condition) does not exhibit itself unless there are two or more threads trying to update the shared variable concurrently. As another example, if there is no boundary check on accessing to an array, the fault does not show up until a process tries to access the array with an out-of-bound index. When a fault does not exhibit itself, we say the fault is dormant. When certain condition is met, the fault will be activated.

When a fault is activated, initially the fault would cause an error in the component that encompasses the defected area (in programming code). When the component interacts with other components of the system, the error would propagates to other components. When the errors propagate to the interface of the system and render the service provided to a client deviate from the specification, a service failure would occur. Due to the recursive nature of common system composition, the failure of one system may cause a fault in a larger system when the former constitutes a component of the latter, as shown in Figure 1.1. Such relationship between fault, error, and failure is referred to as “chain of threats” in [2]. Hence, in literature the terms “faults” and “failures” are often used interchangeably.

Figure 1.1An example of a chain of threats with two levels of recursion.

Of course, not all failures can be analyzed with the above chain of threats. For example, a power outage of the entire system would immediately cause the failure of the system.

Faults can be classified based on different criteria, the most common classifications include:

◾ Based on the source of the faults, faults can be classified as:

– Hardware faults, if the faults are caused by the failure of hardware components such as power outages, hard drive failures, bad memory chips, etc.

– Software faults, if the faults are caused by software bugs such as race conditions and no-boundary-checks for arrays.

– Operator faults, if the faults are caused by the operator of the system, for example, misconfiguration, wrong upgrade procedures, etc.

◾ Based on the intent of the faults, faults can be classified as:

– Non-malicious faults, if the faults are not caused by a person with malicious intent. For example, the naturally occurred hardware faults and some remnant software bugs such as race conditions are non-malicious faults.

– Malicious faults, if the faults are caused by a person with intent to harm the system, for example, to deny services to legitimate clients or to compromise the integrity of the service. Malicious faults are often referred to as commission faults, or Byzantine faults [5].

◾ Based on the duration of the faults, faults can be classified as:

– Transient faults, if such a fault is activated momentarily and becomes dormant again. For example, the race condition might often show up as transient fault because if the threads stop accessing the shared variable concurrently, the fault appears to have disappeared.

– Permanent faults, if once a fault is activated, the fault stays activated unless the faulty component is repaired or the source of the fault is addressed. For example, a power outage is considered a permanent fault because unless the power is restored, a computer system will remain powered off. A specific permanent fault is the (process) crash fault. A segmentation fault could result in the crash of a process.

◾ Based on how a fault in a component reveals to other components in the system, faults can be classified as:

– Content faults, if the values passed on to other components are wrong due to the faults. A faulty component may always pass on the same wrong values to other components, or it may return different values to different components that it interacts with. The latter is specifically modeled as Byzantine faults [5].

– Timing faults, if the faulty component either returns a reply too early, or too late alter receiving a request from another component. An extreme case is when the faulty component stops responding at all (

i.e.,

it takes infinite amount of time to return a reply),

e.g.,

when the component crashes, or hangs due to an infinite loop or a deadlock.

◾ Based on whether or not a fault is reproducible or deterministic, faults (primarily software faults) can be classified as:

– Reproducible/deterministic faults. The fault happens deterministically and can be easily reproduced. Accessing a null pointer is an example of deterministic fault, which often would lead to the crash of the system. This type of faults can be easily identified and repaired.

– Nondeterministic faults. The fault appears to happen nondeterministically and hard to reproduce. For example, if a fault is caused by a specific interleaving of several threads when they access some shared variable, it is going to be hard to reproduce such a fault. This type of software faults is also referred to as Heisenbugs to highlight their uncertainty.

◾ Given a number of faults within a system, we can classify them based on their relationship:

– Independent faults, if there is no causal relationship between the faults,

e.g.,

given fault A and fault B, B is not caused by A, and A is not caused by B.

– Correlated faults, if the faults are causally related,

e.g.,

given fault A and fault B, either B is caused by A, or A is caused by B. If multiple components fail due to a common reason, the failures are referred to as common mode failures.

When the system fails, it is desirable to avoid catastrophic consequences, such as the loss of life. The consequence of the failure of a system can be alleviated by incorporating dependability mechanisms into the system such that when it fails, it stops responding to requests (such systems are referred to as fail-stop systems), if this is impossible, it returns consistent wrong values instead of inconsistent values to all components that it may interact with. If the failure of a system does not cause great harm either to human life or to the environment, we call such as system a fail-safe system. Usually, a fail-safe system defines a set of safe states. When a fail-safe system can no longer operate according to its specification due to faults, it can transit to one of the predefined safe states when it fails. For example, the computer system that is used to control a nuclear power plant must be a fail-safe system.

Perhaps counter intuitively, it is often desirable for a system to halt its operation immediately when it is in an error state or encounters an unexpected condition. The software engineering practice to ensure such a behavior is called fail fast [9]. The benefits of the fail-fast practice are that it enables early detection of software faults and the diagnosis of faults. When a fault has been propagated to many other components, it is a lot harder to pinpoint the source of the problem.

1.1.3 Dependability Attributes and Evaluation Metrics

A dependable system has a number of desirable attributes and some of the attributes can be used as evaluation metrics for the system. We classify these attributes into two categories: (1) those that are fundamental to, and are immediate concern of, all distributed systems, including availability, reliability, and integrity; and (2) those that are secondary and may not be of immediate concern of, or be applicable to all systems, such as maintainability and safety.

The availability and reliability of a system can be used as evaluation metrics. Other attributes are normally not used as evaluation metrics because it is difficult to quantify the integrity, maintainability, and safety of a distributed system.

1.1.3.1 Availability

Availability is a measure of the readiness of a dependable system at a point in time, i.e., when a client needs to use a service provided by the system, the probability that the system is there to provide the service to the client. The availability of a system is determined by two factors:

◾ Mean time to failure (MTTF). It characterizes how long the system can run without a failure.

◾ Mean time to repair (MTTR). It characterizes how long the system can be repaired and recovered to be fully functional again.

Availability is defined to be MTTF/(MTTF + MTTR). Hence, the larger the MTTF, and higher the availability of a system. Similarly, the smaller the MTTR, the higher the availability of the system.

The availability of a system is typically represented in terms of how many 9s. For example, if a system is claimed to offer five 9s availability, it means that the system will be available with a probability of 99.999%, i.e., the system has 10−5 probability to be not available when a client wants to access the service offered by the system at any time, which means that the system may have at most 5.256 minutes of down time a year.

1.1.3.2 Reliability

Reliability is a measure of the system’s capability of providing correct services continuously for a period of time. It is often represented as the probability for the system to do so for a given period of time t, i.e., Reliability = R(t). The larger the t, the lower the reliability value. The reliability of a system is proportional to MTTF. The relationship between reliability and availability can be represented as . Reliability is very different from availability. If a system fails frequently but can recover very quickly, the system may have high availability. However, such a system would have very low reliability.

1.1.3.3 Integrity

Integrity refers to the capability of a system to protect its state from being compromised under various threats. In dependable computing research, integrity is typically translated into the consistency of server replicas, if redundancy is employed. As long as the number of faulty replicas does not exceed a pre-defined threshold, the consistency of the remaining replicas would naturally imply the integrity of the system.