
- Publisher: Pontificia Universidad Católica del Ecuador

- Category: Education

- Language: English

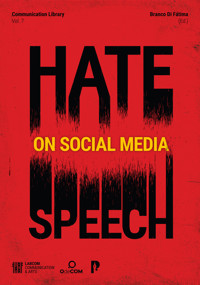

This book explores the nature of hate speech on social media. Readers will find chapters written by 21 authors from 18 universities or research centers. It includes researchers from 11 countries, prioritizing a diversity of approaches from the Global North and Global South – Brazil, Cyprus, Ethiopia, Germany, Nigeria, Portugal, South Africa, Spain, Switzerland, Turkey, and the USA. The analyses herein involve the realities in an even larger number of countries, given the transnational approach of some of these studies. One can find a preview of the chapters at the beginning of the book, with abstracts organized in a separate section. It is evident that the authors study the impact of recent events on hate speech – the Covid-19 pandemic, Russia- Ukraine war, the refugee crisis – and recurrent attacks on minority groups such as women, immigrants, or the LGBTQ+ community. The authors employ classic and digital research methods, using quantitative and qualitative data gathered from platforms like Telegram, Facebook, Instagram, Twitter, and YouTube. As a result, readers will encounter taxonomic proposals, new methodological approaches, theoretical frameworks, and mapping of behavioral patterns.


Page count: 384

Year of publication: 2023


Preface A GLOBAL APPROACH TO HATE SPEECH ON SOCIAL MEDIA

Abstracts

1. AGGRAVATED ANTI-ASIAN HATE SINCE COVID-19 AND THE #STOPASIANHATE MOVEMENT: CONNECTION, DISJOINTNESS, AND CHALLENGES

2. IS IT FINE? INTERNET MEMES AND HATE SPEECH ON TELEGRAM IN RELATION TO RUSSIA’S WAR IN UKRAINE

3. SYRIAN REFUGEES IN THE SHADE OF THE ‘ANTI-SYRIANS’ DISCOURSE: EXPLORING DISCRIMINATORY DISCURSIVE STRATEGIES ON TWITTER

4. DISSEMINATING AND RESISTING ONLINE HATE SPEECH IN TURKEY

5. HATE SPEECH ON TWITTER: THE LGBTIQ+ COMMUNITY IN SPAIN

6. CIRCULATION SYSTEMS, EMOTIONS, AND PRESENTEEISM: THREE VIEWS ON HATE SPEECH BASED ON ATTACKS ON JOURNALISTS IN BRAZIL

7. CLIPPING: HATE SPEECH IN SOCIAL MEDIA AGAINST FEMALE SPORTS JOURNALISTS IN GREECE

8. MAPPING SOCIAL MEDIA HATE SPEECH REGULATIONS IN SOUTHERN AFRICA: A REGIONAL COMPARATIVE ANALYSIS

9. ETHIOPIAN SOCIO-POLITICAL CONTEXTS FOR HATE SPEECH

10. SOCIAL MEDIA NARRATIVES AND REFLECTIONS ON HATE SPEECH IN NIGERIA

11. HATE SPEECH AMONG SECURITY FORCES IN PORTUGAL

Chapter 1 AGGRAVATED ANTI-ASIAN HATE SINCE COVID-19 AND THE #STOPASIANHATE MOVEMENT: CONNECTION, DISJOINTNESS, AND CHALLENGES

Chapter 2 IS IT FINE? INTERNET MEMES AND HATE SPEECH ON TELEGRAM IN RELATION TO RUSSIA’S WAR IN UKRAINE

Chapter 3 SYRIAN REFUGEES IN THE SHADE OF THE ‘ANTI-SYRIANS’ DISCOURSE: EXPLORING DISCRIMINATORY DISCURSIVE STRATEGIES ON TWITTER

Chapter 4 DISSEMINATING AND RESISTING ONLINE HATE SPEECH IN TURKEY

Chapter 5 HATE SPEECH ON TWITTER: THE LGBTIQ+ COMMUNITY IN SPAIN

Chapter 6 CIRCULATION SYSTEMS, EMOTIONS, AND PRESENTEEISM: THREE VIEWS ON HATE SPEECH BASED ON ATTACKS ON JOURNALISTS IN BRAZIL

Chapter 7 CLIPPING: HATE SPEECH IN SOCIAL MEDIA AGAINST FEMALE SPORTS JOURNALISTS IN GREECE

Chapter 8 MAPPING SOCIAL MEDIA HATE SPEECH REGULATIONS IN SOUTHERN AFRICA: A REGIONAL COMPARATIVE ANALYSIS

Chapter 9 ETHIOPIAN SOCIO-POLITICAL CONTEXTS FOR HATE SPEECH

Chapter 10 SOCIAL MEDIA NARRATIVES AND REFLECTIONS ON HATE SPEECH IN NIGERIA

Chapter 11 HATE SPEECH AMONG SECURITY FORCES IN PORTUGAL

Authors

Preface A GLOBAL APPROACH TO HATE SPEECH ON SOCIAL MEDIA

Branco Di Fátima, LabCom – University of Beira Interior

Hate speech manifests itself in different social contexts, such as political debates, artistic expression, professional sports, or work environments. However, the rapid development of digital technologies, and especially of social media platforms, has created additional challenges to understanding this extreme act. Although this field of study is already over two decades old (Duffy, 2003), many questions still need to be answered.

There is no universally accepted definition of hate speech. Its characterization is a point of intellectual dispute among different worldviews, many outside the Western universe and little known. In general, hate speech is an attack on a person or group, usually targeting members of a social minority. Thus, it can be classified as sexist, racist, xenophobic, ageist, fatphobic, or homophobic, among others. Haters direct their attacks, for example, against women, Black people, immigrants, seniors, disabled people, and the LGBTQ+ community. The United Nations (n.d.) emphasizes that hate speech refers to offenses based on inherent traits, such as race, nationality, or gender.

Hate speech can also originate from and amplify religious intolerance (against Catholics or Muslims, for example), inflame tribal conflicts, or fuel prejudice against individuals within the same country (south vs north, capital vs countryside). Given the diversity of approaches, understanding the phenomenon involves the context in which it emerges. As a communicative act, the roots of hate speech are the codes and values of a particular culture (Matamoros-Fernández & Farkas, 2021).

These are only some of the challenges. Empirical studies based on Big Data show that detecting hate speech on social media is difficult (Miranda et al., 2022). Indeed, haters mobilize numerous subterfuges to obscure their intentions. For example, haters can use irony, humor, and satire to disguise a violent narrative (Schwarzenegger & Wagner, 2018). Moreover, in order to dehumanize opponents, a systematic strategy is to compare victims to repulsive animals, such as snakes, wasps, spiders, or cockroaches (Ndahinda & Mugabe, 2022).

Hate speech on social media can be verbal (posts, comments, articles, etc.) and non-verbal (emojis, stickers, photos, etc.). These multimedia attacks create and reinforce stereotypes based on toxic language. They can range from mere insults to calls for physical extermination and genocide. Sometimes they stem from emotional outbursts and go viral online, migrating from one platform to another (López-Paredes & Di Fátima, 2023). Thus, they affect both the victims and society itself by undermining democratic spaces for deliberation.

Regulating hate speech is not a simple issue. Hate speech is sometimes driven by nationalist groups or far-right parties, going hand in hand with disinformation and conspiracy theories. Occasionally, haters invoke freedom of expression to justify their behavior (Amores et al., 2021). In the name of combating hate, authoritarian states have also passed vague laws that censor the public sphere (Garbe, Selvik & Lemaire, 2023). So whose responsibility is it to regulate hate speech: the governments’, social media platforms’, or society’s? It is a game of chess, and every move counts.

Some authors have pointed to the power of social media in shaping hate speech (Müller & Schwarz, 2021). On this view, the platforms are open and tend to favor violent narratives (Brown, 2018). However, how one can regulate hate speech without interfering with freedom of expression remains an open question. First, it is urgent to map hate online and the results of its platformization, which has fostered old and new forms of abuse (Gagliardone, 2019).

Hate speech is more complex and diverse on social media. It spreads at high speed and can impact behaviors beyond the borders where it originates. Hate is ubiquitous, interactive, and multimedia. It is available 24/7, reaching a much larger audience. On social media, haters can be anonymous and find support from individuals with the same aggressive mindset. This is just a brief characterization, and it certainly leaves many theoretical gaps to be filled.

This book explores the nature of hate speech on social media. Readers will find chapters written by 21 authors from 18 universities or research centers. It includes researchers from 11 countries, prioritizing a diversity of approaches from the Global North and Global South – Brazil, Cyprus, Ethiopia, Germany, Nigeria, Portugal, South Africa, Spain, Switzerland, Turkey, and the USA. The analyses herein involve the realities in an even larger number of countries, given the transnational approach of some of these studies.

One can find a preview of the chapters at the beginning of the book, with abstracts organized in a separate section. It is evident that the authors study the impact of recent events on hate speech – the Covid-19 pandemic, Russia-Ukraine war, the refugee crisis – and recurrent attacks on minority groups such as women, immigrants, or the LGBTQ+ community. The authors employ classic and digital research methods, using quantitative and qualitative data gathered from platforms like Telegram, Facebook, Instagram, Twitter, and YouTube. As a result, readers will encounter taxonomic proposals, new methodological approaches, theoretical frameworks, and mapping of behavioral patterns.

While hate speech is rooted in national identity and shaped by context, it is a global phenomenon that requires transnational study to uncover its unique characteristics. For example, who are the primary targets? What forms do the messages take? How do virtual armies replicate violent narratives? What emotional drivers underlie hate speech on social media? And lastly, how can legal dilemmas surrounding regulation be resolved?

The construction of these answers is open and subject to constant dispute. Theoretical and methodological normalization needs to be improved. Currently, hate speech in digital environments challenges academia and society. This book aims to dispel some of these uncertainties.

References

Amores, J. J., Blanco-Herrero, D., Sánchez-Holgado, P. & Frías-Vázquez, M. (2021). Detectando el odio ideológico en Twitter: Desarrollo y evaluación de un detector de discurso de odio por ideología política en tuits en español. Cuadernos.info, 49(2021), 98-124. https://doi.org/10.7764/cdi.49.27817

Brown, A. (2018). What is so special about online (as compared to offline) hate speech? Ethnicities, 18(3), 297-326. https://doi.org/10.1177/1468796817709846

Duffy, M. E. (2003). Web of hate: A fantasy theme analysis of the rhetorical vision of hate groups online. Journal of Communication Inquiry, 27(3), 291-312. https://doi.org/10.1177/0196859903252850

Gagliardone, I. (2019). Defining online hate and its “Public Lives”: What is the place for “extreme speech”? International Journal of Communication, 13(2019), 3068-3087.

Garbe, L., Selvik, L. M. & Lemaire, P. (2023). How African countries respond to fake news and hate speech. Information, Communication & Society, 26(1), 86-103. https://doi.org/10.1080/1369118X.2021.1994623

López-Paredes, M. & Di Fátima, B. (2023). Memética: la reinvención de las narrativas en el mundo digital, protestas sociales y discursos de odio. In: Márquez, O.C. & Parras, A.P. (Eds.). Visiones contemporáneas: narrativas, escenarios y ficciones (pp. 25-37). Madrid: Fragua.

Matamoros-Fernández, A. & Farkas, J. (2021). Racism, hate speech, and social media: A systematic review and critique. Television & New Media, 22(2), 205-224. https://doi.org/10.1177/1527476420982230

Miranda, S., Malini, F., Di Fátima, B. & Cruz, J. (2022). I love to hate! The racist hate speech in social media. Proceedings of the 9th European Conference on Social Media (pp. 137-145). Krakow: Academic Conferences International (ACI).

Müller, K. & Schwarz, C. (2021). Fanning the flames of hate: Social media and hate crime. Journal of the European Economic Association, 19(4), 2131-2167. https://doi.org/10.1093/jeea/jvaa045

Ndahinda, F. M. & Mugabe, A. S. (2022). Streaming hate: Exploring the harm of anti-Banyamulenge and anti-Tutsi hate speech on Congolese social media. Journal of Genocide Research, 1-15. https://doi.org/10.1080/14623528.2022.2078578

Schwarzenegger, C. & Wagner, A. J. (2018). Can it be hate if it is fun? Discursive ensembles of hatred and laughter in extreme right satire on Facebook. Studies in Communication and Media, 7(4), 473-498. https://doi.org/10.5771/2192-4007-2018-4-473

United Nations (n.d.). Understanding hate speech: What is hate speech? https://shre.ink/cVjq

Abstracts

1. AGGRAVATED ANTI-ASIAN HATE SINCE COVID-19 AND THE #STOPASIANHATE MOVEMENT: CONNECTION, DISJOINTNESS, AND CHALLENGES

Lizhou Fan, University of Michigan; Huizi Yu, University of Michigan; Anne J. Gilliland, University of California

As the COVID-19 pandemic has unfolded, there has been a dramatic increase in incidents of anti-Asian hate, including violent hate crimes such as the 2021 Atlanta Spa Shootings. Documenting and analyzing hate and counterspeech is essential and urgent work that can both record history in the making and provide new insights for those working to de-escalate hate and diminish social inequity. By building two social media archives of hate and counterspeech on Twitter and using them to conduct different kinds of computational discourse analyses, we identified how anti-Asian hate has increased since the beginning of the COVID-19 pandemic, and how the #StopAsianHate movement has responded to many aspects of this hate, including stereotyping, stigmatization, and use of derogatory language. However, our research suggests that it remains challenging to counter anti-Asian hate speech and the associated movement by responding in direct and actionable ways that could attract more public attention and result in systemic changes in how Asians and Asian Americans are regarded in US society. We also argue that the forms of analysis we describe here show strong potential for use in the emerging field of computational archival science – supporting archival digital intelligence by assisting archivists and researchers to identify important themes related to emerging social issues efficiently, and connections between very large digital collections, especially those of social media archives.

Keywords: hate speech, counterspeech, anti-Asian, Covid-19, social media, Twitter

2. IS IT FINE? INTERNET MEMES AND HATE SPEECH ON TELEGRAM IN RELATION TO RUSSIA’S WAR IN UKRAINE

Mykola Makhortykh, University of Bern; González-Aguilar, International University of La Rioja

The rise of digital platforms has changed the ways hate speech is disseminated today. Internet memes, namely digital content units sharing features of content and form, are one of the new formats in which hate speech is spread across different online platforms. Distinguished by their virality and frequent use of humoristic remixing of popular culture elements, memes are increasingly used by extremist groups to normalize hate speech towards vulnerable communities. However, the relationship between Internet memes and hate speech in the context of armed conflicts, where the use of hate speech is both particularly common and worrisome, currently remains under-studied. Using a sample of memes from pro-war Russophone Telegram channels, we examine this relationship in the context of Russia’s ongoing war in Ukraine. Relying on intertextual discourse analysis, we identify three main functions of memes: 1) spreading hate speech; 2) amplifying personal attacks; and 3) glorifying the Russian army and its officials.

Keywords: memes, war, Telegram, Russia, Ukraine, hate speech

3. SYRIAN REFUGEES IN THE SHADE OF THE ‘ANTI-SYRIANS’ DISCOURSE: EXPLORING DISCRIMINATORY DISCURSIVE STRATEGIES ON TWITTER

Özlem Alikılıç, Yaşar University, Türkiye; Gökaliler, Yaşar University, Türkiye; İnanç Alikılıç, Malatya Turgut Özal University, Türkiye

With the increase in user-generated content on social media, immigrants are often subjected to hate speech. Recently, Turkey has become an important region for migrants from Syria, and the refugee problem has become an issue frequently shared by the Turkish public on social media. This study evaluates the hate dimension of content produced on Twitter regarding Syrian refugees in Turkey. Over two months, a total of 245,587 tweets posted under the hashtags ‘#suriyeli’ (Syrian), ‘#mülteci’ (refugee), ‘#suriyelimülteci’ (Syrian refugee), ‘#suriyelileriistemiyoruz’ (we don’t want the Syrians), and ‘#suriyelilerdefolsun’ (Syrians piss off) were collected and analyzed for their discursive strategies. Findings from the tweets showed that those who have negative views about Syrian refugees use discriminatory language to glorify the ‘we’ phenomenon while separating the refugees into ‘others’. The findings also showed that positive tweets about Syrian refugees consisted of content around religion and support for government policies. Among the negative content, the volume of criticism directed at the Turkish government and its policies is notable.

Keywords: Syrian refugees, Turkey, online hate speech, discriminatory discourse, Twitter

4. DISSEMINATING AND RESISTING ONLINE HATE SPEECH IN TURKEY

Mine Gencel Bek, Universität Siegen

The chapter aims to contribute to the book with the Turkish case. It first reviews the literature on hate speech in Turkey, with a special focus on the studies supported by the Hrant Dink Foundation, which was established after the killing of Hrant Dink in 2007. A case study on hate speech recently directed at the popular singer Sezen Aksu follows. It reveals how hate speech is directed at the singer along different axes, including womanhood, LGBTQI, non-Turkish, and non-Muslim identities in the name of religion and Islam, as well as the association with animals as a hate object. Finally, the chapter discusses ideas and attempts to counter hate speech, along with their limitations and potential.

Keywords: hate speech, Twitter, popular culture, Turkey, sexism

5. HATE SPEECH ON TWITTER: THE LGBTIQ+ COMMUNITY IN SPAIN

Patricia de-Casas-Moreno, University of Extremadura; Parejo-Cuéllar, University of Extremadura; Vizcaíno-Verdú, University of Huelva

The Internet, and specifically social media, became an area of interaction where hate speech gained visibility. Several minority groups have been exposed to an explosion of hateful comments due to their gender identity. In this case, the LGBTIQ+ community (Lesbian, Gay, Bisexual, Transgender, Intersex, Queer, and other identities not included in the above) became a target because of sexual orientation. This study intends to compile a comprehensive theoretical framework, as well as detailed case studies in Spain, to offer an overview of the current panorama of the aforementioned group. We also outline the prevailing hate speech on social media such as Twitter. We conclude that there is still much to debate in this context and that platforms should be encouraged to strengthen their anti-hate-speech measures to prevent and avoid this kind of discourse.

Keywords: social media, LGBTIQ+, hate speech, Twitter, toxicity, Spain

6. CIRCULATION SYSTEMS, EMOTIONS, AND PRESENTEEISM: THREE VIEWS ON HATE SPEECH BASED ON ATTACKS ON JOURNALISTS IN BRAZIL

Edson Capoano, University of Minho; Vítor de Sousa, University of Trás-os-Montes and Alto Douro; Prates, University Presbyterian Mackenzie

This text starts from the hate speech promoted during the presidency of Jair Messias Bolsonaro (2019-2022) to reflect on how we got here as individuals, communicators, and society, and on the characteristics of this contemporary communicational phenomenon. To this end, we present three perspectives for understanding hate speech in an interdisciplinary way. The first is the individual and biological sphere: the neurological triggers of anger, the emotion that sustains hate speech, a theme so dear to the social sciences that it has caused the so-called emotional turn in the field. Next, we present the systemic issue of the circuit of hate narratives in communication environments: how they arise, how they propagate through networked information supports, and how they feed back into one another across interlinked content. Finally, we broaden the debate to the issue of historical presentism, a phenomenon of postmodernity that makes heterogeneous discourse threatening to homogenizing groups and leaves no space for the historical nuances needed to understand complex themes, which are simplified by hate speech circulating at the speed of digital social networks. With this approach, we hope to better understand the motivators of hate speech, such as those reported at the beginning of this text, and perhaps understand how to stop this spiral of narrative violence that affects current society.

Keywords: hate speech, communication, circulation, emotions, presenteeism

7. CLIPPING: HATE SPEECH IN SOCIAL MEDIA AGAINST FEMALE SPORTS JOURNALISTS IN GREECE

Lida Tsene, Open University of Cyprus

Web 2.0 gave us the opportunity to explore new ways of collaboration and communication. Digital platforms and social media became fertile ground for people to interact and express their opinions unfiltered, while the non-obligation to reveal oneself directly added an extra level of freedom in the way they shared news, thoughts and observations. But unfortunately, there is also the other side of the same coin. This democratisation has also facilitated heated discussions, which frequently result in the use of insulting and offensive language. In this chapter we discuss sexist hate speech towards female sports journalists in Greece. Our research hypothesis derives from two basic facts related to the underrepresentation of women in both media and sports. Through content analysis and in-depth interviews we attempted to explore whether women working in sports journalism in Greece have been targets of online abuse, with a special focus on sexist hate speech, how they respond, the impact this might have on their professional development and mental health, the role of the Internet and social media, and possible solutions to this challenge.

Keywords: equity, gender, hate speech, sexist hate speech, social media, sports journalism

8. MAPPING SOCIAL MEDIA HATE SPEECH REGULATIONS IN SOUTHERN AFRICA: A REGIONAL COMPARATIVE ANALYSIS

Allen Munoriyarwa, University of Botswana

This chapter provides a comparative content analysis of social media hate speech in seven selected Southern African countries: South Africa, Zimbabwe, Eswatini (formerly Swaziland), Lesotho, Zambia, the Democratic Republic of the Congo (DRC), and Botswana. Its aim is to examine how these countries regulate social media hate speech and how they legally sanction it. The chapter observes that, as a preventive measure against social media hate speech, regulations have failed in these countries. It notes the weaponisation of hate speech to haunt legitimate anti-regime forces in some of these countries, and further notes how social media hate speech is increasingly blurring the lines between the maintenance of social order, political authoritarianism and free speech. The chapter concludes that an overhaul of social media hate speech regulations is necessary in Southern Africa if the laws are to serve their legal purposes.

Keywords: hate speech, social media, Southern African region, authoritarianism, weaponization

9. ETHIOPIAN SOCIO-POLITICAL CONTEXTS FOR HATE SPEECH

Muluken Asegidew Chekol, Debre Markos University

Continuous protests by Ethiopian youths over two years forced the EPRDF government into a reform that brought Abiy Ahmed to the position of Prime Minister on April 2, 2018. This change resulted in many improvements in the content and structure of the media, including online platforms. For the most part, the media had been filled with unison messages. Nevertheless, the situation did not last long: ethnic tension has risen again, and ethnically motivated conflicts have become prevalent, causing death and displacement. Hate speech and fake news have also seemingly become common in some mainstream and online media, which ultimately led the state to endorse a law to suppress hate speech and fake news. This chapter is based on empirical studies. The study employed a mixed-methods research approach to understand and explain the prevalence, nature, severity, and regulation of social media hate speech in Ethiopia. As a data source, hate speech analysis was carried out on a multi-stage sample of user comments posted on the social media sites of three purposefully selected Ethiopian ethnic media outlets, namely ASRAT, OMN, and DWTV. In addition to the content analysis of the online comments on the Facebook pages and YouTube channels of the three media outlets, the study used focus group discussions, interviews, and document analysis to obtain relevant data. Accordingly, the study found a substantial prevalence of social media hate speech, dominated by offensive-level severity, with less incitement to violence and genocide. It also found that hate based on ethnic politics was predominant. Identity-driven contestation and reform incidents were the main trigger factors of social media hate speech. It is argued that the law in place to minimize hate speech may be used by the executive body for political interests to silence critical voices. As such, the prevalence of hate speech in online media will have severe effects on the Ethiopian community. Along with the law, political dialogue to dig out the root causes of hate speech and enhanced media literacy in the country could be potential solutions to deter hate speech in Ethiopia.

Keywords: hate prevalence, hate severity, hate natures, speech regulation, Ethiopia

10. SOCIAL MEDIA NARRATIVES AND REFLECTIONS ON HATE SPEECH IN NIGERIA

Aondover Eric Msughter, Caleb University Imota

All over the world, hate speech represents a form of threat that damages the lives of individuals and increases the sense of fear. A recent trend in journalistic malpractice in the country is the dissemination of hate speech and vulgar language. Within this context, the paper examined social media narratives and reflections on hate speech in Nigeria. The theoretical postulations of Castells’ Theory of Network Society, Durkheim’s Social Fact and Weber’s Social Action or Relations Theory, The Functional Theory of Campaign Discourse, Critical Discourse Analysis Theory and Critical Race Theory were used as the theoretical framework. Based on the literature, the paper argues that while hate speech is still being countered in the traditional media, the emergence of social media has broadened the battlefield in combating the hate speech saga. Social media offers an ideal platform to adapt and spread hate speech and foul language easily because of its decentralised, anonymous and interactive structure. The prevalence of hate speech on social media bordering on political and national issues, and even social interaction in Nigeria, especially on Facebook, Twitter, YouTube and LinkedIn, is becoming worrisome. This is because, apart from undermining the ethics of the journalism profession, it is contributing to disaffection among tribes, political classes, and religions, and even among friends in society. The paper concluded that the Nigerian public is inundated with negative social media usage, such as character assassination and negative political campaigns, at the expense of the dissemination of issues that help them make informed choices.

Keywords: hate speech, narratives, Nigeria, reflections, social media

11. HATE SPEECH AMONG SECURITY FORCES IN PORTUGAL

Tiago Lapa, Iscte - University Institute of Lisbon; Branco Di Fátima, LabCom - University of Beira Interior

The European Union and the United Nations recognize hate speech as a threat to democracy, human rights, and peace. However, there is no universal definition of what hate speech is. Its meaning has been fluid and diverse, varying across countries, governing bodies, and disciplinary lenses. There are also considerations about the distinction between offline and online hate speech, since digital platforms might allow anonymity, invisibility, the instantaneous spread of hateful content and the clustering of hate speakers with like-minded individuals (Brown, 2018) who might be instilled with a sense of empowerment and exemption. It has been argued that online hate speech can be described as toxic behavior, and in some cases as outright unlawful, exacerbated by Internet culture and the digital underworlds. On social media, hate speech can take different forms, but has been characterized by its hurtful or potentially harmful (visual and/or textual) language. This chapter presents a brief case study on the use of closed Facebook groups by security force officers to propagate hate speech against activists and minorities in Portugal. In this context, academics and legislators have always been faced with the contraposition between hate speech and freedom of expression. Where does one begin and the other end? One may question the efficacy of hate speech regulations, especially when law enforcement officers use social media to promote hate speech as if it were acceptable in democratic societies.

Keywords: hate speech, social media, security forces, Facebook, Portugal

Chapter 1 AGGRAVATED ANTI-ASIAN HATE SINCE COVID-19 AND THE #STOPASIANHATE MOVEMENT: CONNECTION, DISJOINTNESS, AND CHALLENGES

Lizhou Fan, University of Michigan, USA; Huizi Yu, University of Michigan, USA; Anne J. Gilliland, University of California, USA

1. Introduction

Anti-Asian hate is a growing social problem both in the US and around the world. Anti-Asian hate incidents, including hate speech and hate crimes, have seen unprecedented increases in the US since the beginning of the COVID-19 pandemic. From 2020 to 2021, anti-Asian hate crimes increased by 833% in New York City and 700% in Sacramento (Levin, 2021). Anti-Asian physical assaults in the US doubled as a share of total hate incidents, from 8.1% in early 2020 to 16.2% in late 2021 (Stop AAPI Hate coalition, 2020, 2022). Anti-Asian hate, however, is not a new social issue but rather is deeply rooted in long-standing and systematic racism in the US towards people of Asian origins. Among targets of anti-Asian hate, anti-Chinese hate has a particularly long and specific history, especially on the west coast, dating all the way back to the arrival of the first Chinese immigrants in the nineteenth century.

Two of the first US immigration laws, The Page Act of 1875 (1875) and The Chinese Exclusion Act (1882), intentionally and explicitly prohibited Chinese laborers from entering the country. Chinese laborers were seen as endangering “the good order of certain localities” (The Chinese Exclusion Act, 1882), creating workplace competition, and as foreigners who could not become US citizens and therefore should be kept out of the country. In addition to this legal discrimination and the daily prejudice experienced by Chinese immigrants, there is also a history of scapegoating Chinese people and communities during epidemics (Zhou, 2021). For example, when smallpox broke out in San Francisco in 1875, city health officers blamed Chinese immigrants in Chinatown; as they did again for the prevalence of venereal disease and during an unusual outbreak of bubonic plague between 1900 and 1904 (Trauner, 1978).

With such entrenched historical discrimination and stigmatization and the emergence of the COVID-19 virus as a direct triggering event, it is perhaps not surprising that toxic racism and even violent hate crimes escalated rapidly as the ensuing pandemic spread across the US and around the globe. When the Chinese city of Wuhan was identified in 2020 as the location of the first known cases of the virus, aggressive discrimination and stigmatization towards people of Chinese origins began, both online and in daily life. Egged on by a US President who persisted in referring to COVID-19 as the “Chinese virus” and “Kung flu”, anti-Asian and anti-Chinese haters again treated Asians and Asian Americans, especially those of Chinese heritage, as medical scapegoats, repeating disinformation that associated the virus with Chinese food, eating habits and hygiene (King, 2020; Q. Yang et al., 2021). On March 16, 2021, six women of Asian origin were killed in Atlanta, possibly out of racist motivations. This tragic event, known as the 2021 Atlanta Spa Shootings (Stewart, 2022), precipitated efforts to counter anti-Asian hate and a heightened sense of social urgency in the US about the imperative to address it. The #StopAsianHate movement, an online-offline hybrid movement, is an innovative form of social activism that combines the internationality of hashtag activism with local protests, and was one of the first responses to the aggravated anti-Asian hate. It has given a heightened presence to Asian and Asian American communities that heretofore had lower-than-average involvement in social movements and limited political influence. Social media in particular has amplified their voices and provided diversified channels for being heard.

This chapter discusses the processes and outcomes of our research, which applies mixed computational and human methods to identify the dynamics of anti-Asian hate speech and counterspeech on social media and to provide insights into the effectiveness of that counterspeech. After a brief review of recent research on computational techniques for analyzing hate speech and the effectiveness of counterspeech, the chapter describes the processes and methods we used to build and analyze two archives of tweets relating to anti-Asian hate and summarizes our findings in four main areas:

1. The trending anti-Asian hate speech categories on Twitter and their changes in volume during the early stage of the COVID-19 pandemic;

2. The volume and hashtag discourses of the counter anti-Asian hate movement, #StopAsianHate;

3. The connection and disjointness between anti-Asian hate and counterspeech, as well as current challenges in tackling anti-Asian hate;

4. The implications for documenting and analyzing social media data streams, which prototype and work towards “archival digital intelligence”.

2. Related Work

The prevalence of hate speech and counterspeech on social media has attracted increasing research interest over the past five years. Earlier research argued that counterspeech is a promising way to respond to and mitigate the harms caused by hate speech (Lepoutre, 2017). Mathew et al. (2018) proposed counterspeech as an effective method for tackling hate speech without harming freedom of speech. Other researchers sought to detect and classify hate and counterspeech, understand their dynamics, and provide suggestions for countering hate speech.

Finding hate and counterspeech and differentiating between them can be challenging because of the complexity of how humans use language to express themselves, especially how they use language to discuss controversial topics, engage in emotional or heated exchanges, express prejudiced notions, or counter comments they find objectionable. Today, social media are widely used for such discourse, and because their content can be captured and archived in digital form, they can yield a rich text base on which to perform research relating to different types of speech, their dynamics and their impact. With advances in computational tools and natural language processing (NLP) techniques, developing effective hate and counterspeech detection and classification systems has become possible and increasingly nuanced. For example, Mathew et al. (2020) developed a classifier based on social media user data and linguistic patterns that can detect whether a user is a hateful or a counter speaker. Garland et al. (2020) used an ensemble learning algorithm that pairs a variety of paragraph embeddings with regularized logistic regression to classify hate and counterspeech. Yu et al. (2022) found that neural networks for identifying hate and counterspeech can perform better if context such as the preceding comment in a conversation is taken into consideration. Although no direct comparisons have been undertaken of the variety of methods that are now available, context-aware and neural network-based NLP methods are widely believed to perform well in detecting and classifying hate and counterspeech.

Regarding the dynamics between hate and counterspeech, in addition to counterspeech’s effects on hate speech, recent research also examines the differences and interactions between them. Mathew et al. (2020) studied the topical difference between hate and counter speakers on Twitter and concluded that hate speakers, who often use subjective and negative expressions associated with envy, hate and ugliness, attracted more popularity than the counter users, who use more words related to government, law, and leadership. Garland et al. (2022) investigated the interactions of hate and counterspeech with different degrees of organizational behavior and found that organized counterspeech may help more than unorganized counterspeech in curbing online hate discourse.

In addition to policy-level insights, NLP researchers have developed and suggested implementing automatic, large-scale counterspeech generation to tackle hate speech, which can serve as “a third voice” that informs social media users of their inappropriate language use without harming the principles of freedom of speech (Alsagheer et al., 2022). In general domains and resource-rich languages, recent work showed that it is possible to combine large-scale pre-trained language models (for example, GPT-2 for synthetic text production) with additional tuning methods (for example, stochastic decoding) to generate counterspeech (Tekiroğlu et al., 2020; Tekiroğlu et al., 2022). In cross-domain and multilingual settings, it is also possible to create datasets and develop models that can help create counter-hate rhetoric (Chung et al., 2019, 2021). Beyond data and models, Zhu and Bhat (2021) found that a pipeline containing a generative model, a filter model, and a retrieval-based model can improve diversity and relevance in generating counterspeech for online hate speech.

Current research on hate and counterspeech is extensive and has demonstrated application potential. However, because the origin and development of hate speech are complex, hate speech targeted towards specific marginalized groups has been much less subject to analysis and can be missed if the scope of the research is too general. This scarcity of granular research focusing on specific communities that have been the target or subject of hate and counterspeech is a critical absence. Among the few studies to date, He et al. (2021) analyzed the development and diffusion of anti-Asian hate and counterspeech since COVID-19 on Twitter and found that counterspeech discouraged users from becoming hateful. However, due to the limited scope of this study’s data collection, some unpredictable but closely related events may not be covered by the preselected fixed set of keywords. For instance, the #StopAsianHate social movement is not directly connected to hate speech data collected using keywords related to COVID-19 and Asians. Moreover, none of the previous research distinguishes between different types of hate speech or tries to understand the motive or origin of the hate speech.

Thus, it is imperative to come up with an analytical framework, as well as a prototype, of hate and counterspeech that can make connections between discourses by applying detailed contextual aboutness that goes beyond binary classification. Instead of depending on in-dataset chronological closeness, overlapping keywords, or entangled user networks, as many other studies do, we report here on our efforts to find a cross-dataset connection between hate and counterspeech using granular information about the mappings between different categories of hateful and countering rhetoric.

3. Data and Methods

3.1. Data

To document and analyze hate speech related to China and counterspeech in the #StopAsianHate movement, we used the Twitter search API1 to obtain public social media discourse that contains hate and counterspeech. Using the query “china+and+coronavirus”, we collected about 3.5 million tweets and published them in the COVID-19 Hate Speech Twitter Archive (CHSTA)2 (Fan et al., 2020). Using the query term “StopAsianHate”, we collected more than 5.5 million tweets and published them in the Counter-anti-Asian Hate Twitter Archive (CAAHTA)3 (Fan et al., 2021). In this section, we introduce the overall volumes and discourses of hashtags of CHSTA and CAAHTA respectively.

3.1.1. CHSTA: The Covid-19 hate speech Twitter Archive

The blue line in Figure 1(a) shows the volume of total tweets obtained between March 8 and April 6, 2020, using the query “china+and+coronavirus”. There is an overall increasing trend of tweets across this period, with a substantial increase in the number of tweets between March 16 and March 19, 2020. We believe this increase is the result of burgeoning confirmed cases in the U.S. and growing media attention. Of the 3,457,402 tweets related to “china+and+coronavirus”, 25,467 are labeled as Hate Speech (Fan et al., 2020). We identify a tweet as hate speech if it contains at least one word from the Hatebase dictionary4, which contains thousands of discriminatory words. We note that although Hatebase is a continuously updated dictionary of hate words, it might not fully capture all hate speech that is used. It may also not be completely up to date with the language being developed and used at speed in social media streams. We use the red line in Figure 1(a) to show the trend in the volume of hate speech over time. This trend is closely associated with the trend of total tweets, both peaking between March 16 and March 29, 2020.
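The labeling rule described above (a tweet is flagged if it contains at least one dictionary term) can be sketched as follows. This is a minimal illustration, not the authors' implementation: the lexicon entries, the tokenizer pattern, and the function names are hypothetical placeholders, and the actual Hatebase vocabulary is not reproduced here.

```python
import re

# Hypothetical placeholder lexicon; the real Hatebase vocabulary is not shown.
LEXICON = {"slur_a", "slur_b"}

# Assumed tokenizer: lowercase runs of letters, digits, underscores, apostrophes.
TOKEN_RE = re.compile(r"[a-z0-9_']+")


def contains_lexicon_term(text, lexicon=LEXICON):
    """Return True if at least one token of the text is in the lexicon."""
    tokens = TOKEN_RE.findall(text.lower())
    return any(token in lexicon for token in tokens)


def label_tweets(tweets, lexicon=LEXICON):
    """Split tweets into (flagged, unflagged) lists using the one-term rule."""
    flagged, unflagged = [], []
    for tweet in tweets:
        if contains_lexicon_term(tweet, lexicon):
            flagged.append(tweet)
        else:
            unflagged.append(tweet)
    return flagged, unflagged
```

Because matching is done on whole tokens, a lexicon term embedded in a longer word is not flagged. Like any dictionary-based rule, this misses terms that are absent from the lexicon, which is the limitation noted in the paragraph above.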

a) Volume change of tweets

b) Wordcloud of hashtags

Figure 1 – Volume change and hashtag wordcloud of CHSTA.

The wordcloud in Figure 1(b) shows the discourse of tweets as reflected in their hashtags and provides a summary of user input topics. By analyzing the most frequently used hashtags, we identified several main categories of speech contained in the archive, including location, person, organization and abstract concept. The location hashtag “wuhan” is the most prevalent hashtag used in the archive, with over 24,000 occurrences. Other location hashtags such as Italy, USA, Hongkong and Hubei (of which Wuhan is the capital city) are also prominent. A large number of location hashtags appears to support the hypothesis that the trend of speech discourse is associated with demographics and geographic location. Additionally, hashtags such as “chinesevirus” and “wuhanvirus” carry discriminatory connotations and are a violation of the WHO’s convention for naming new human infectious diseases (World Health Organization, 2015). As suggested by this exploratory analysis, Twitter users frequently use discriminatory or prejudicial language against particular groups. Based on this result we conducted further analyses using lexicon-based information extraction methods.

3.1.2. CAAHTA: The counter-anti-Asian hate Twitter Archive

Figure 2(a) shows the longitudinal trend of the total number of tweets obtained using the query term “StopAsianHate”. We noticed two significant spikes: one on March 18, 2021, and another on March 30, 2021. To identify the driving force behind such sudden increases in public discourse about anti-Asian hate, we searched for key events around those times. The Atlanta Spa Shootings, which took place on March 16, 2021, led to the increase in discourse about anti-Asian hate (first spike) on March 18, 2021, and precipitated all subsequent social movements and events. On March 26, 2021, the popular South Korean boy band BTS advocated for “#StopAsianHate” on Twitter, which led to notably increased media attention and public discussion on Twitter in the subsequent days. By further analyzing the traffic peaks in relation to the key events, we observed that social influencers play vital roles in advocating for and promoting social events.

a) Volume change of tweets

b) Wordcloud of hashtags

Figure 2 – Volume change and hashtag wordcloud of CAAHTA.

As with the first hate speech archive (CHSTA), with CAAHTA we identified a set of more than 300 frequently used hashtags that could be used as specific query words in future archival ingest activities. From the wordcloud, we identified a few emerging topics and concerns. Besides hashtags that advocate for general actions such as #stopasianracism and #endantiasianviolence, we also observed hashtags that call for specific actions such as #gofundme (advocating fundraising for survivors of Asian hate crimes) and #racismisnotcomedy (responding to specific types of anti-Asian hate speech). Additionally, we observed hashtags of related racial movements such as #blacklivesmatter and #blm. These preliminary findings suggested that there might be multiple dimensions of counter-anti-Asian hate speech discourse, which we have analyzed systematically as described in the following sections.
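The hashtag frequency analysis that underlies the wordclouds can be sketched as follows. This is an illustrative sketch under stated assumptions, not the authors' pipeline: the regular expression, the case folding, and the helper names `count_hashtags` and `coverage_of_top` are choices made here for the example.

```python
import re
from collections import Counter

# Assumed pattern: '#' followed by word characters (letters, digits, underscore).
HASHTAG_RE = re.compile(r"#(\w+)")


def count_hashtags(tweets):
    """Count hashtag occurrences across tweets, ignoring letter case."""
    counts = Counter()
    for text in tweets:
        counts.update(tag.lower() for tag in HASHTAG_RE.findall(text))
    return counts


def coverage_of_top(counts, k):
    """Share of all hashtag uses accounted for by the k most frequent hashtags."""
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return sum(n for _, n in counts.most_common(k)) / total
```

Calling `counts.most_common(k)` gives a ranked list of frequent hashtags of the kind discussed above, and `coverage_of_top` indicates how much of the overall hashtag usage a chosen shortlist represents.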

3.2. Methods

In this study, we used Computational Discourse Analysis (CDA) to analyze the connection between the two separate social media data archives. CDA is a mixed method that uses natural language processing (NLP) to automatically detect cohesion and local coherence, which can then be used in making summative inferences (Dascalu, 2014), while the inclusion of theoretical frameworks enhances the applicability and specificity of the results of the computational analysis. As a data-driven method, CDA is widely applied to harvest data and build archives from social media and web pages (Andreotta et al., 2019; Fan et al., 2022), as well as in conducting predictive modeling (Emmert-Streib & Dehmer, 2021).

The CDA method is useful in this study because it operationalizes theoretical frameworks computationally and combines the strengths of both human and algorithmic processing. As already introduced in the Data section, we need to connect the hate and counterspeech discourse identified from the two social media archives, which were neither collected in the same time period nor built through queries that used overlapping keywords. Thus, human-identified contextual aboutness is key to making connections between the two corpora, since traditional computational methods such as topic modeling or text clustering are unable to construct this high-level connection.

We therefore first clustered (through computation) and labeled (through human annotation) tweets in CHSTA into three categories, namely Stereotyping, Stigmatization, and Derogatory Language, that are potentially indicative of anti-Asian hate speech. We then analyzed the top hashtags in CAAHTA by labeling (through human annotation) counterspeech categories, including Advocating Action, Influencing Narrative Change, and Building Identity, that corresponded to the three hate speech categories already applied to CHSTA, as well as three dimensions of social movement and ten sub-dimensions, which are discussed below. Finally, we combined the above analyses, focusing on any matching between hate and counter categories.

3.2.1. Clustering and analyzing hate speech in CHSTA

Figure 3 – The workflow of computational discourse analysis for CHSTA.

Note: The color of each frame represents the category of the sub-step – green frames are in-step text sources, yellow frames are end-of-step text results, red frames are actions of computational decision-making or human analysis references, and blue frames are API querying actions.

As Figure 3 shows, after retrieving and detecting hate speech in CHSTA, as described in the Data section above, we proceeded through the following processing steps. To cluster and analyze hate speech in CHSTA, we first used Sentence-BERT (SBERT), a transformer-based pre-trained NLP model, to derive semantically meaningful sentence embeddings (Reimers & Gurevych, 2019). SBERT sentence embeddings, or sentence vectors, support effective comparison between sentence meanings and can group semantically similar sentences together. Our implementation used SBERT5 with Python and the pre-trained model ‘covid-twitter-bert-v2’ (Müller et al., 2020), which is trained on COVID-19-related Twitter data and can map tweets in CHSTA to a 512-dimensional vector space. We then clustered these sentence embeddings with K-Means, using the scikit-learn package in Python6. We used Lloyd’s K-Means clustering algorithm (Lloyd, 1982), since it is simple and efficient in processing large-scale language embeddings with high dimensions7, and we obtained 100 clusters of potential anti-Asian hate speech.
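To make the clustering step concrete, the following is a minimal pure-Python sketch of Lloyd's algorithm. It is a stand-in written for illustration and is not the scikit-learn implementation the study used; the function names, the random initialization, and the stopping rule are assumptions made here. In the study the inputs would be SBERT sentence embeddings and the number of clusters would be 100, whereas a sketch like this is only practical on small toy vectors.

```python
import random


def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def mean_vector(vectors):
    """Component-wise mean of a non-empty list of vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def lloyd_kmeans(vectors, k, max_iter=100, seed=0):
    """Cluster vectors with Lloyd's algorithm; returns (labels, centroids)."""
    rng = random.Random(seed)
    # Assumed initialization: k distinct input vectors chosen at random.
    centroids = [list(v) for v in rng.sample(vectors, k)]
    labels = None
    for _ in range(max_iter):
        # Assignment step: attach each vector to its nearest centroid.
        new_labels = [
            min(range(k), key=lambda j: squared_distance(v, centroids[j]))
            for v in vectors
        ]
        if new_labels == labels:
            break  # assignments stopped changing, so the algorithm has converged
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for j in range(k):
            members = [v for v, lab in zip(vectors, labels) if lab == j]
            if members:  # an empty cluster keeps its previous centroid
                centroids[j] = mean_vector(members)
    return labels, centroids
```

The two alternating steps (assign, then update) are the core of the method. Library implementations typically add refinements such as smarter initialization and multiple restarts.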

To further analyze the clusters of hate speech, we began with the widely accepted United Nations (UN) definition of COVID-19-related hate speech: “a broad range of disparaging expressions against certain individuals and groups that have emerged or been exacerbated as a result of the new coronavirus disease outbreak – from scapegoating, stereotyping, stigmatization and the use of derogatory, misogynistic, racist, xenophobic, Islamophobic or antisemitic language8.” Focusing specifically on anti-Asian hate speech during COVID-19 and considering the expressiveness of concepts in short expressions in natural language, we used the categories mentioned in the UN definition to come up with an aggregated characterization framework. As Table 1 shows, our hate speech analytical framework has three categories: Stereotyping, Stigmatization, and Derogatory Language. Notably, these labels combine elements of the UN definition, and we provide simple examples as explanations.

| Category | Definition and Notes | Relation to UN Definition | Simple Example |
| --- | --- | --- | --- |
| Stereotyping | A fixed idea that many people have about a thing or a group that may often be untrue or only partly true. Often without hate words. | Corresponds to stereotyping, racist, and xenophobic language. | “Chinese eat bat.” |
| Stigmatization | An action of describing or regarding someone or something as disgraceful or with great disapproval. Often uses misinformation or disinformation for reasoning. May contain hate words. | Corresponds to stigmatization and scapegoating. | “China is f*ck*ng evil because it created coronavirus.” |
| Derogatory Language | Language showing a critical or disrespectful attitude without apparent reason. Often because of xenophobia and racism. Often contains hate words. | Corresponds to derogatory, racist, and xenophobic language. | Racial slurs. |

Note: The simple examples above are provided as examples of hate speech and may cause discomfort. The authors do not agree with and strongly condemn any form of hate speech or hate crime, including the contents above. Some of the letters in the hate words have been masked with asterisks.

Table 1 – Categories of hate speech in CHSTA.

3.2.2 Categorizing hashtag activism in CAAHTA

Figure 4 – The workflow of computational discourse analysis for CAAHTA.

Note: The color of each frame represents the category of the sub-step – green frames are in-step text sources, yellow frames are end-of-step text results, red frames are actions of computational decision-making or human analysis references, and blue frames are API querying actions. C1 corresponds to the number of dimensions of hashtag activism and C2 shows the binary annotation of counterspeech correspondence.

As Figure 4 shows, after retrieving tweets in CAAHTA, we extracted the hashtags for analysis. On social media, hashtags are representative keywords that are used to build up public support for affirmative social-political changes and social movements (Goswami, 2018). When a hashtag or a group of hashtags is used intensively, these short user inputs can serve similar purposes to slogans in protests, and they promote searching, liking, and forwarding the contents behind the hashtag through online social networks. When hashtags trend, the narrative agency and activist messages associated with these hashtags disseminate rapidly and can become the catalyst for online social movements (e.g., #BlackLivesMatter and #MeToo) (Xiong et al., 2019; G. Yang, 2016).

We then based our analysis of the hashtag activism in the #StopAsianHate social movement on 315 frequently used hashtags that encompassed more than 96% of all the hashtag uses. We applied the adaptation by Fan et al. (2021) of Reuning and Banaszak’s analytical framework for social movement phenomena (Reuning & Lee, 2019), which resulted in the identification of three dimensions (Advocating Action, Influencing Narrative Change, and Building Identity) as well as 10 sub-dimensions of the hashtags’ functionality in representing activism. As Table 2 indicates, the Advocating Action dimension includes Specific Advocate, which advocates for specific actions, and General Advocate, which contains non-specific or overarching advocacy. The Influencing Narrative Change dimension is divided into sub-dimensions based on identity groups: AAPI Influencer, covering social influencers with Asian origins, and General Influencer, covering social influencers with ethnicities other than Asian or Asian American. The Building Identity dimension includes six sub-dimensions of broader contexts related to Asian and Asian American identities and covers frequently mentioned concepts and incidents related to the unfolding social movement. We also provide examples for each dimension and its sub-dimensions in Table 2.

Dimension

Sub-dimension