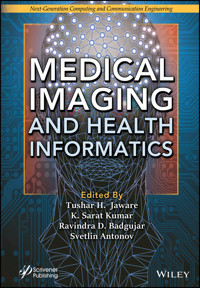

Medical Imaging and Health Informatics E-Book


- Publisher: John Wiley & Sons

- Category: Science and New Technologies

- Series: Next Generation Computing and Communication Engineering

- Language: English

MEDICAL IMAGING AND HEALTH INFORMATICS

Provides a comprehensive review of artificial intelligence (AI) in medical imaging as well as practical recommendations for the usage of machine learning (ML) and deep learning (DL) techniques for clinical applications.

Medical imaging and health informatics is a subfield of science and engineering which applies informatics to medicine and includes the study of design, development, and application of computational innovations to improve healthcare. The health domain has a wide range of challenges that can be addressed using computational approaches; therefore, the use of AI and associated technologies is becoming more common in society and healthcare. Currently, deep learning algorithms are a promising option for automated disease detection with high accuracy. Clinical data analysis employing these deep learning algorithms allows physicians to detect diseases earlier and treat patients more efficiently. Since these technologies have the potential to transform many aspects of patient care, disease detection, disease progression and pharmaceutical organization, approaches such as deep learning algorithms, convolutional neural networks, and image processing techniques are explored in this book.

This book also delves into a wide range of image segmentation, classification, registration, computer-aided analysis applications, methodologies, algorithms, platforms, and tools; and gives a holistic approach to the application of AI in healthcare through case studies and innovative applications. It also shows how image processing, machine learning and deep learning techniques can be applied for medical diagnostics in several specific health scenarios such as COVID-19, lung cancer, cardiovascular diseases, breast cancer, liver tumor, bone fractures, etc. Also highlighted are the significant issues and concerns regarding the use of AI in healthcare together with other allied areas, such as the Internet of Things (IoT) and medical informatics, to construct a global multidisciplinary forum.

Audience

The core audience comprises researchers, industry engineers, scientists, radiologists, healthcare professionals, and data scientists who work in health informatics, computer vision, and medical image analysis.

Pages: 581

Year of publication: 2022

Table of Contents

Cover

Title Page

Copyright

Preface

1 Machine Learning Approach for Medical Diagnosis Based on Prediction Model

1.1 Introduction

1.2 Machine Learning Approach and Prediction

1.3 Material and Experimentation

1.4 Performance Metrics and Evaluation of Classifiers

1.5 Discussion and Conclusion

References

2 Applications of Machine Learning Techniques in Disease Detection

2.1 Introduction

2.2 Types of Machine Learning Techniques

2.3 Future Research Directions

References

3 Dengue Incidence Rate Prediction Using Nonlinear Autoregressive Neural Network Time Series Model

3.1 Introduction

3.2 Related Literature Study

3.3 Methods and Materials

3.4 Result Discussions

3.5 Conclusion and Future Work

Acknowledgment

References

4 Early Detection of Breast Cancer Using Machine Learning

4.1 Introduction

4.2 Methodology

4.3 Segmentation

4.4 Feature Extraction

4.5 Classification

4.6 Performance Evaluation Methods

4.7 Output

4.8 Results and Discussion

4.9 Conclusion and Future Scope

References

5 Machine Learning Approach for Prediction of Lung Cancer

5.1 Introduction

5.2 Feature Extraction and Lung Cancer Analysis

5.3 Methodology

5.4 Proposed System and Implementation

5.5 Conclusion

References

6 Segmentation of Liver Tumor Using ANN

6.1 Introduction

6.2 Liver Tumor

6.3 Benefits of CT to Diagnose Liver Cancer

6.4 Literature Review

6.5 Interactive Liver Tumor Segmentation by Deep Learning

6.6 Existing System

6.7 Proposed System

6.8 Result and Discussion

6.9 Future Enhancements

6.10 Conclusion

References

7 DMSAN: Deep Multi-Scale Attention Network for Automatic Liver Segmentation From Abdomen CT Images

7.1 Introduction

7.2 Related Work

7.3 Methodology

7.4 Experimental Analysis

7.5 Results

7.6 Result Comparison With Other Methods

7.7 Discussion

7.8 Conclusion

Acknowledgement

References

8 AI-Based Identification and Prediction of Cardiac Disorders

8.1 Introduction

8.2 Related Work

8.3 Classifiers and Methodology

8.4 Result Analysis

8.5 Conclusions and Future Scope

References

9 An Implementation of Image Processing Technique for Bone Fracture Detection Including Classification

9.1 Introduction

9.2 Existing Technology

9.3 Image Processing

9.4 Overview of System and Steps

9.5 Results

9.6 Conclusion

References

10 Improved Otsu Algorithm for Segmentation of Malaria Parasite Images

10.1 Introduction

10.2 Literature Review

10.3 Related Works

10.4 Proposed Algorithm

10.5 Experimental Results

10.6 Conclusion

References

11 A Reliable and Fully Automated Diagnosis of COVID-19 Based on Computed Tomography

11.1 Introduction

11.2 Background

11.3 Methodology

11.4 Results

11.5 Conclusion

References

12 Multimodality Medical Images for Healthcare Disease Analysis

12.1 Introduction

12.2 Brief Survey of Earlier Works

12.3 Medical Imaging Modalities

12.4 Image Fusion

12.5 Clinical Relevance for Medical Image Fusion

12.6 Data Sets and Softwares Used

12.7 Generalized Image Fusion Scheme

12.8 Medical Image Fusion Methods

12.9 Conclusions

References

13 Health Detection System for COVID-19 Patients Using IoT

13.1 Introduction

13.2 Related Works

13.3 System Design

13.4 Proposed System for Detection of Corona Patients

13.5 Results and Performance Analysis

13.6 Conclusion

References

14 Intelligent Systems in Healthcare

14.1 Introduction

14.2 Brain Computer Interface

14.3 Robotic Systems

14.4 Voice Recognition Systems

14.5 Remote Health Monitoring Systems

14.6 Internet of Things–Based Intelligent Systems

14.7 Intelligent Electronic Healthcare Systems

14.8 Conclusion

References

15 Design of Antennas for Microwave Imaging Techniques

15.1 Introduction

15.2 Literature

15.3 Design and Development of Wideband Antenna

15.4 Results and Inferences

15.5 Conclusion

References

16 COVID-19: A Global Crisis

16.1 Introduction

16.2 Clinical Manifestation and Pathogenesis

16.3 Diagnosis and Control

16.4 Control Measures

16.5 Immunization

16.6 Conclusion

References

17 Smart Healthcare for Pregnant Women in Rural Areas

17.1 Introduction

17.2 National/International Surveys Reviews

17.3 Architecture

17.4 Anganwadi’s Collaborative Work

17.5 Schemes Offered by Central/State Governments

17.6 Smart Healthcare System

17.7 Data Collection

17.8 Hardware and Software Features of HCS

17.9 Implementation

17.10 Results and Analysis

17.11 Conclusion

References

18 Computer-Aided Interpretation of ECG Signal—A Challenge

18.1 Introduction

18.2 The Cardiovascular System

18.3 Electrocardiogram Leads

18.4 Artifacts/Noises Affecting the ECG

18.5 The ECG Waveform

18.6 Cardiac Arrhythmias

18.7 Electrocardiogram Databases

18.8 Computer-Aided Interpretation (CAD)

18.9 Computational Techniques

18.10 Conclusion

References

Index

Also of Interest

End User License Agreement


Scrivener Publishing

100 Cummings Center, Suite 541J

Beverly, MA 01915-6106

Next-Generation Computing and Communication Engineering

Series Editors: Dr. G. R. Kanagachidambaresan and Dr. Kolla Bhanu Prakash

Developments in artificial intelligence are made more challenging because the involvement of multidomain technology creates new problems for researchers. Therefore, to help meet the challenge, this book series concentrates on next-generation computing and communication methodologies involving smart and ambient environment design. It is an effective publishing platform for monographs, handbooks, and edited volumes on Industry 4.0, agriculture, smart city development, and new computing and communication paradigms. Although the series mainly focuses on design, it also addresses analytics and investigation of industry-related real-time problems.

Publishers at Scrivener

Martin Scrivener

Phillip Carmical

Medical Imaging and Health Informatics

Edited by

Tushar H. Jaware

K. Sarat Kumar

Ravindra D. Badgujar

and

Svetlin Antonov

This edition first published 2022 by John Wiley & Sons, Inc., 111 River Street, Hoboken, NJ 07030, USA and Scrivener Publishing LLC, 100 Cummings Center, Suite 541J, Beverly, MA 01915, USA

© 2022 Scrivener Publishing LLC

For more information about Scrivener publications please visit www.scrivenerpublishing.com.

All rights reserved. No part of this publication may be reproduced, stored in a retrieval system, or transmitted, in any form or by any means, electronic, mechanical, photocopying, recording, or otherwise, except as permitted by law. Advice on how to obtain permission to reuse material from this title is available at http://www.wiley.com/go/permissions.

Wiley Global Headquarters

111 River Street, Hoboken, NJ 07030, USA

For details of our global editorial offices, customer services, and more information about Wiley products visit us at www.wiley.com.

Limit of Liability/Disclaimer of Warranty

While the publisher and authors have used their best efforts in preparing this work, they make no representations or warranties with respect to the accuracy or completeness of the contents of this work and specifically disclaim all warranties, including without limitation any implied warranties of merchantability or fitness for a particular purpose. No warranty may be created or extended by sales representatives, written sales materials, or promotional statements for this work. The fact that an organization, website, or product is referred to in this work as a citation and/or potential source of further information does not mean that the publisher and authors endorse the information or services the organization, website, or product may provide or recommendations it may make. This work is sold with the understanding that the publisher is not engaged in rendering professional services. The advice and strategies contained herein may not be suitable for your situation. You should consult with a specialist where appropriate. Neither the publisher nor authors shall be liable for any loss of profit or any other commercial damages, including but not limited to special, incidental, consequential, or other damages. Further, readers should be aware that websites listed in this work may have changed or disappeared between when this work was written and when it is read.

Library of Congress Cataloging-in-Publication Data

ISBN 978-1-119-81913-4

Cover image: Pixabay.Com

Cover design by Russell Richardson

Set in size of 11pt and Minion Pro by Manila Typesetting Company, Makati, Philippines

Printed in the USA

10 9 8 7 6 5 4 3 2 1

Preface

There are many aspects to medical imaging and health informatics, including how they can be applied to real-world biomedical and healthcare challenges. Therefore, this book provides a collection of cutting-edge artificial intelligence (AI) and other allied approaches for healthcare and biomedical applications. Moreover, it presents a diverse collection of state-of-the-art techniques and recent advancements in AI approaches, geared toward the challenges that healthcare institutions and hospitals face in terms of early detection of diseases, data processing, healthcare monitoring, and prognosis of diseases.


Because this book emphasizes issues arising from the growing profusion and complexity of data in the healthcare sector, it will help scholars focus on future research problems and objectives. Our principal goal is to leverage AI, biomedical informatics, and health informatics for effective analysis and application, and so to make a tangible contribution to innovative breakthroughs in healthcare.

Dr. Tushar H. Jaware

Dr. K. Sarat Kumar

Dr. Ravindra D. Badgujar

Dr. Svetlin Antonov

April 2022

1 Machine Learning Approach for Medical Diagnosis Based on Prediction Model

Hemant Kasturiwale¹*, Rajesh Karhe² and Sujata N. Kale³

¹Thakur College of Engineering and Technology, Kandivali (East), Mumbai, MS, India

²Shri Gulabrao Deokar College of Engineering, Jalgaon, MS, India

³Department of Applied Electronics, Sant Gadge Baba University, Amravati, MS, India

Abstract

The electrocardiogram (ECG) is one of the most crucial biosignals for the critical analysis of the heart. The heart is one of the human body's most vital organs, and a variety of control mechanisms regulate its activity. Heart rate is an essential measure of cardiac function; it is derived from the time interval between two successive "R" peaks of the ECG. Heart rate varies with the state of the heart. A machine learning technique is used to classify statistical parameters derived from a physiologically important measure known as heart rate variability (HRV), in order to predict the individual's physical state, including sleep, examination, and exercise. The chapter focuses on the use of manually classified data. Every hospital, clinic, and diagnostic center produces massive quantities of information, such as patient records and test results, that can be used to predict the presence of heart disease and to provide care in its early stages. The results are validated and compared with predictions obtained from different algorithms. Classification and prediction are data mining techniques that use training data to construct a model, which is then applied to test data to predict outcomes. Different algorithms have been applied to disease datasets to diagnose chronic disease, with positive findings. There is a need to establish an appropriate technique for the diagnosis of chronic diseases. This chapter provides insight into various kinds of classification schemes for chronic disease prediction. Readers will become familiar with machine learning and with the classifiers used to extract knowledge from datasets.

Keywords: ECG, biosignals, machine learning, HRV, classification, prediction, cardiac diseases

1.1 Introduction

Biosignals are used in various kinds of medical data, such as electroencephalography (EEG), which captures electric fields created by brain cell activity, and magnetoencephalography (MEG), which captures magnetic fields produced by electrical brain cell activity. These signals originate from biological activity in various parts of the body. The most popular methods currently used to record biosignals in clinical research are described below, along with a brief overview of their functionality and related clinical applications [1].

1.1.1 Heart System and Major Cardiac Diseases

Physiological activity in the body generates the following types of signals:

Magnetoencephalography (MEG) signals

Electromyography (EMG) signals

Electrooculography (EOG) signals

Phonocardiography (PCG) signals

Electrocorticography (ECoG) signals

Electrocardiography (ECG or EKG) signals

Intervals between the waves are used as indicators of irregular cardiac operation; e.g., a prolonged PR interval, measured from atrial activation to the start of ventricular activation, may indicate cardiac failure [2, 3]. In addition, ECGs are used to study arrhythmias [4], coronary artery disease [5], and other heart disorders. For a biosignal, the data size is directly proportional to both the sampling frequency (or sampling rate) and the recording period, which in turn affects the speed of the data acquisition process. The ECG is essential for research on heart rhythm and heart disease. The heart conditions of interest are as follows:

a) Arrhythmias

b) Coronary heart disease

c) Various types of heart blocks

d) Fibrillations

e) Congestive heart failure (CHF)

f) Myocardial infarction (MI)

g) Premature ventricular contraction (PVC)
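The proportionality between data size, sampling frequency, and recording period mentioned earlier in this section can be made concrete with a short calculation. This sketch assumes an uncompressed record with 16-bit (2-byte) samples and one channel by default; those defaults and the helper name `record_size_bytes` are illustrative assumptions, not values reported in this chapter.

```python
def record_size_bytes(fs_hz: float, duration_s: float, channels: int = 1,
                      bytes_per_sample: int = 2) -> int:
    """Raw (uncompressed) size of a sampled biosignal record in bytes.

    The size grows linearly with both the sampling frequency and the
    recording period, for a fixed channel count and sample width.
    """
    n_samples = round(fs_hz * duration_s)  # samples per channel
    return n_samples * channels * bytes_per_sample


# Compare the two sampling frequencies used in this chapter (256 Hz and
# 500 Hz) for an assumed 5-min, single-channel, 16-bit record.
for fs in (256, 500):
    print(f"{fs} Hz, 5 min: {record_size_bytes(fs, 5 * 60)} bytes")
# 256 Hz, 5 min: 153600 bytes
# 500 Hz, 5 min: 300000 bytes
```

Doubling either the sampling frequency or the recording period doubles the size, which is why long-term recordings at high sampling rates are costly to store and transfer.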

1.1.2 ECG for Heart Rate Variability Analysis

The electrocardiogram (ECG) is a waveform pattern that describes the state of cardiac activity and cardiac health. The ECG signal is non-stationary and nonlinear, with a frequency spectrum between 0.05 and 100 Hz [6]. ECG analysis methods, including heart rate variability (HRV) analysis, QRS detection, and ECG post-processing, have advanced considerably since the device was first introduced. The term HRV refers to the variation in the time interval between successive heartbeats.
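Since HRV is built from the intervals between successive heartbeats, a natural first step is to turn detected R-peak positions into RR intervals and beat-to-beat heart rate. This is a minimal sketch that assumes the R peaks have already been detected (for example by a QRS detector) and are given as sample indices; the function names and the example indices are hypothetical.

```python
def rr_intervals_ms(r_peak_samples: list[int], fs_hz: float) -> list[float]:
    """RR intervals in milliseconds between successive R-peak sample indices."""
    if len(r_peak_samples) < 2:
        raise ValueError("need at least two R peaks")
    return [(b - a) * 1000.0 / fs_hz
            for a, b in zip(r_peak_samples, r_peak_samples[1:])]


def instantaneous_hr_bpm(rr_ms: list[float]) -> list[float]:
    """Beat-to-beat heart rate in beats per minute: HR = 60000 / RR[ms]."""
    return [60000.0 / rr for rr in rr_ms]


# Hypothetical R peaks detected in a signal sampled at 250 Hz.
rr = rr_intervals_ms([0, 200, 410, 600], fs_hz=250.0)  # [800.0, 840.0, 760.0]
hr = instantaneous_hr_bpm(rr)                           # first value: 75.0 bpm
```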

1.1.3 HRV for Cardiac Analysis

HRV is an important health assessment parameter. For example, it has been used to detect and predict human stress [1], stroke, hypertension, sleep disorders, age, gender, and many more. Popular HRV analysis techniques fall into three categories: time-domain methods; spectral (frequency-domain) methods based on the fast Fourier transform (FFT) [7]; and nonlinear methods, including Markov modeling, entropy-based metrics [8], and probabilistic modeling [9]. Seven commonly used statistical time-domain parameters [10], calculated from 5-min HRV segments, are considered for implementation: RMSSD, SDNN, SDANN, SDANNi, SDSD, pNN50, and autocorrelation. HRV can also be calculated with a peripheral pulse analyzer (PPA), which works from measured peripheral pulses rather than from the ECG. The focus here is on ECG-based HRV measurement, but the PPA-based method is also considered for validation [11]. Nonlinear approaches aim to quantify the structure and complexity of the RR-interval time series. HRV signals are non-stationary and nonlinear in nature. Analyzing HRV dynamics with methods from chaos theory and nonlinear system theory is motivated by findings that the processes involved in cardiovascular control are likely to interact with each other nonlinearly. The indices (features/parameters) are discussed further in Section 1.3.2.
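Several of the time-domain parameters named above can be computed directly from an RR (NN) series. This is a minimal sketch, assuming the intervals are already in milliseconds and cleaned of ectopic beats and artifacts; the function name is hypothetical, and conventions differ between tools (for example, sample versus population standard deviation for SDNN). SDANN and SDANNi need long recordings split into 5-min windows, and autocorrelation is a separate computation, so they are omitted here.

```python
import math
import statistics


def time_domain_hrv(rr_ms: list[float], x_ms: float = 50.0) -> dict[str, float]:
    """Common time-domain HRV indices from RR (NN) intervals in milliseconds."""
    if len(rr_ms) < 3:
        raise ValueError("need at least three RR intervals")
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]  # successive differences
    nn_x = sum(1 for d in diffs if abs(d) > x_ms)        # NNx (NN50 for x = 50)
    return {
        "meanRR": statistics.fmean(rr_ms),
        "SDNN": statistics.stdev(rr_ms),                 # sample standard deviation
        "RMSSD": math.sqrt(statistics.fmean([d * d for d in diffs])),
        "SDSD": statistics.stdev(diffs),
        "NNx": float(nn_x),
        "pNNx": 100.0 * nn_x / len(diffs),               # pNN50 for x = 50
    }


# Made-up RR intervals: successive differences are 10, -20, 60, -30 ms,
# so exactly one difference exceeds 50 ms.
print(time_domain_hrv([800, 810, 790, 850, 820]))
```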

1.2 Machine Learning Approach and Prediction

Machine learning is closely connected to (and sometimes overlaps with) computational statistics, which concentrates on making predictions using computers. It also has close connections with mathematical optimization, which supplies methods, theory, and application domains to the field. A related sub-area focuses on exploratory data analysis and is known as unsupervised learning [2]. Unsupervised machine learning (ML) [11] can be used to learn baseline behavior profiles for different entities [12]. To learn from past data and detect useful trends in massive, unstructured, and complex databases, ML algorithms use a range of statistical, probabilistic, and optimization methods [12]. Their applications include automatic text categorization, network intrusion detection, junk e-mail filtering, credit-card fraud detection, analysis of consumer buying behavior, manufacturing optimization, and disease modeling. Most of these applications use supervised rather than unsupervised variants of ML algorithms [13].

Heart disease data include several features that predict heart disease. This large volume of medical data allows data mining techniques to discover trends and help diagnose patients. The volume of historical medical data is very large, so computational methods are required to process it. Data mining is an analytical technique that extracts hidden patterns from historical data. There are several different classification schemes for disease datasets. The importance of applying ML techniques to classify the statistical parameters above in cardiological signal analysis, based on RR interval estimates, cannot be overemphasized, so a precise method of calculation needs to be developed. Existing research shows that conventional systems for chronic disease prediction have not produced reliable diagnostic systems, because they make it difficult to obtain correct responses within a short response time. Adaptive systems, by comparison, can increase the chances of success and can advise clinicians on care decisions. Current healthcare programs can be enhanced by the efficient use of parallel classification systems, as these support parallel implementation on multiple systems. Parallel classification systems also have great potential to increase the predictive performance of diagnostic systems for chronic diseases [13, 14]. Of the available classifiers, those discussed here are K-Nearest Neighbor (KNN), Support Vector Machine (SVM), Ensemble AdaBoost (EAB), and Random Forest (RF).
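Of the classifiers named above, K-Nearest Neighbor is the simplest to show in a few lines. The following is a generic k-NN sketch in plain Python, not the chapter's implementation; the two-feature training vectors are made-up toy values with no clinical meaning, and in practice a library implementation (for example scikit-learn's `KNeighborsClassifier`) would normally be used.

```python
import math
from collections import Counter


def knn_predict(train_x, train_y, query, k=3):
    """Label `query` by majority vote among its k nearest training samples.

    Distances are Euclidean, so features should be on comparable scales
    (for example z-score normalized) before use.
    """
    if not 1 <= k <= len(train_x):
        raise ValueError("k must be between 1 and the number of samples")
    neighbours = sorted(
        zip((math.dist(x, query) for x in train_x), train_y),
        key=lambda pair: pair[0],  # sort by distance only
    )[:k]
    votes = Counter(label for _, label in neighbours)
    return votes.most_common(1)[0][0]


# Toy (feature_1, feature_2) vectors; 1 = positive class, 0 = negative class.
train_x = [(20, 30), (25, 35), (22, 28), (60, 70), (65, 75), (58, 68)]
train_y = [1, 1, 1, 0, 0, 0]
print(knn_predict(train_x, train_y, (23, 31)))  # the three nearest are class 1
```

An odd k avoids voting ties in a binary problem, and because the distance is Euclidean, HRV features measured in different units (milliseconds versus unitless ratios) should be standardized first.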

1.3 Material and Experimentation

The proposed method comprises two phases:

processing the enrolment database (PEP) and

Prediction (P).

Figure 1.1 shows the types of research databases, which are created according to the acquisition units. The standard database includes recordings at varying sampling frequencies from male and female subjects of different age groups.

Subjects and their corresponding signals were acquired under different set conditions, including female and male subjects of varying age groups, at sampling frequencies of 256 and 500 Hz [6, 15]. The model is tested for cardiac HRV-based analysis with both ECG and non-ECG (PPA) data. For this research, data from the congestive heart failure (CHF), arrhythmia, sudden cardiac death, and ventricular arrhythmia databases are considered, along with externally obtained ECG and non-ECG data.

1.3.1 Data and HRV

The research uses standard data from normal and cardiac subjects [16] as well as externally acquired ECG or non-ECG data. Hence, the proposed techniques for the classification of cardiac diseases use data with varying characteristics. Data acquisition (DAQ) cards help in creating a database of ECG or non-ECG signals. Figure 1.2 shows the HRV data and categorization; care is taken to ensure that the data obtained are free from significant artifacts or noise. The data sources and the signal acquisition systems are part of the overall system. The following methods/techniques are used for data acquisition in support of the standard tools.

Figure 1.1 Acquisition system and sources [source: 14].

Figure 1.2 ECG acquisition system with connection (low cost).

1.3.1.1 HRV Data Analysis via ECG Data Acquisition System

The analog ECG acquisition circuit can be built with one or three channels. Analog devices serving as a signal conditioning circuit are used for electronic data collection.

The three-channel data acquisition system is attached to the subject's body through probes for recording. These probes collect the ECG signal and feed it to the ECG kit, which comprises low-pass filters and an ECG chip. The electronic assembly is customized to the acquired signal, which is processed further up to detection. The myDAQ software, which works with the NI DAQ, records the ECG wave, processes it, and provides an analysis of the subject's heart condition. Three probes are connected to the ECG kit to test the signal, and a CRO (cathode-ray oscilloscope) is used to verify it. As the first step of processing, the ECG signal extracted from the subject is filtered; the NI DAQ card then processes the clean electrical signal. An analog front end built from an MCP6004 op-amp, an instrumentation amplifier, and other passive components is preferred as a low-cost and effective option. The circuit removes baseline noise and power-line interference and can extract data even from recordings only a few seconds long. It is effective for short-duration records and has low storage capacity. Its noise and artifact reduction is effective only to some extent. Wireless connectivity is another major advantage of this system.
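The circuit above suppresses power-line interference in analog hardware. As a hedged digital counterpart (not the authors' circuit), the sketch below applies a second-order IIR notch filter using the coefficient formulas from Robert Bristow-Johnson's Audio EQ Cookbook. The 50 Hz mains frequency (60 Hz in some regions), the quality factor q = 30, and the function name are assumptions chosen for illustration.

```python
import math


def notch_filter(signal, fs_hz, f0_hz=50.0, q=30.0):
    """Second-order IIR notch (Audio EQ Cookbook coefficients) that suppresses
    power-line interference at f0_hz in a sampled signal."""
    w0 = 2.0 * math.pi * f0_hz / fs_hz
    alpha = math.sin(w0) / (2.0 * q)
    cos_w0 = math.cos(w0)
    a0 = 1.0 + alpha
    # Feed-forward (b) and feedback (a) coefficients, normalized by a0.
    b0, b1, b2 = 1.0 / a0, -2.0 * cos_w0 / a0, 1.0 / a0
    a1, a2 = -2.0 * cos_w0 / a0, (1.0 - alpha) / a0
    x1 = x2 = y1 = y2 = 0.0  # previous two inputs and outputs
    out = []
    for x in signal:
        y = b0 * x + b1 * x1 + b2 * x2 - a1 * y1 - a2 * y2
        out.append(y)
        x2, x1 = x1, x
        y2, y1 = y1, y
    return out


# A pure 50 Hz "hum" sampled at 500 Hz for 5 s: after the start-up
# transient decays, the notch output is close to zero.
fs = 500.0
hum = [math.sin(2 * math.pi * 50 * n / fs) for n in range(2500)]
cleaned = notch_filter(hum, fs)
```

Components well away from the notch, such as a 10 Hz sine, pass almost unchanged. Because a causal IIR filter shifts phase near f0, running it forward and then backward over the whole record is a common way to avoid distorting ECG morphology.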

1.3.2 Methodology and Techniques

The proposed method decomposes HRV signals into a collection of features and checks their consistency. Features are derived from the components of the HRV signals. Finally, classification is performed by the classification unit. The classifiers are first used for inspection and checking, and the best classifier is selected based on the classification parameters.

The proposed approach comprises two phases: Enrolment Database Processing (PEP) and Prediction and Identification (PI). All available data samples and ECG signals obtained by the units are fed for analysis, as shown in Figure 1.3. In addition, the proposed model accepts age, gender, and (static) features as further input conditions [17]. The proposed model design, heart disease dataset, data pre-processing, and performance measurement are critical and have been addressed carefully. The proposed methods are developed based on the following essential parameters:

Short-term and long-term analysis

Feature indices and their sequencing

Mathematical indices

The technique for a standard database and classifiers

▪ Study of noise and its impact on ECG-HRV

▪ Study of noise and its impact on non-ECG HRV

The technique for an acquired database (ECG and non-ECG HRV) and classifiers

Performance evaluation criteria and validation

Figure 1.3 Identification model for cardiac diseases [source: 24].

1.3.2.1 Classifiers and Performance Evaluation

The parameters used to evaluate the algorithm's performance are accuracy, precision, F-measure, recall, and execution time [18, 19]. Apart from execution time, these parameters are defined using four counts: True Positive (TP), True Negative (TN), False Positive (FP), and False Negative (FN) [20, 21].

1.3.2.1.1 Performance Metrics

Performance metrics quantify how well a given algorithm performs in terms of accuracy, precision, sensitivity, specificity, and other parameters. The different performance metrics are described below.

1.3.2.1.1.1 CONFUSION MATRIX

The confusion matrix summarizes the performance of a classification algorithm by showing where the classifier is "confused" when predicting. The rows indicate the actual class labels, while the columns indicate the predicted class labels. Table 1.1 shows a confusion matrix for binary classification. TP counts positive instances correctly predicted as positive; FP counts negative instances incorrectly predicted as positive; FN counts positive instances incorrectly predicted as negative; and TN counts negative instances correctly predicted as negative. The confusion matrix is used to calculate the different performance metrics.
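A minimal sketch of how the four counts of Table 1.1 turn into the metrics listed above, assuming label 1 marks the positive class as in Table 1.1. The formulas are the standard definitions of accuracy, precision, recall (sensitivity), specificity, and F1; the function names and the example labels are illustrative, and returning 0.0 for an empty denominator is an assumed convention.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (TP, FN, FP, TN) for a binary classification problem."""
    tp = fn = fp = tn = 0
    for t, p in zip(y_true, y_pred):
        if t == positive:
            if p == positive:
                tp += 1  # positive instance predicted positive
            else:
                fn += 1  # positive instance predicted negative
        elif p == positive:
            fp += 1      # negative instance predicted positive
        else:
            tn += 1      # negative instance predicted negative
    return tp, fn, fp, tn


def classification_metrics(tp, fn, fp, tn):
    """Standard metrics derived from confusion-matrix counts."""
    def ratio(num, den):
        return num / den if den else 0.0

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)  # also called sensitivity
    return {
        "accuracy": ratio(tp + tn, tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "specificity": ratio(tn, tn + fp),
        "f1": ratio(2 * precision * recall, precision + recall),
    }


# Made-up labels: TP = 3, FN = 1, FP = 2, TN = 2.
counts = confusion_counts([1, 1, 1, 0, 0, 0, 0, 1], [1, 0, 1, 0, 1, 1, 0, 1])
print(classification_metrics(*counts))
```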

1.3.2.1.2 Major Features Contributors With Analysis

The added mathematical features are an essential part of the total of 24 features in Tables 1.2A and B. Table 1.2 has been restructured to meet the research need to analyze both short-term and long-term data. The mathematical features Dalton DSD index, Dalton MABB index, and De Hann LTV index have been added and are significant for the analysis.

The HRV indices are also known as HRV parameters or HRV features. The feature acronyms and names are listed in Table 1.2. Time-domain, frequency-domain, linear, and some nonlinear indices are part of the research [22]. The indices are divided into four groups. The proposed model builds on a new set of index groups [23, 24]. The mathematical indices, namely, Dalton and De Hann, are included in the feature group (Group 4), as shown in Table 1.2B. The novelty of the feature groups is that they are formed according to the features' essential characteristics, to improve the model's performance. The mathematical equations express the dependency of the variables on the features [25].
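The frequency-domain indices aLF, aHF, and their ratio (Table 1.2(A)) can be sketched as follows. This is a minimal illustration under stated assumptions: the RR series is linearly interpolated to 4 Hz, the mean is removed, and a plain rectangular-window DFT periodogram is summed over the LF (0.04–0.15 Hz) and HF (0.15–0.4 Hz) bands. The chapter does not state which spectral estimator it uses; Welch's method or the Lomb-Scargle periodogram are common alternatives. The powers here are in arbitrary units, so only the ratio should be read, and the function name is hypothetical.

```python
import math


def lf_hf_ratio(rr_s, fs_resample=4.0):
    """Return (LF power, HF power, LF/HF) for RR intervals given in seconds.

    Powers come from an unwindowed DFT periodogram in arbitrary units.
    """
    if len(rr_s) < 3:
        raise ValueError("need at least three RR intervals")
    # Time (s) at the end of each beat; the tachogram value there is rr_s[j].
    times, t = [], 0.0
    for rr in rr_s:
        t += rr
        times.append(t)
    n = int((times[-1] - times[0]) * fs_resample)
    if n < 2:
        raise ValueError("RR series too short to resample")
    # Linear interpolation of the irregular tachogram onto a uniform grid.
    grid, j = [], 0
    for i in range(n):
        ti = times[0] + i / fs_resample
        while times[j + 1] < ti:
            j += 1
        frac = (ti - times[j]) / (times[j + 1] - times[j])
        grid.append(rr_s[j] + frac * (rr_s[j + 1] - rr_s[j]))
    mean = sum(grid) / n
    x = [v - mean for v in grid]  # remove the DC component
    df = fs_resample / n          # frequency spacing of the DFT bins
    lf = hf = 0.0
    for k in range(1, int(0.4 / df) + 1):
        f = k * df
        re = sum(v * math.cos(2 * math.pi * k * i / n) for i, v in enumerate(x))
        im = sum(v * math.sin(2 * math.pi * k * i / n) for i, v in enumerate(x))
        power = (re * re + im * im) / n
        if 0.04 <= f < 0.15:
            lf += power
        elif 0.15 <= f <= 0.4:
            hf += power
    return lf, hf, (lf / hf if hf > 0 else math.inf)
```

A synthetic RR series modulated at 0.25 Hz (a typical respiratory rate) yields a ratio well below 1, while modulation at 0.1 Hz yields a ratio well above 1. For long recordings an FFT-based estimator is far faster than this direct O(n²) DFT.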

Table 1.1 Confusion matrix.

| Actual label \ Predicted label | +(1) | −(0) |
|---|---|---|
| +(1) | True Positive | False Negative |
| −(0) | False Positive | True Negative |

Table 1.2 (A) New proposed HRV indices with groups.

| Sr. no. | Feature acronym | Feature name |
|---|---|---|
| Time domain (Group 1) | | |
| 1 | meanRR | Mean RR interval |
| 2 | SDNN | Standard deviation of NN intervals |
| 3 | Mean | Mean heart rate (HR) |
| 4 | sdHR | Standard deviation of the heart rate |
| 5 | NNx | Number of successive NN interval pairs that differ by more than “x” ms |
| 6 | HRVTi | Integral of the density of the RR interval histogram divided by its height |
| 7 | TINN | Baseline width of the RR interval histogram |
| 8 | pNNx | Percentage of successive RR intervals that differ by more than “x” ms |
| 9 | RMSSD | Root mean square of successive RR interval differences |
| Frequency domain (Group 2) | | |
| 10 | aHF | Area (power) within the high-frequency band (0.15–0.4 Hz) |
| 11 | aLF | Area (power) within the low-frequency band (0.04–0.15 Hz) |
| 12 | Ratio (aLF/aHF) | Ratio of LF to HF |
| Nonlinear (Group 3) | | |
| 13 | Ent | Sample entropy |
| 14 | Hval | Hurst exponent |
| 15 | avgpsdf | Average power spectral density |
| 16 | hfdf | Higuchi fractal dimension |
| 17 | D | Dimension factor of the time series |
| 18 | Alpha | Scaling exponent for alignment of series points |
| Other features (parameters/indices) (Group 4) | | |
| 19 | SD1 | Standard deviation of the distance of each Poincaré plot point from the line y = x |
| 20 | SD2 | Standard deviation of the distance of each Poincaré plot point from the line y = −x + 2·meanRR |
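As a rough illustration of how some Group 1 features and the Group 4 Poincaré descriptors can be computed from an RR-interval series, here is a minimal NumPy sketch. It is not the authors' implementation: the function names are hypothetical, the threshold x = 50 ms (giving NN50/pNN50) is an assumed default, sample standard deviations (ddof=1) are a choice, and HRVTi and TINN are omitted because they depend on histogram bin settings the text does not give. SD1 and SD2 are computed as the spread of the Poincaré points across and along the line of identity.

```python
import numpy as np


def time_domain_features(rr_ms, x_ms=50.0):
    """Selected Group 1 features from RR (NN) intervals given in milliseconds."""
    rr = np.asarray(rr_ms, dtype=float)
    diff = np.diff(rr)                      # successive RR differences
    hr = 60000.0 / rr                       # instantaneous heart rate in beats/min
    nnx = int(np.sum(np.abs(diff) > x_ms))  # successive pairs differing by more than x ms
    return {
        "meanRR": float(rr.mean()),
        "SDNN": float(rr.std(ddof=1)),
        "Mean": float(hr.mean()),           # mean heart rate
        "sdHR": float(hr.std(ddof=1)),
        "NNx": nnx,
        "pNNx": 100.0 * nnx / diff.size,
        "RMSSD": float(np.sqrt(np.mean(diff ** 2))),
    }


def poincare_sd1_sd2(rr_ms):
    """SD1/SD2: spread of Poincaré points (RR_n, RR_n+1) across and along the line y = x."""
    rr = np.asarray(rr_ms, dtype=float)
    x, y = rr[:-1], rr[1:]
    sd1 = float(np.std((y - x) / np.sqrt(2.0), ddof=1))  # perpendicular to y = x
    sd2 = float(np.std((y + x) / np.sqrt(2.0), ddof=1))  # along y = x
    return sd1, sd2


rr = np.array([800, 860, 800, 860, 800])  # toy RR series in ms
print(time_domain_features(rr))
print(poincare_sd1_sd2(rr))
```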

1.3.3 Proposed Model With Layer Representation

HRV analysis for cardiac diseases is complicated, so it is defined as a step-by-step process. This research develops a robust model in the form of a layered model. Quantifying each feature's contribution and impact is a significant contribution of the research and helps in classifying cardiac diseases. The model has three parts: a fixed set feature model (FSM), a flexi intra group selection model, and qualitative analysis, as shown in Figure 1.4. Support for both long-term and short-term analysis is a distinctive feature of the model. The model performs under all tested conditions, and the results are encouraging for future work. Figures 1.4 and 1.5 show a novel approach to identifying and predicting cardiac diseases through stages such as feature extraction, feature concatenation, and feature combination. The algorithm responds to HRV parameters (linear, nonlinear, time-domain, and frequency-domain). This work also includes mathematical parameters such as the Dalton indices and the Higuchi fractal dimension to improve the method's efficiency.

Table 1.2 (B) New proposed HRV indices with groups.

| Sr. no. | Feature acronym | Feature name |
|---|---|---|
| 21 | CD | Correlation dimension |
| 22 | Dalton DSD index | Standard deviation of the RR intervals over the full length of the HR signal (long-term variability index) |
| 23 | Dalton MABB index | One-half of the arithmetic mean of the absolute differences between successive RR intervals (short-term variability index) |
| 24 | De Hann LTV index | Interquartile range of the radius of each successive RR-interval pair (long-term variability index) |
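The three mathematical indices in Table 1.2(B) can be sketched directly from their descriptions. This is our reading, not the authors' code: the function names are hypothetical, the DSD uses the sample standard deviation (ddof=1) by choice, MABB is taken as half the mean absolute successive difference (a literal "absolute value of the mean signed difference" would reduce to the net drift of the record), and the De Hann radius is assumed to be the distance of each point (RR_i, RR_i+1) from the origin.

```python
import numpy as np


def dalton_dsd(rr_ms):
    """Long-term index (assumed reading): standard deviation of all RR intervals in the record."""
    return float(np.std(np.asarray(rr_ms, dtype=float), ddof=1))


def dalton_mabb(rr_ms):
    """Short-term index (assumed reading): half the mean absolute difference of successive RR intervals."""
    diff = np.diff(np.asarray(rr_ms, dtype=float))
    return float(0.5 * np.mean(np.abs(diff)))


def de_hann_ltv(rr_ms):
    """Long-term index (assumed reading): interquartile range of the radius of each (RR_i, RR_i+1) point."""
    rr = np.asarray(rr_ms, dtype=float)
    radius = np.hypot(rr[:-1], rr[1:])   # distance of each Poincaré point from the origin
    q25, q75 = np.percentile(radius, [25, 75])
    return float(q75 - q25)


rr = [800, 860, 800, 860, 780, 900]     # toy RR series in ms
print(dalton_dsd(rr), dalton_mabb(rr), de_hann_ltv(rr))
```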

Figure 1.4 Robust model layers.

Figure 1.5 HRV model for cardiac prediction.

i) Support Vector Machine (SVM)

a. SVM linear

b. SVM polynomial

c. SVM Gaussian

ii) Random Forest (RF)

a. With variation in the number of trees

iii) K-Nearest Neighbors (KNN)

iv) EAB
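The classifiers listed above are normally taken from an ML library; purely to show the idea behind one of them, here is a minimal NumPy sketch of KNN with Euclidean distance and majority voting. The choice k = 3, the function name, and the toy 2-D data are assumptions for illustration; in the HRV model each row would instead be an 18- or 24-feature vector, usually standardized first.

```python
import numpy as np


def knn_predict(x_train, y_train, x_test, k=3):
    """Predict labels by majority vote among the k nearest training vectors (Euclidean)."""
    x_train = np.asarray(x_train, dtype=float)
    y_train = np.asarray(y_train)
    x_test = np.atleast_2d(np.asarray(x_test, dtype=float))
    dists = np.linalg.norm(x_test[:, None, :] - x_train[None, :, :], axis=2)
    nearest = np.argsort(dists, axis=1)[:, :k]        # indices of the k closest training rows
    predictions = []
    for neighbour_labels in y_train[nearest]:
        labels, votes = np.unique(neighbour_labels, return_counts=True)
        predictions.append(labels[np.argmax(votes)])  # ties go to the smallest label
    return np.array(predictions)


x_train = [[0, 0], [0, 1], [1, 0], [5, 5], [5, 6], [6, 5]]
y_train = [0, 0, 0, 1, 1, 1]                          # toy labels: 0 = normal, 1 = cardiac
print(knn_predict(x_train, y_train, [[0.5, 0.5], [5.5, 5.5]]))  # [0 1]
```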

The research aims to enhance HRV analysis to identify and predict cardiac diseases using ML algorithms. The model's performance depends on the quality of the input data and of the features used to predict cardiac diseases. The research presents a model that can be customized to the data size and the type of input signal. The model was tested under all possible subject conditions and with databases such as raw and non-ECG signals. The research reviews current perspectives on predicting cardiac diseases from 24 h, short-term (~1 min), and long-term (>1 min) HRV. It highlights the importance of HRV and its implications for health and performance. The investigation provides insight into widely used HRV time-domain, frequency-domain, and nonlinear metrics, along with mathematical indices, and presents this information using graphs. The research goal is to show the classifiers' effectiveness and ease of use in predicting heart diseases. The research provides HRV assessment strategies for clinical and optimal-performance interventions. The variation in heart rate over a short term (5 min) is extracted and analyzed in the time and frequency domains to assess the balance and activity of the autonomic nervous system. The proposed algorithm meets the standards of measurement, physiological interpretation, and biosignal processing. One branch of the development flow uses feature concatenation on a fixed set of 18 features grouped into the time, frequency, and linear-nonlinear domains. The other branch is more adaptable, using feature concatenations and combinations of the 18 features and extending them to 24 features. The ML model has largely overcome the challenges mentioned by other researchers.
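The short-term frequency-domain analysis mentioned above (aLF, aHF, and their ratio in Table 1.2(A)) can be sketched with NumPy alone. This is one common approach, not necessarily the authors': the RR series is resampled onto an even 4 Hz grid by linear interpolation (the 4 Hz rate is an assumption), a plain one-sided periodogram is used instead of Welch or autoregressive estimation, and band powers are summed over the LF (0.04–0.15 Hz) and HF (0.15–0.4 Hz) bands. The `synthetic_rr` helper is hypothetical test data, not a physiological model.

```python
import numpy as np

LF_BAND = (0.04, 0.15)  # Hz, low-frequency band from Table 1.2(A)
HF_BAND = (0.15, 0.40)  # Hz, high-frequency band from Table 1.2(A)


def lf_hf(rr_ms, fs=4.0):
    """Return (aLF, aHF, aLF/aHF) from RR intervals in ms using a plain periodogram."""
    rr = np.asarray(rr_ms, dtype=float)
    beat_times = np.cumsum(rr) / 1000.0                      # beat times in seconds
    grid = np.arange(beat_times[0], beat_times[-1], 1.0 / fs)
    tachogram = np.interp(grid, beat_times, rr)              # evenly resampled RR series
    tachogram -= tachogram.mean()                            # remove the DC component
    # One-sided periodogram in ms^2/Hz
    power = 2.0 * np.abs(np.fft.rfft(tachogram)) ** 2 / (fs * tachogram.size)
    freqs = np.fft.rfftfreq(tachogram.size, d=1.0 / fs)
    df = freqs[1] - freqs[0]

    def band_power(lo, hi):
        mask = (freqs >= lo) & (freqs < hi)
        return float(power[mask].sum() * df)                 # area under the spectrum in the band

    a_lf = band_power(*LF_BAND)
    a_hf = band_power(*HF_BAND)
    return a_lf, a_hf, a_lf / a_hf


def synthetic_rr(freq_hz, seconds=300.0):
    """Hypothetical test signal: RR of about 1000 ms modulated by a sine at freq_hz."""
    t, rr = 0.0, []
    while t < seconds:
        interval = 1000.0 + 50.0 * np.sin(2 * np.pi * freq_hz * t)
        rr.append(interval)
        t += interval / 1000.0
    return np.array(rr)


print(lf_hf(synthetic_rr(0.25))[2])  # HF-dominated: ratio expected below 1
print(lf_hf(synthetic_rr(0.10))[2])  # LF-dominated: ratio expected above 1
```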

1.3.4 The Model Using Fixed Set of Features and Standard Dataset

The HRV model using a fixed set of features is the first HRV model developed for prediction. The fixed set comprises time-domain (nine features), frequency-domain (three features), and linear-nonlinear (six features) groups. The model needs to work on a specific domain or on a combination of groups such as time-frequency, time-nonlinear, and frequency-nonlinear. After an ML algorithm was applied to each domain and combination (group), the full set of 18 features [Time-Frequency Linear-Nonlinear (TFLN)] performed best in this analysis, and its results were compared with those of the other groups. Although all four types of data, including the external dataset, were tested, the following section presents the model's analysis with standard datasets and classifiers. The model's performance is evaluated with specific metrics using a set of features. The model identifies the input signal and predicts cardiac diseases using ML. The prediction model uses 18 features and performed well on the performance evaluation parameters.
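The grouping into domains and their combinations can be enumerated mechanically. The sketch below uses the group sizes of Table 1.2(A) (nine time-domain, three frequency-domain, and six nonlinear features) and lists every combination with its feature count; the full combination gives the 18-feature TFLN set. The dictionary and function names are illustrative only.

```python
from itertools import combinations

# Group sizes from Table 1.2(A): 9 time-domain, 3 frequency-domain, 6 nonlinear features
GROUPS = {"Time": 9, "Frequency": 3, "Nonlinear": 6}


def group_combinations(groups):
    """Yield (combination name, total feature count) for every non-empty group combination."""
    names = list(groups)
    for size in range(1, len(names) + 1):
        for combo in combinations(names, size):
            yield "-".join(combo), sum(groups[name] for name in combo)


for name, n_features in group_combinations(GROUPS):
    print(f"{name}: {n_features} features")
```

Running it prints seven lines, ending with `Time-Frequency-Nonlinear: 18 features`.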

1.3.4.1 Performance of Classifiers With Feature Selection

Table 1.3 shows the performance of the classifiers for a given input, i.e., standard data, in terms of accuracy, sensitivity, and specificity, the most crucial performance evaluation measures. The same results are shown graphically in Figure 1.6. The proposed model accurately distinguishes CHF from normal recordings: with RF, the accuracy, sensitivity, and specificity are 99.78%, 100%, and 100%, respectively. On CHF, the accuracies of KNN and RF are comparable and close to 100%. RF accuracy is also high on the SDDB, ARRTHY, and VENT_ARRTHY datasets, while the performance of KNN, EAB, and SVM is generally lower than that of RF. On CHF, the false negative rate (FNR) is 20% for EAB against 0% for the other classifiers; FNR is one indicator of classifier performance, along with the true positive rate (TPR), true negative rate (TNR), and false positive rate (FPR).
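Accuracy, sensitivity, specificity, and the error rates named above all follow from the four confusion-matrix counts. In this sketch the function name is hypothetical and so are the counts, since the chapter does not report them; TP = 4, FN = 1, FP = 0, TN = 8 were chosen only because they give 92.31% accuracy, 80% sensitivity, 100% specificity, and a 20% FNR, the same values as the EAB row for CHF in Table 1.3.

```python
def basic_rates(tp, fn, fp, tn):
    """Accuracy, TPR, TNR, FNR, and FPR in percent from confusion-matrix counts."""
    return {
        "accuracy": 100.0 * (tp + tn) / (tp + fn + fp + tn),
        "sensitivity": 100.0 * tp / (tp + fn),  # true positive rate (TPR)
        "specificity": 100.0 * tn / (tn + fp),  # true negative rate (TNR)
        "FNR": 100.0 * fn / (tp + fn),          # false negative rate = 100 - TPR
        "FPR": 100.0 * fp / (tn + fp),          # false positive rate = 100 - TNR
    }


rates = basic_rates(tp=4, fn=1, fp=0, tn=8)    # hypothetical counts
print({name: round(value, 2) for name, value in rates.items()})
```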

Table 1.3 Performance measurement on a standard dataset.

| Classifier | Database (standard) | Accuracy (%) | Sensitivity (%) | Specificity (%) |
|---|---|---|---|---|
| RF | CHF (A) | 99.78 | 100 | 100 |
| | ARRTHY (B) | 99.88 | 100 | 100 |
| | SDDB (C) | 99.77 | 100 | 100 |
| | VENT_ARRTHY (D) | 95 | 97.01 | 97.74 |
| EAB | CHF (A) | 92.31 | 80 | 100 |
| | ARRTHY (B) | 97.48 | 100 | 100 |
| | SDDB (C) | 95.24 | 100 | 87.5 |
| | VENT_ARRTHY (D) | 97 | 97.67 | 98.18 |
| SVM | CHF (A) | 92.31 | 100 | 87.5 |
| | ARRTHY (B) | 95.65 | 100 | 75 |
| | SDDB (C) | 95.24 | 100 | 87.5 |
| | VENT_ARRTHY (D) | 86 | 88.02 | 91.52 |
| KNN | CHF (A) | 99.79 | 100 | 100 |
| | ARRTHY (B) | 97.83 | 100 | 87.5 |
| | SDDB (C) | 66.67 | 46.15 | 100 |
| | VENT_ARRTHY (D) | 56 | 66.41 | 77.07 |

Figure 1.6 Performance evaluation of model on standard database.

1.4 Performance Metrics and Evaluation of Classifiers

The F-score, also known as the F-measure, is a measure of a test's accuracy. Precision, recall, and the F-score are shown together in Figure 1.6 and Table 1.4.

Precision is the number of correctly identified positive results divided by the number of all results predicted as positive, including those not correctly identified, i.e., TP/(TP + FP). Recall is the number of correctly detected positive findings divided by the number of actual positive samples, i.e., TP/(TP + FN). For the TFLN feature set, the F1-score is higher than for time-domain features alone. Cohen's kappa statistic is a good metric that handles both multi-class and imbalanced-class problems; KNN registered a kappa of 100%, the highest among the classifiers. Cohen's kappa measures the agreement between predicted and actual labels beyond what would be expected by chance. The Matthews correlation coefficient (MCC), or phi coefficient, measures the quality of binary (two-class) classifications in ML; it is 100% for KNN and 84.3% for EAB. The area under the receiver operating characteristic curve (AUROC) is another common measure of predictive performance. For these metrics, the higher the value, the better the model's performance. The threat score (TS), also called the critical success index (CSI), is a verification metric for categorical predictions equal to the number of correctly predicted events divided by the total number of events that were predicted or observed, i.e., TP/(TP + FN + FP). At 80%, the CSI of EAB is the lowest. The evaluation measures show that the model responds to changes in the input signal and can therefore be used for both short- and long-term analysis. The rising availability of personal computers and computing power has contributed significantly to the increase in HRV analyses.
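The remaining metrics discussed here can also be written in terms of the confusion-matrix counts. The sketch below is a plain-Python illustration using the standard definitions; the function name and the example counts are hypothetical, and zero denominators are not guarded. With TP = 4, FN = 1, FP = 0, TN = 8, it returns values that round to the EAB precision, negative predictive value, recall, CSI, MCC, and Cohen's kappa entries of Table 1.4, although the chapter does not state the underlying counts.

```python
import math


def derived_metrics(tp, fn, fp, tn):
    """Precision, NPV, recall, F1, CSI, MCC, and Cohen's kappa as fractions (x100 for percent)."""
    n = tp + fn + fp + tn
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    npv = tn / (tn + fn)
    f1 = 2 * precision * recall / (precision + recall)
    csi = tp / (tp + fn + fp)  # threat score / critical success index
    mcc = (tp * tn - fp * fn) / math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    p_observed = (tp + tn) / n
    # Chance agreement: product of predicted and actual marginals, summed over both classes
    p_chance = ((tp + fp) * (tp + fn) + (fn + tn) * (fp + tn)) / n ** 2
    kappa = (p_observed - p_chance) / (1 - p_chance)
    return {"precision": precision, "NPV": npv, "recall": recall, "F1": f1,
            "CSI": csi, "MCC": mcc, "kappa": kappa}


metrics = derived_metrics(tp=4, fn=1, fp=0, tn=8)  # hypothetical counts
print({name: round(100 * value, 1) for name, value in metrics.items()})
```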

1.4.1 Cardiac Disease Prediction Through Flexi Intra Group Selection Model

The HRV feature-based technique is a novel approach that achieves a high degree of precision and accuracy. Because features can now be combined across domain boundaries, the model is named the Flexi Inter-Intra Group Selection Model (ISM); it is also referred to here as the Flexi Group Selection Model or Flexi Group Model. The feature concatenation and combination stages make both qualitative and quantitative assessment possible. The main highlights of this modified HRV model are intra- and inter-group index set selection, feature extraction, and compatibility with classifiers. After de-noising, feature extraction, and dimensionality reduction, the raw data parameters process the input signal according to the information it carries. The evaluation is performed on the classifier output and parameters and validated with the pre-processed input signals. The evaluation parameters are essential for identifying redundancies and the conversion factor. The ISM works very well with ECG-based or non-ECG-based HRV analysis for identifying and predicting cardiac diseases. The HRV model is developed for ECG or non-ECG input, followed by feature selection and feature concatenation within the 24 features. The ML approach ensures that the system is adequately trained on the specified samples and then tested. Figure 1.7 shows the ISM structure with 18 and 24 features. The 24-feature model is a modified and improved model that boosts classifier performance. Testing shows that the model performs better with RF and SVM. ML is important because of its remarkable ability to adapt and to solve complex problems effectively and quickly.

Table 1.4 HRV model and classifier performance.

Database (Standard), HRV model with fixed set features, by classifier:

| Metric | RF | EAB | SVM | KNN |
|---|---|---|---|---|
| Error rate | 1 | 6.77 | 5.69 | 1 |
| Precision | 83.3 | 100 | 83.3 | 100 |
| Negative predictive value | 100 | 88.9 | 100 | 100 |
| Recall | 100 | 80 | 100 | 100 |
| F-score | 86.2 | 95.2 | 86.2 | 100 |
| Critical success index | 83.3 | 80 | 83.3 | 100 |
| MCC | 85.4 | 84.3 | 85.4 | 100 |
| Cohen’s kappa | 84.3 | 83.1 | 84.3 | 100 |

Figure 1.7 ISM HRV model.

1.4.2 HRV Model With Flexi Set of Features

This model uses the same 18 features, with selection and extraction now performed within the 18-feature group. Here, the flexi feature set model (ISM) works well for data combinations of ECG and non-ECG signals. Like the FSM, this model works with 18 features, but it also allows feature combinations and concatenations. The data size is an important consideration, along with whether the training data are short or long. The FSM accuracy decreases on external input data; the FSM works well for data with a fixed sampling frequency. Table 1.5 shows the performance of the Flexi Set Feature model with 18 features. Assessing the results of each classifier is important for making the model compatible with varied input conditions and therefore robust. Figure 1.8 shows all the important classifiers considered for study and experimentation.

The qualitative analysis of the 18-feature flexi set model is as follows. The classifier outcomes of the 18-feature flexi approach are:

a. EAB and KNN accuracy is almost the same as that of the FSM.

b. SVM accuracy is 85.90%, and RF accuracy increases to 94.87%.

These results are for the expanded dataset with samples of varied length.

Table 1.5 Accuracy comparison with fixed set feature model.

| Accuracy (%) | EAB | KNN | SVM | RF |
|---|---|---|---|---|
| FSM | 61.54 | 85.55 | 84.77 | 89.89 |
| ISM (18) | 61.54 | 85.41 | 85.90 | 94.87 |

Figure 1.8 Classifier performances on flexi set features (ISM-18).

For RF-42, the sensitivity and F1-score are 91.27% and 89.12%, respectively.

The accuracies of RF-35, RF-42, and RF-50 are 93.59%, 94.87%, and 93.59%, respectively.

In Figure 1.8, the two-period moving average of accuracy suggests a smooth transition from one point to the next, especially for RF. The promising outcome of ISM-18 led to an improved version of the model. The ISM-18 model supports feature concatenation and combination, with limited success.
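A two-period moving average, as used for the trend line in Figure 1.8, simply averages each pair of neighboring points. Applied to the RF-35, RF-42, and RF-50 accuracies quoted above, the NumPy sketch below gives 94.23% for both pairs; the function name is hypothetical.

```python
import numpy as np


def moving_average(values, window=2):
    """Average each run of `window` consecutive points (a simple moving average)."""
    kernel = np.ones(window) / window
    return np.convolve(np.asarray(values, dtype=float), kernel, mode="valid")


rf_accuracy = [93.59, 94.87, 93.59]   # RF-35, RF-42, RF-50 accuracies (%) quoted in the text
print(moving_average(rf_accuracy))    # approximately [94.23 94.23]
```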

1.4.3 Performance of the Proposed Modified Model With ISM-24

The aim is to assess the impact of ML methods in developing a model that classifies normal and cardiac-failure cases from long-term ECG time series. The robust HRV model comprises a combination generator model, a parameter array, feature selection and computation, and feature segregation in the top and bottom units. The HRV ISM model makes qualitative assessment possible. RF is one of the best classification algorithms and can classify large datasets precisely. Figure 1.6 relates the learning method, i.e., the number of decision trees, to the training time and the performance of the model. In an RF, each tree depends on a random vector sampled independently and with the same distribution for all trees. Table 1.6 compares the traditional methods and ML models with the proposed ISM-24.

Building an ML model is advisable whenever a larger number of training samples is available. From Table 1.7, the proposed ISM outperforms the other traditional and ML techniques. Compared with the conventional classification algorithms, ISM-24 captures deeper features and produces a highly accurate classification using RF-45.

Table 1.6 Comparison of proposed methods with traditional methods with RF.

Table 1.7 Training and testing set combinations vs. evaluation parameters.

| Training-testing split | Accuracy | Precision | Recall | F-score |
|---|---|---|---|---|
| 30%–70% | 83.24 | 92.12 | 82.02 | 87.16 |
| 50%–50% | 92.30 | 92.66 | 84.80 | 88.41 |
| 70%–30% | 96.79 | 93.45 | 96.15 | 90.5 |
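Table 1.7 varies the share of data used for training. A simple way to produce such splits is to shuffle the sample indices once and cut them at the training fraction, as in this NumPy sketch. The function name, sample count, and fixed seed are assumptions, and the split is purely random; the chapter does not say whether its splits were stratified by class.

```python
import numpy as np


def split_indices(n_samples, train_fraction, seed=0):
    """Shuffle sample indices once and cut them into training and testing parts."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_samples)
    n_train = int(round(train_fraction * n_samples))
    return order[:n_train], order[n_train:]


for train_fraction in (0.3, 0.5, 0.7):   # the three splits in Table 1.7
    train_idx, test_idx = split_indices(200, train_fraction)
    print(f"train {train_fraction:.0%}: {train_idx.size} samples, test: {test_idx.size} samples")
```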

The ISM-18 performs well on the combined database, and the classification accuracy increases significantly compared with the existing traditional algorithm. The highest accuracy of the proposed method on the online dataset is 96.79%, an improvement of around 2% over the ISM-18 model (94.87%). Using more features yields high accuracy with RF-45, but more features do not guarantee better results unless the impact of each feature is known. Traditional HRV-based analyses of cardiac diseases have used features such as RMSSD, SDNN, LF, and HF, together with the discrete wavelet transform (DWT). It is extremely important to examine the impact of the time parameters within each domain, particularly for biosignals. The proposed methods and their qualitative analysis provide a mechanism for identifying each dominant feature.
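One way to learn each feature's impact, as called for above, is permutation importance: shuffle one feature column and measure how much accuracy drops. This technique is named here as an illustration and is not stated to be the authors' method. To stay self-contained, the sketch uses a simple nearest-centroid classifier in place of RF, evaluates on the training data, and uses synthetic data in which only the first feature carries class information; all names are hypothetical.

```python
import numpy as np


def nearest_centroid_fit(x, y):
    """Store one mean feature vector (centroid) per class."""
    classes = np.unique(y)
    centroids = np.array([x[y == c].mean(axis=0) for c in classes])
    return classes, centroids


def nearest_centroid_predict(model, x):
    """Assign each row to the class whose centroid is closest (Euclidean)."""
    classes, centroids = model
    d = np.linalg.norm(x[:, None, :] - centroids[None, :, :], axis=2)
    return classes[np.argmin(d, axis=1)]


def permutation_importance(model, x, y, seed=0):
    """Accuracy drop when each feature column is shuffled independently."""
    rng = np.random.default_rng(seed)
    baseline = np.mean(nearest_centroid_predict(model, x) == y)
    drops = []
    for j in range(x.shape[1]):
        x_perm = x.copy()
        x_perm[:, j] = rng.permutation(x_perm[:, j])  # break the link between feature j and the label
        drops.append(baseline - np.mean(nearest_centroid_predict(model, x_perm) == y))
    return np.array(drops)


rng = np.random.default_rng(1)
y = np.repeat([0, 1], 100)                            # synthetic labels
x = np.column_stack([y * 4.0 + rng.normal(0, 0.5, 200),  # informative feature
                     rng.normal(0, 1, 200)])              # pure noise feature
print(permutation_importance(nearest_centroid_fit(x, y), x, y))
```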

1.5 Discussion and Conclusion

Training the system with a good dataset is key to achieving high classification efficiency. Therefore, in this analysis, after training, the classifier was tested with all combinations of features. ANOVA was used only once, to test the suitability of the inputs and correlation factors. With time-domain, frequency-domain, and nonlinear features, the proposed classification approach was tested with the fixed set, and its accuracy was found to be much lower than that of the intra-group selection methods. To select the best features, extraction was evaluated beforehand and run multiple times to test robustness, based on computational time and the highest precision metrics. The model's versatility in intra-group selection and its adaptability to such varied and complex data are among its unique features, as shown by the evaluation parameters. These results show that a classifier system trained on a good dataset gives higher performance. One of the major advantages of the proposed approach is that combining feature sets with standard ML achieves high classification efficiency. A limitation of the proposed method is that training takes time because of the number of complex data inputs. Although ML has many advantages, it is not flawless; the following factors limit its capability:
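The ANOVA check mentioned above can be illustrated with the one-way ANOVA F statistic, the ratio of between-group to within-group mean squares. In this minimal NumPy sketch (function name hypothetical), each group would hold one feature's values for one class, for example normal and CHF recordings; converting F into a p-value needs the F distribution, which `scipy.stats.f_oneway` provides.

```python
import numpy as np


def one_way_anova_f(*groups):
    """One-way ANOVA F statistic: between-group mean square / within-group mean square."""
    groups = [np.asarray(g, dtype=float) for g in groups]
    all_values = np.concatenate(groups)
    grand_mean = all_values.mean()
    k, n = len(groups), all_values.size
    ss_between = sum(g.size * (g.mean() - grand_mean) ** 2 for g in groups)
    ss_within = sum(((g - g.mean()) ** 2).sum() for g in groups)
    ms_between = ss_between / (k - 1)   # k - 1 degrees of freedom
    ms_within = ss_within / (n - k)     # n - k degrees of freedom
    return ms_between / ms_within


print(one_way_anova_f([1, 2, 3], [4, 5, 6]))  # 13.5
```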

a. ML algorithms have not been proven effective in predicting all scenarios.

b. Correctly interpreting the outputs of ML algorithms is another problem.

c. ML algorithms are highly sensitive to errors.