- Publisher: John Wiley & Sons

- Category: Humanities

- Language: English

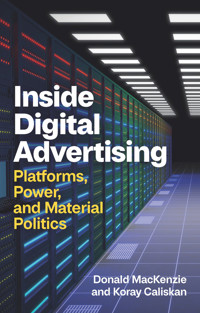

Dozens of times daily, access to your screen is auctioned to advertisers, sometimes by your own phone or laptop without your knowledge. In the background are huge, electricity-hungry, carbon-emitting systems that conduct roughly two trillion near-instantaneous, automated auctions every day.

This book takes you into the heart of this mysterious world. It describes how Google built its astonishing global system of warehouse-scale computing and turned that system into an unprecedented, multibillion-dollar, money-earning machine, and how Facebook – almost by accident – also became an advertising leviathan. It examines the tensions between those giants and the smaller firms that populate digital advertising’s open marketplace. Those tensions, as well as conflicts over user privacy, give rise to a new kind of politics that plays out in material systems in the form of crucial clashes between different ways of designing those systems. Building on work in the emerging interdisciplinary field of market studies, MacKenzie and Caliskan examine digital advertising’s material politics, its giant megamachines, and the foundations of platform power.

Inside Digital Advertising lays bare the processes that underpin today’s global advertising industry. It will be a key book for students and academics in the social sciences, humanities, and business studies, and it will appeal to anyone interested in the forces that are shaping our everyday digital world.

Page count: 388

Year of publication: 2025

CONTENTS

Cover

Table of Contents

Dedication

Title Page

Copyright

Acknowledgments

1 Introduction

Our approach

Megamachines and material political economy

Platforms and platform power

Data sources

The literature on digital advertising

Notes

2 Display Ads, Cookies, and the Open Marketplace

The Web, display ads, and the dot-com bubble

Cookies

A market within a machine

The open marketplace

The different world of apps

Notes

3 Money Machines and the Characteristics of Digital Advertising

Building a megamachine

Money machine

The crucial feedback loop

The complexities of Google

Five characteristics of digital advertising

Notes

4 Hacking the System

“A single and complete advertising system”

The rise of header bidding

Conflicts over header bidding

Entangled with the law

The environmental politics of automated auctions

Notes

5 Enfolding Your Phone

Serious games

Optimizing advertising

Is it the same phone?

Redesigning the megamachine

Subterranean material politics

Identity politics

Notes

6 Digital Advertising’s Tensions

Intermediaries and mediators

Tensions in the open marketplace

Walled gardens

“Machines need room to work”: de-agencing and de-individualization

Notes

7 Conclusion

What we’ve covered in the previous chapters

Other areas of digital advertising

Reforming advertising, and what practitioners can and can’t do

Platform power

Infrastructural power

Constraining platform power

Redesigning megamachines

Notes

References

Index

End User License Agreement

Dedication

For Caroline and Zeynep

Inside Digital Advertising

Platforms, Power, and Material Politics

Donald MacKenzie and Koray Caliskan

polity

Copyright © Donald MacKenzie and Koray Caliskan 2026

The right of Donald MacKenzie and Koray Caliskan to be identified as Authors of this Work has been asserted in accordance with the UK Copyright, Designs and Patents Act 1988.

First published in 2026 by Polity Press Ltd.

Polity Press Ltd., 65 Bridge Street, Cambridge CB2 1UR, UK

Polity Press Ltd., 111 River Street, Hoboken, NJ 07030, USA

The open access version of this book is licensed under the Creative Commons Attribution 4.0 International licence (CC BY 4.0). This licence permits others to share and adapt the work for any purpose, provided appropriate credit is given, a link to the licence is provided, and any changes made are indicated. To view a copy of this licence, visit https://creativecommons.org/licenses/by/4.0/.

Except where otherwise noted, all material in this book is © Donald MacKenzie and Koray Caliskan 2026 and is made available under the CC BY 4.0 licence. Every effort has been made to identify and clear rights for third-party content. Such material is not necessarily covered by the CC BY 4.0 licence. Where a separate credit line indicates a different licence or rights holder, that material may only be reused under the terms specified there.

ISBN-13: 978-1-5095-6865-9

A catalogue record for this book is available from the British Library.

Library of Congress Control Number: 2025936229

The publisher has used its best endeavours to ensure that the URLs for external websites referred to in this book are correct and active at the time of going to press. However, the publisher has no responsibility for the websites and can make no guarantee that a site will remain live or that the content is or will remain appropriate.

Every effort has been made to trace all copyright holders, but if any have been overlooked the publisher will be pleased to include any necessary credits in any subsequent reprint or edition.

For further information on Polity, visit our website: politybooks.com

Acknowledgments

We are enormously grateful to Frances Burgess, Addie McGowan, and Charlotte Rommerskirchen, the other members of our Edinburgh University/The New School team researching digital advertising. Frances managed the entire project, including its finances, organized research trips, maintained a project bibliography, and word-processed successive drafts of both this book and the papers we are drawing on. Addie, a digital-advertising practitioner as well as an impressive academic, gave us vital insights and has influenced this book in multiple ways. Charlotte has led our work on the regulation of advertising, and her influence is also strong on what we say about that here. Although not a formal team member, Neil Marchant has made an invaluable contribution, transcribing our many interviews (some conducted in noisy cafes and restaurants) with remarkable accuracy and in a very timely way.

We are also very grateful to John Thompson at Polity Press for welcoming this book so warmly, and to two anonymous referees for helpful suggestions for revisions. The Columbia University Sociology Department’s workshop, Science, Knowledge, and Technology, generously hosted three seminars on our research in 2023–5. Feedback from those seminars and the other audiences to whom we have presented this work has made a major contribution to our evolving thinking. Donald particularly thanks Jean Czerlinski Whitmore Ortega, who has been an invaluable guide, both to technologies central to digital advertising and intellectual issues that the field raises.

We owe a huge debt of gratitude to our interviewees from within digital advertising without whom we couldn’t have written this book. They took time out from their busy lives to introduce us to the field’s practicalities, systems, and conflicts. We also thank the organizers of sector meetings who allowed us to attend, often free of charge or at a reduced rate. The funding for our research came primarily from a research grant from the UK Economic and Social Research Council (ES/V015362/1), with initial exploratory interviews funded by an earlier ESRC project (ES/R003173/1). The book draws on three existing articles: MacKenzie, Caliskan, and Rommerskirchen (2023), McGowan, MacKenzie, and Caliskan (2024), and MacKenzie (2024). We are grateful to our co-authors and the copyright holders for allowing us to reuse this material here. And we would also like to thank the many existing researchers on advertising (whose work we discuss in chapter 1) for welcoming us into their community.

Needless to say, any errors are our responsibility. We have learned an enormous amount from those to whom we have spoken, but we apologize if we have got anything wrong. We hope, though, that our informants in digital advertising, generous as they were with their time and input, will judge this book to be an insightful, sometimes critical, but, above all, fair account of the world in which they spend their working lives.

1 Introduction

Enter a query into a search engine. Visit a news organization’s website, or another site that has to make money from its visitors. Or go to your favorite social media platform. In the fraction of a second before what you’re looking for appears, a great deal happens behind the scenes. Your single action can trigger at least one, and sometimes more than a hundred, near-instantaneous automated auctions of the opportunity to show an advert on your screen. Globally, the process is repeated almost unimaginably often: over 100 billion digital ads are shown daily, and as many as two trillion ad auctions take place.1

This book is about what goes on behind the scenes, on this gigantic scale, in those crucial fractions of a second. In the words of three campaigners against political manipulation, the digital world is often “like a one-way mirror.” Those behind the mirror “can see the public, but the public cannot see them,” and so “don’t know who is trying to influence them” (Ravel, Woolley, and Sridharan 2019: 6).2 Digital advertising’s systems use a variety of tools to help them “see the public.” Cookies, which we will discuss in chapter 2, are the best-known example – you have probably “consented” to them several times already today – but we still encounter digitally savvy people who don’t know that their mobile phone has a unique identifier number. If advertising technology systems can access it, they can build a picture of your activity across apps, helping them, for example, to decide how much to bid for the opportunity to show you an ad. Android phones’ identifier numbers can generally be accessed, but Apple has blocked default access to iPhones’ identifiers, sparking controversy and disruption within digital advertising that we focus on in chapter 5.

The risk to privacy in using cookies, identifier numbers, or other technologies to “see” users is only one of the crucial issues involved in digital advertising. The winning bid in any one ad auction – and thus the revenue from showing a single ad – is normally tiny, often just a fraction of a cent, but multiplied by the trillions of ads shown annually it accumulates.3 Aggregate revenue from digital advertising globally totaled around US$612 billion in 2023, and it is rising (Lebow 2024). That income stream funds the everyday digital world, sometimes in part, sometimes completely. Without it, Google Search, which you probably use many times daily, would not exist, nor would Google Maps or your favorite social media platform – or, at least, you would have to pay for those services. Many of the news and other websites you visit, games you play, or apps you use could not survive without advertising income.

Another issue is that the trillions of daily automated ad auctions aren’t abstract economic operations: they are material computations. Some happen on your own phone or laptop, almost always without you knowing. Others happen in datacenters: huge, normally windowless, warehouses, each housing tens of thousands or more computers. Wherever it happens, an ad auction consumes electricity, as does the transmission of an ad to your device and the rendering of it on your screen. Some of that electricity may be from renewable sources, but much of it involves carbon emissions.

On average, showing just one digital ad to one user involves emitting a little puff of carbon dioxide sufficiently big that if it were cigarette smoke you would be able to see it: it’s somewhere between a tenth and a whole pint of carbon dioxide (MacKenzie 2023).4 And there are over 100 billion – perhaps as many as 400 billion (Kotila 2021) – such puffs every day. Digital advertising is responsible for, very roughly, around a tenth of information and communication technology’s global emissions (Pärssinen et al. 2018).

So what goes on behind the one-way mirror matters. We’ll begin our account of it in chapter 2 with digital advertising’s original, still very important, main form: “display ads,” in other words ads shown to you simply because you are visiting a webpage or using an app, without you necessarily having demonstrated any interest in a purchase. Display ads now account for around 30% of revenues from digital advertising (IAB 2022), and originally were the preserve of what participants often call digital advertising’s “open marketplace,” inhabited by a multiplicity of generally small or medium-sized firms.

Then, in chapter 3, we’ll move to the two global leviathans of digital advertising: Google and Facebook, now renamed Meta Platforms Inc. Meta’s platforms show display ads, but the mechanism by which they are sold is utterly different from the traditional open marketplace. Google was the crucial exponent of another of the most important forms of digital advertising: search advertising, the ads that appear when you search for something of commercial interest. This quickly became a bigger money earner than display ads, and now accounts for a little over 40% of digital-advertising revenues (IAB 2022). The field’s remaining sectors, which we will not discuss in any detail, include advertising on connected television/video-streaming services (just over 20% of total revenues), and a number of smaller sectors such as digital audio and the digital equivalent of classified ads (in aggregate around 8% of revenues).

Digital advertising is economically extremely concentrated. Alphabet, which is Google’s parent company, received around 39% of all digital advertising revenues globally in 2023, and Meta about 22%.5 Between them, therefore, the two leviathans of digital advertising have a market share of around 60%, but their position is not always as impregnable as that figure might suggest. In chapter 4, we will examine a major challenge to Google and, in chapter 5, a very different challenge to Facebook/Meta. Chapter 6 discusses how advertising’s human practitioners navigate the complexities and perils of their world, and chapter 7 draws out the book’s lessons.

Our approach

When you first take a glimpse behind the one-way mirror, one aspect of what you see doesn’t surprise you too much. Digital advertising is a market or, rather, a set of markets. There are providers of a “supply” of advertising opportunities: the big platforms such as Google, YouTube, Facebook, Instagram, TikTok, and Amazon; and smaller publishers such as news organizations, owners of other websites that make money from advertising, games developers, and connected television/streaming services such as Netflix. And there is “demand” for those advertising opportunities from advertisers, both big and small, and from the advertising agencies that work on their behalf. The distinction between supply and demand is built into the field’s terminology. Publishers often sell advertising opportunities via what are called “supply-side platforms,” or SSPs, and advertisers almost always delegate to “demand-side platforms,” or DSPs, the technologically very demanding task of bidding in the trillions of auctions that SSPs run.

When, however, you start to look closely, more surprising things come into view. For example, what’s bought and sold are quite often only apparent advertising opportunities: for instance, ads that can’t be seen by human eyes, perhaps because they are “shown” in a browser tab other than the one that’s open on the user’s screen, or where the “viewer” is a computerized “bot” simulating a human audience. And demand and supply are often far less distinct than you might imagine. For instance, the advertisers or advertising agencies that constitute the “demand” for advertising often don’t themselves decide whether to bid in Google’s or Meta’s auctions, and if so how much to bid: they leave these decisions to those platforms, in other words to “supply.”

To understand digital advertising, we therefore need an approach that goes beyond simple, standard economics. Our work is a contribution to the burgeoning interdisciplinary field of “market studies,” succinctly described in a recent introduction to it as “an effort to understand and unscramble the entangled knot of practices, agents, devices and infrastructures that constitute markets” (Geiger et al. 2024: 1). More specifically, we work at the intersection of economic sociology and science and technology studies (STS), two academic fields that have interacted productively since the late 1990s. Crucial in bringing them together was the French STS scholar and economic sociologist Michel Callon, especially in his pioneering edited collection, The Laws of the Markets (Callon 1998).

What Callon and STS scholars more generally bring to market studies is close attention to the role in economic life of measurement (from centuries-old weights and measures to today’s digital metrics), systematic practices such as those of accounting, the cognitive tools employed in those practices, and physical things and material devices, whether as old as clay tablets or as sophisticated as the ultrafast algorithmic trading systems that now play a dominant role in many financial markets (MacKenzie 2021). Those are not matters of mere detail, STS-influenced scholars argue, but can shape economic life profoundly. A strong, specific form of that argument is the thesis of the “performativity of economics”: the idea that economics – which needs to be conceived of here quite broadly, not just as the academic discipline – is not simply the analysis of economic phenomena “from the outside,” but at least sometimes is enacted – i.e., “performed” – within economic life (Callon 1998, 2007).6 Mathematical models of financial markets, for example, sometimes influence patterns of prices in those markets (MacKenzie 2006; MacKenzie and Millo 2003).

Callon is a central member of a group of scholars within STS – including his colleague, the late, much-missed Bruno Latour, and also, among many others, Madeleine Akrich, John Law, and Annemarie Mol – who developed what is sometimes called “actor-network theory.” To present this in full is beyond the scope of this book (see, e.g., Latour 2005), but one productive way of thinking about it is as a shift from a sociology of nouns – “the economy,” “society,” “culture” – to a sociology of verbs. The economy, for example, should not be thought of as an already existing “thing,” but as something that constantly has to be brought into being, that constantly needs to be enacted. The researcher’s focus thus needs to shift from “the economy” to “economization” (Caliskan and Callon 2009, 2010), in other words, to the rendering of relations and entities as “economic.” Digital advertising, for instance, involves the attempt – sometimes successful, sometimes not, as we shall see in this book – to turn your attention to your phone or laptop’s screen (itself not an intrinsically economic phenomenon) into something economically valuable. In other words, digital advertising seeks to “economize” your attention, indeed to “marketize” it, to turn it into something that can be bought and sold as an advertising opportunity.

Another crucial aspect of the work of Callon and those influenced by him is consistent attention to materiality. That is at the heart of the threefold overall argument of Inside Digital Advertising. First, we are going to revive an old term, megamachine, to capture the materiality, the scale, and the environmental impact of digital advertising’s technologies. Second, we will emphasize what we’ll call the material political economy of those technologies. Like any machine, advertising’s megamachines can be designed and configured in materially different ways, and the differences are often economically consequential and in a broad sense political. Third, those issues are at the heart of platform power. That power is multifaceted and sociotechnical – i.e., inextricably both social and technological – and in chapter 7 we will lay out what this book reveals about its components.

Megamachines and material political economy

When computers first became everyday devices, it was easy to think of the digital world as somehow non-material: as “virtual,” as constituting a realm of “cyberspace” that was inherently different from the mundane materiality of daily life.7 No longer. It has become inescapably clear that the digital world is underpinned by a vast, energy-consuming, ever-growing infrastructure. Anyone who still needed convincing of this would surely have changed their mind in September 2024, when it was announced that a mothballed nuclear reactor at the most famous civil nuclear site in the United States, Three Mile Island in the Susquehanna River, was being brought back on stream in a 20-year deal to supply power to Microsoft’s computer datacenters (McCormick and Smyth 2024; see also Figure 1.1 below). The following month, Google and Amazon both signed deals with start-up companies developing “small modular” nuclear reactors. Google, for example, has ordered at least six from Kairos Power, which is designing reactors that will be cooled with molten lithium and beryllium fluoride salts, not the traditional water (Moore 2024; Smyth 2024).

The term megamachine, which we are using to capture the sheer scale of the materiality of today’s digital world, was coined by the social critic and polymath Lewis Mumford to refer to “a radically new type of social organization”: the armies of disciplined people who, though equipped with only basic tools, built giant monuments such as the Pyramids and the flood control and irrigation systems of ancient empires. They formed “an archetypal machine,” but one “composed of human parts,” writes Mumford (1967: 11). In more recent times, Mumford went on to argue, “the ancient megamachine” has been “resurrected” with alarming consequences, and given “a more perfect technological structure, capable of planetary and even interplanetary extension…. With nuclear energy, electrical communication, and the computer, all the necessary components of a modernized megamachine at last became available” (Mumford 1971: 166, 274).

Figure 1.1 Powering the digital economy

The nuclear energy site at Three Mile Island in the Susquehanna River in 2014, photographed from Middletown, Pennsylvania. On the right, with steam rising from them, are the cooling towers of reactor Unit 1, now being recommissioned to power Microsoft’s datacenters. On the left are the cooling towers of Unit 2, no longer operational. Unit 2’s near-meltdown in 1979 remains the most serious civil nuclear power incident in the US.

Source: Photograph by “Z22,” own work, https://creativecommons.org/licenses/by-sa/3.0/ CC BY-SA 3.0 license.

We are in no sense followers of Mumford, and examining his – in our view, overly broad-brush – criticism of centralized technologies and “the technocratic prison” (Mumford 1971: 435) would lead us far beyond the confines of our topic.8 His tendency to think and write about “the megamachine” in the singular is not helpful: for us, megamachines are inherently plural. It is nevertheless useful to have a word, “megamachine,” to capture large-scale assemblages of people and machines that often have characteristics quite different from those of their component parts. The presence of people, it is worth emphasizing, remains important. Even Google, developer and operator of the archetypal digital megamachine, still employs large numbers of people: its parent corporation, Alphabet, had 182,500 employees in December 2023.9 Although digital advertising’s core processes are automated, human beings, personal relationships among them, and their interests and beliefs are all still crucial to the design, construction, and use of its systems.

The relationship of today’s digital megamachines to their non-human components is that they inhabit giant assemblages of physical machines. “Inhabit” is the correct word because the particular physical machines that make up a megamachine can change from minute to minute. A digital megamachine is in that sense a metamachine: a material computational process, or set of processes, running on a huge, possibly ever-changing, set of physical machines.

The megamachines discussed in this book are of two main types. The first inhabit a giant, tightly integrated assemblage of physical machines, with a single corporate owner, such as Google/Alphabet or Meta. Those assemblages, discussed in chapter 3, are not simply accidental aggregates but, to a substantial extent, consciously designed, and their component parts work together, not always seamlessly, but with relatively limited friction.

Google Search, which we will discuss in more detail in chapter 3, has a strong claim to be the original digital megamachine of this first kind. The title of an early article by three Google engineers, “Web Search for a Planet” (Barroso, Dean, and Hölzle 2003), gives a sense of the magnitude of Google’s ambition: to have its systems ingest and index nearly the entirety of the already extremely large World Wide Web. That goal was already hugely demanding even at Google’s launch in 1998, and its difficulty grew roughly a million-fold over the following decade, as the number of Google searches and the number of webpages to be searched each increased by a factor of around a thousand (Dean 2010).

Figure 1.2 Housing a megamachine

This photograph by Robert Webster of the Google datacenter, Mayes County, Oklahoma, gives a sense of the physical size of Google’s “warehouse-scale” computers.

Source: Photograph by “Xpda,” own work, https://creativecommons.org/licenses/by-sa/3.0/ CC BY-SA 3.0 license.

To achieve scale (a crucial characteristic of the powerful platforms discussed in this book), Google’s engineers had to rethink what a computer was. It shouldn’t be thought of as a single machine, they realized, but as an ensemble of thousands, tens of thousands, or even more computers packed into a datacenter, such as that shown in Figure 1.2. “[W]e must treat the datacenter itself as one massive warehouse-scale computer,” wrote Google engineers Luiz André Barroso and Urs Hölzle (2009: vi). As we will discuss in chapter 3, the individual computers on which Search and other Google services ran were cheap, individually unreliable machines, but by fusing them together into a few dozen giant “warehouse-scale” computers, each housed in a strategically located datacenter to ensure worldwide coverage, key processes could run uninterrupted, globally, even in the face of multiple failures of these individual machines.

A megamachine of this first type, involving giant streams of data (“big data,” as it was starting to be called) being processed by warehouse-scale computers with a single corporate owner, can still be programmed by the owner’s engineers. The task, however, needs to be tackled differently from the traditional programming of an individual computer. Crucial to this was what was to become a hugely influential approach to programming, MapReduce, first developed at Google in 2003 (and described publicly in 2004) by two engineers central to the programming of Google Search, Sanjay Ghemawat and Jeff Dean.10

MapReduce enabled Google’s programmers to divide up a giant computational task among huge numbers of machines without having themselves to tackle the complexities of fusing individually unreliable machines into a smoothly functioning megamachine. With MapReduce, the “run-time system,” not the human programmer, “takes care of the details of partitioning the input data, scheduling the program’s execution across a set of machines, handling machine failures, and managing the required inter-machine communication” (Dean and Ghemawat 2004: 1).
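The division of labor described above can be made concrete with a toy sketch. It is not Google's implementation: it runs in a single process, ignores machine failures entirely, and every name in it (map_reduce, count_words, total, pages) is hypothetical. It shows only the split that the quotation points to. The programmer supplies a "map" function and a "reduce" function, and a generic run-time routine handles partitioning the input and grouping the intermediate results.

```python
from collections import defaultdict


def map_reduce(inputs, mapper, reducer, n_partitions=4):
    """Toy stand-in for the run-time system (single process, no fault handling)."""
    # Partition the input; in a real system each slice would go to a different machine.
    partitions = [inputs[i::n_partitions] for i in range(n_partitions)]

    # Map phase: apply the programmer's mapper to every record and
    # group the emitted values by key (the "shuffle" step).
    intermediate = defaultdict(list)
    for partition in partitions:
        for record in partition:
            for key, value in mapper(record):
                intermediate[key].append(value)

    # Reduce phase: combine all values that share a key.
    return {key: reducer(key, values) for key, values in intermediate.items()}


# The only code the "programmer" writes: what to emit, and how to combine it.
def count_words(page):
    for word in page.lower().split():
        yield word, 1


def total(_word, counts):
    return sum(counts)


pages = ["ads fund the web", "the web shows ads", "ads ads ads"]
word_counts = map_reduce(pages, count_words, total)
```

Here count_words and total say nothing about partitions or machines. In this sketch, that separation is the whole point: the same two functions would be unchanged whether the run-time spread the work over four slices or four thousand.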

The second type of megamachine that we will discuss has no single owner and is an emergent assemblage rather than consciously designed. While parts of it can be programmed, it cannot be programmed as a whole. Its component parts operate more or less in concert, but typically with greater friction than megamachines of the first type. Digital advertising’s longest-established form, Web-display advertising (discussed in chapters 2 and 4) is, for example, a megamachine of this second type. It consists in good part of a plethora of interacting systems deployed by large numbers of different firms, mostly of modest size. Google’s systems also play central roles in that megamachine, but their centrality is fiercely contested, as we shall see in chapter 4.

Megamachines and giant warehouses stuffed with tens of thousands of computer servers may seem very alien from everyday life, but these megamachines also inhabit your laptop and your smartphone. You can hold your phone in the palm of your hand, and if its battery still has charge it can run without interaction with the external world, but it is often part of at least one megamachine, some parts of which can be so big that they can almost be seen from space.11 If, for example, your phone is an iPhone, much of the time it forms part of a megamachine owned and controlled by Apple. Use your phone, however, for a Google Search, and that megamachine clicks into action. Use it to visit a social media platform, and your phone then forms part of that platform’s megamachine. The other apps you use, the ads you are shown within them, the elaborate apparatus that seeks, often with some difficulty, to measure and optimize the efficacy of those ads – those are often contested material terrain, with different megamachines contending for control.

Our reason for conceptualizing digital advertising, and digital economies more generally, as consisting in good part of megamachines is not simply to underline their scale but to emphasize design. Machines can be designed differently, and material practices can be conducted differently, and which ways prevail is often not simply an issue of technical efficiency but also of power relations, sometimes including those of class, race, and gender.12 Megamachines, too, can be designed and configured differently, and we would argue that it is important consciously and explicitly to consider how to redesign megamachines even of the second, emergent rather than planned, type.

That is in part an issue of environmental politics, given megamachines’ huge consumption of energy and water, the latter used in datacenters’ cooling systems to dissipate the heat generated by enormous arrays of computer servers. But there are other crucial design issues too, including one that we explore in chapters 4 and 5. Should your phone or your laptop simply be a relatively passive appendage to the relevant megamachine, sending information on your digital activities to the megamachine’s more central systems, and receiving from them ads decided on by those systems? Or should your device play a more active role, itself running auctions for your attention, and storing important data, such as the record of your taps on ads, in its own memory, rather than immediately sending that data to other parts of the megamachine?

Issues such as those make clear that digital economies are not simply material economies (as their huge electricity consumption makes evident) but spheres of material political economy. The challenges to Google and Facebook/Meta that we will discuss in chapters 4 and 5 were attempts to reconfigure megamachines, attempts that were economically consequential and, in a broad sense, political. The initiative we describe in chapter 4, for example – to move a crucial part of the market for Web-display ads away from Google’s datacenters and into your laptop or phone – involved an effort to change the balance of power between Google and publishers (especially news publishers) and to boost publishers’ advertising revenues. It was thus a prime example of material political economy.

Our notion of material political economy has its roots in science and technology studies, in particular the idea of “material politics” put forward by John Law and Annemarie Mol (2008) and Andrew Barry (2013). Again, we think about materiality in terms of verbs, not nouns. In particular, don’t take “material political economy” to mean an emphasis on the hardware of computer systems rather than software. Instead, think about material political economy in terms of the difference between the verbs should, must, and can.

For example, Apple’s challenge (described in chapter 5) to Facebook/Meta, other mobile-phone apps, and in-app advertisers involved Apple materially restricting their access to the identifier number of each iPhone, known as its IDFA (Identifier for Advertisers). Apple could have said that app developers should not use IDFAs to track users without their permission, or even that they must not do this. Instead, as we will see in chapter 5, Apple’s engineers altered iPhones’ operating system so that app developers cannot do this, unless that system contains the electronic record of a tap by the user materially consenting to tracking. That, for us, makes Apple’s move an instance of material political economy, a change in what can and cannot happen, which is economically consequential and in a broad sense political. And the fact that the change was implemented in code – it took the form of an operating system update, rather than an alteration to the physical hardware of iPhones – doesn’t stop it being material political economy.

The change that Apple’s engineers made also teaches us something important about megamachines. They can be socially powerful and physically big – “macro” – but they can pivot on things that seem merely “technical” and are sometimes physically tiny: “micro.” The crucial identifier, your iPhone’s IDFA, is an example. It is a 32-digit number electronically encoded in a tiny portion of the silicon-chip memory of a small device that you carry around with you almost all the time. If you have an Android phone, its memory contains an equivalent 32-digit number, Google’s version of Apple’s IDFA. Physically minuscule, these numbers make a big difference. As we will describe in chapter 5, their reliable functioning helped platforms that took the form of mobile-phone apps gain size, global scope, and power.
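
The contrast between should, must, and cannot can be made concrete in a few lines of code. The sketch below is purely illustrative and is not Apple's code: the class and method names are hypothetical, it assumes that the 32 digits of the identifier are hexadecimal (as in a standard 128-bit UUID), and it models refusal by handing back an all-zero identifier. A "should" or a "must" would live in a policy document; a "cannot" lives in the branch of the function that decides what an app receives.

```python
import uuid

# Assumption of this sketch: a refused request receives an all-zero identifier.
ZERO_ID = uuid.UUID(int=0)


class Device:
    """Hypothetical model of a phone that stores one advertising identifier."""

    def __init__(self):
        # 128 random bits, conventionally written as 32 hexadecimal digits.
        self._ad_id = uuid.uuid4()
        # Electronic record of which apps the user has tapped to allow.
        self._consented_apps = set()

    def record_consent_tap(self, app_name):
        """Store the user's tap consenting to tracking by one app."""
        self._consented_apps.add(app_name)

    def advertising_id(self, app_name):
        """Return the real identifier only if a consent record exists."""
        if app_name in self._consented_apps:
            return self._ad_id
        return ZERO_ID


phone = Device()
print(phone.advertising_id("example_app"))      # all zeros: no consent recorded
phone.record_consent_tap("example_app")
print(len(phone.advertising_id("example_app").hex))  # number of hex digits
```

Nothing in the sketch asks the app to behave well. Without the recorded tap, the identifier simply is not available to it.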

A megamachine, at least of the first of the two types discussed above, is a “solid durable macro-actor,” to borrow a phrase from Michel Callon and Bruno Latour’s first exposition in English of their actor-network theory (1981: 283). As they emphasize, however, we should not take the existence of such macro-actors for granted, but always investigate how they come into being. An actor – in Callon and Latour’s view, “[a]ny element which bends space around itself,” not just a human being – “grows with the number of relations he or she can put, as we say, in black boxes,” which function reliably, and the contents of which “no longer need … to be reconsidered” (1981: 284–6). That process, according to Callon and Latour, is what creates macro-actors such as megamachines and, at least in part, is what gives them their power. MapReduce, for example, was centrally involved in that process as it played out in Google: it put the megamachine’s complexities into what could be treated as a reliably operating opaque box, so enabling Google’s programmers to write code that would run on a giant scale, but without their having themselves continually to wrestle with those complexities.
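
To give a sense of what such a black box looks like from the programmer's side, here is a toy, single-machine sketch of the MapReduce pattern. It is an illustration under stated assumptions, not Google's implementation: the function names are ours, and everything that made the real system hard (spreading work across thousands of fallible machines, retrying failed tasks) is exactly what is left out. The programmer supplies only a map function and a reduce function; the grouping step in between is handled for them.

```python
from collections import defaultdict
from typing import Callable, Dict, Iterable, Iterator, List, Tuple


def map_words(document: str) -> Iterator[Tuple[str, int]]:
    """Map step: emit a (word, 1) pair for every word in one document."""
    for word in document.lower().split():
        yield word, 1


def reduce_counts(word: str, counts: Iterable[int]) -> int:
    """Reduce step: add up all the counts emitted for one word."""
    return sum(counts)


def run_mapreduce(
    inputs: Iterable[str],
    mapper: Callable[[str], Iterator[Tuple[str, int]]],
    reducer: Callable[[str, Iterable[int]], int],
) -> Dict[str, int]:
    """Toy in-memory driver: map every input, group by key, then reduce."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for item in inputs:
        for key, value in mapper(item):
            groups[key].append(value)   # the "shuffle": collect values by key
    return {key: reducer(key, values) for key, values in groups.items()}


documents = ["ad auction", "ad exchange", "auction"]
print(run_mapreduce(documents, map_words, reduce_counts))
```

In the real system the driver is the opaque part: code like `map_words` and `reduce_counts` stays short while the machinery underneath it is scaled up.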

The crucial role of Apple’s IDFAs in megamachines that take the form of mobile-phone apps meant that when Apple in 2021 made default access to IDFAs by apps and advertising systems impossible, it was seriously disruptive. That case is a little unusual, though, in that making something impossible to do is an extreme form of material political economy, one that is difficult to achieve given the unpredictability of technical systems and the ingenuity of human beings seeking to subvert them. Making something hard or awkward to do is more common. Latour gives a simple everyday example: the keys to many European hotel rooms, prior to today’s swipe cards. To avoid the nuisance and expense of having a new key cut, or perhaps for security reasons, hotel owners wanted to stop guests leaving the hotel with their keys in their pockets or bags. As Latour points out, one way of doing that – the should way – was to have a sign saying: “Please leave your room key at the front desk before you go out.” A “should,” however, can always be ignored, and even changing the sign to “you must leave your key at the desk” might have been insufficient. So hotel owners acted materially, and had a heavy weight added to each key, so that it was awkward for the guest to carry the key around (Latour 1991: 104).

Issues of what it is possible, quick, even easy, to do – and what is awkward, slow, or impossible to do – are pervasive in digital life and appear many times in the chapters that follow. Sometimes, there is a clear intention: Apple’s senior managers and engineers plainly knew what they were doing when they restricted access to iPhones’ IDFAs. Material systems, though, are not simply the result of human intentionality. They often have features or exhibit behavior that nobody intended, and it would be mistaken to restrict our discussion to features of such systems only where there is evidence of human intent. Sometimes, indeed, we simply lack the data to infer intent, an issue that we will discuss in the section below on our data sources.

Platforms and platform power

At the core of digital advertising are a small number of platform-owning corporations, notably Alphabet (Google’s parent), Meta, and Amazon. How should their platforms be conceived of and analyzed?

One approach we take is to think of a platform as a “stack” of economization processes, in the sense (already explained) of processes that render things economic. A “stack” is a layered, interacting set of computational processes, and our interviewees often refer to the various systems employed in the advertising market, such as the demand-side and supply-side systems referred to above and the ad servers that we will discuss in chapters 2 and 4, as the advertising technology or “AdTech stack.”

What we see as stacked in a platform, however, are not just computational processes but processes of economization. Again, digital advertising offers a simple example. As noted above, users’ attention is marketized. But marketization is not the only mode of economization. Gifts are a form of economization, at least when they are viewed, as they are by anthropologists, as carrying with them obligations, as creating a non-monetary form of debt. So is barter, again if conceived of anthropologically as something more complicated than just exchange without the medium of money: as “dynamic, self-contradictory, and open-ended … deriv[ing] from, and creat[ing] relationships” (Humphrey and Hugh-Jones 1992: 11, 17).

Google, for example, marketizes users’ search queries, although, as already suggested, it is often Google’s own systems, not advertisers themselves, that bid in the billions of auctions that determine which ads are shown in response to a commercially relevant search term. Google, however, also “gifts” or barters ordinary, “organic” search results (i.e., the results that aren’t ads) to users, who in return provide the platform with potentially valuable data: see, e.g., Elder-Vass (2016) and Fourcade and Kluttz (2020).13 How best materially to configure this stacking is a crucial problem for the developers of platforms, as we shall see when we discuss in chapter 3 the early years of both Google and Facebook, the founders of which were acutely aware of the possibility of getting this wrong.

We lay out the view of platforms as stacked economization processes elsewhere (Caliskan, MacKenzie, and Callon 2024; Caliskan, Callon, and MacKenzie forthcoming). Here, however, we think it is also important to tackle the issue of platform power, which is an essential counterpart of our notions of megamachine and material political economy. There’s a widespread, correct intuition that platforms of the kind discussed in this book are powerful. The best-known expression of that intuition is Shoshana Zuboff’s The Age of Surveillance Capitalism (2019), which famously portrays nearly all-powerful surveillance capitalists, of which the big digital platforms (especially, in her view, Google’s platforms) are the prototype. Surveillance capitalists’ systems “know everything about us” and “not only know our behavior but also shape our behavior at scale” (Zuboff 2019: 8, 11; emphases in original). That argument, in our view, is overstated: digital advertisers’ data is often “broken data” in the sense of Pink et al. (2018), and while advertisers might wish to shape behavior, their capacity actually to do that is frequently quite limited.

That platform power can be overstated does not, however, imply that it does not exist. It is, as we’ve said, intrinsically multidimensional. Zuboff is right that one aspect of it is “data power.” Big Tech platforms “own vast quantities of user information” (Iliadis and Russo 2016: 1), even if they usually do not in any simple sense “marketize” or sell that data: they hoard it, making it an asset, and can thus “control and manage the very information markets depend upon” (Birch 2023: 14). Platforms also have “algorithmic power,” in Taina Bucher’s sense: “[i]n ranking, classifying, sorting, predicting, and processing data, algorithms are political in the sense that they help to make the world appear in certain ways rather than others” (2018: 3). And the big-platform corporations enjoy a degree of market dominance within digital advertising that troubles regulators such as the United Kingdom’s Competition and Markets Authority (CMA 2020).

In this book, however, we also follow platform researchers Thomas Poell, David Nieborg, and colleagues, and seek to go beyond “monolithic perspectives on platform dominance,” such as Zuboff’s, and to broaden the “conceptualisation of platform power” (Poell, Nieborg, and Duffy 2023: 1391; van Dijck, Nieborg, and Poell 2019: 1). In particular, we will emphasize platform power’s megamachine materiality. For example, Google’s capacity to build reliable “warehouse-scale” computers out of large numbers of cheap, individually fallible machines, and its ability to use MapReduce to simplify the programming of those computers were vital components of its platform power.

We will also highlight the importance to platform power of systematicity, which again we’ve already touched on implicitly. An instructive contrast here is between Google’s integrated AdTech stack and the rest of the “open marketplace” in Web-display ads, with its large number of smaller firms and only partial centralization via Google’s systems. The open marketplace operates at megamachine scale, but frictions and “broken data” abound, for example in the process of “cookie matching” that we will touch upon in chapter 2. In contrast, Google operates an integrated system (as Rieder 2022 emphasizes), and that was and is important to its platform power, forming for example a major obstacle to the material challenge to Google that is the focus of chapter 4.

Data sources

Our investigation of digital advertising draws upon three sources of data. First is the trade press, especially the daily Ad Exchanger, which covers digital advertising in particular depth.14 Second, we have participated in six face-to-face advertising-sector conferences (three in the United States, three in the United Kingdom), twelve online conferences, 39 other online events such as webinars, and two online training courses. Formal presentations to these conferences and webinars frequently resemble sales pitches, but conference panels and smaller events are less scripted, as was, for example, a short-lived flurry of AdTech discussions on Clubhouse. Even the sales pitches indirectly helped us identify tensions within digital advertising because they often implicitly position the product/service in question as a response to them.

Third, we have conducted 119 interviews with 96 practitioners of digital advertising, publishers, and related technical specialists. Interviews are semi-structured, typically 45–60 minutes long, and all but six were audio-recorded and transcribed. Apart from two particularly well-known (and therefore hard to anonymize) interviewees who have given us their permission to name them, we refer to interviewees using two-letter codes, assigned chronologically by the date of our (first) interview with them: see Table 1.1. Our canvas was initially deliberately broad: we sought interviewees from across digital advertising and at first simply pursued any opportunity to speak to people with firsthand experience of its practices and systems. It is only a slight exaggeration to say that initially we spoke to anyone who would speak to us.

Gradually, our data collection became more analytically led. We immersed ourselves thoroughly in the field, reading the trade press, taking part in as many sector meetings as possible, following the sector’s debates, and gradually learning about the field’s systems, practices, organizations, and specialized terminology. Immersion enabled us to identify specific systems, practices, and episodes (such as Apple’s 2021 restrictions on apps’ access to iPhones’ IDFAs) that are of particular analytical interest, and where possible to focus interviews on those, also seeking out further interviewees with involvement in them. Convenience, snowballing, and the analytically led selection of interviewees and evolving, interviewee-specific questions ruled out even rudimentary quantitative analysis (“N percent said X; M percent said Y”) of interviews but brought benefits in the concrete depth of what we learned. Proceeding iteratively in this way is inherently slow (our research began in 2019 and continues) but that has its virtues in providing insights into change through time.

We make no claim that our interviewees are in any sense “representative.” There is almost certainly overrepresentation of people in more senior positions because they are easier for us to identify and often more confident in speaking to researchers. Women are underrepresented: only a quarter (29 out of 119) of our interviews are with women. Our research is almost entirely restricted to digital advertising in the United States and Europe (see Table 1.1). We haven’t, for example, tried to investigate China, which is very different culturally and politically, with a large but differently structured advertising sector.

A shortcoming of a different kind is that we have found that Big Tech platforms often sharply constrain what employees can say about internal matters, making them wary of being interviewed and/or sometimes very guarded in what they say. In particular, we were not able to interview employees of either Meta or Apple for this book, so lack firsthand fieldwork data on internal processes in those corporations.

That creates a methodological danger that we have been careful to avoid: the risk involved, in the case of large corporations that are complex organizations, of inferring a unified corporate intent. “Politics,” including material politics, is to be found not only in the interactions among big corporations and between them and the wider world, including government, but also within them. Sometimes at least, what looks like a corporation’s “decision” should actually be seen instead as an outcome: a compromise between clashing internal forces, or the result of one such force prevailing over others. But we often lack the data to be sure, so in the chapters that follow we are often agnostic on questions of corporate “intent.”

Table 1.1 Interviewees

| | US | UK | Continental Europe | India | Total |
| --- | --- | --- | --- | --- | --- |
| Advertiser | AE, AG, AH, CV | AF, CD, CE | AQ, CC, DE, DF | CF, DG, DQ | 14 |
| Advertising agency | AB, AK, AL, AM, CJ, CK, DO | AI, AP, AX, AZ, BB, BL, CG, CL | AJ, AV, DL, DM, DN | | 20 |
| “Demand-side-oriented” AdTech, e.g. Demand-Side Platform (DSP) | AN, BI, CM, CO, CP, CR, DH, DI, DR | AC, AO, AU, AY, DD | CH | | 15 |
| “Supply-side-oriented” AdTech, e.g. Supply-Side Platform (SSP) | AS, BJ, BK, BQ, BR, BS, BT, BW, BX, BZ, CB, CN, CS, DK | BE, BF, BP | AT | | 18 |
| Major platform | AA, CQ, DC, DP | AR | | | 5 |

Other publisher

BN, BU,

AW, BA,

19

BV, BY, CA,

BC, BD,

CT, CU,

BG, BH,